The industry drivers (increases in computing power, cloud-storage capacity and usage, and network connectivity) are turning the data deluge into an urgent value proposition for most industries. As the overwhelming flow of information (customers’ profiles, sales data, product specifications, process steps, etc.) arrives in many formats and from many sources (IoT devices, social media sites, sales systems, and internal systems), leading companies must first establish their ground reality.

What: This ranges from general data classification categories (public, internal, confidential) to pinpointing future use cases, such as which AI/ML applications can exploit the data and what value they can deliver.

Why: Even before the ‘what,’ the strategic imperative or business growth envisaged from data must be carefully thought through.

Where: Based on the ‘what,’ the next level of informed thinking helps teams understand the strategy, architecture, and location of this data.

How: Then come the mechanics, such as data identification and tagging, alignment with the organization’s data classification policies, adherence to regulatory requirements, and the daily management activities of data access, correlation, and retention.

Who: This concerns the users, roles, groups, and business units, from establishing user access protocols to agreeing on the policies that govern data security, data aggregation, and controls.

When: The last part of the exercise concerns timing: the readiness needed to design, build, implement, and operate a data lake.
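The ‘how’ and ‘who’ items above can be made concrete with a small sketch. The Python below assumes a hypothetical catalog entry (`DatasetTag`) that carries a classification and a retention period, plus a hypothetical mapping of roles to clearances (`ROLE_CLEARANCE`). None of these names come from Synapse, Purview, or any other product; they only illustrate how tagging, retention, and access rules might fit together.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Classification(Enum):
    # The three general categories named in the 'what' item.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3


@dataclass(frozen=True)
class DatasetTag:
    """Hypothetical catalog entry attached to one data set in the lake."""
    name: str
    source: str                     # illustrative labels, e.g. "iot", "sales"
    classification: Classification
    retention_days: int             # how long the data may be kept
    ingested_on: date

    def expires_on(self) -> date:
        return self.ingested_on + timedelta(days=self.retention_days)

    def is_expired(self, today: date) -> bool:
        return today > self.expires_on()


# Hypothetical clearances: the highest classification each role may read.
ROLE_CLEARANCE = {
    "guest": Classification.PUBLIC,
    "analyst": Classification.INTERNAL,
    "data_steward": Classification.CONFIDENTIAL,
}


def can_read(role: str, tag: DatasetTag) -> bool:
    """Allow access only if the role's clearance covers the data set's class."""
    clearance = ROLE_CLEARANCE.get(role)
    if clearance is None:
        return False  # unknown roles get no access
    return clearance.value >= tag.classification.value


# Example: a confidential sales data set kept for 365 days.
sales = DatasetTag("sales_2024", "sales", Classification.CONFIDENTIAL,
                   retention_days=365, ingested_on=date(2024, 1, 1))
analyst_allowed = can_read("analyst", sales)
steward_allowed = can_read("data_steward", sales)
```

In practice these rules would live in the organization’s governance tooling rather than in application code; the sketch only shows the shape of the decisions that the checklist asks teams to agree on.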

While tools such as Microsoft’s Synapse and Purview ease the underlying automation and ETL implementation, data lakes and related data storage and analytics are complex topics.

To begin with, an effective data lake is a corporate repository that stores structured and unstructured data at any scale, whether in the cloud, on-premises, or in a hybrid setup. By implementing such a solution, companies gain efficiencies and can identify patterns that unlock new opportunities.

A deeper dive into the ‘what.’

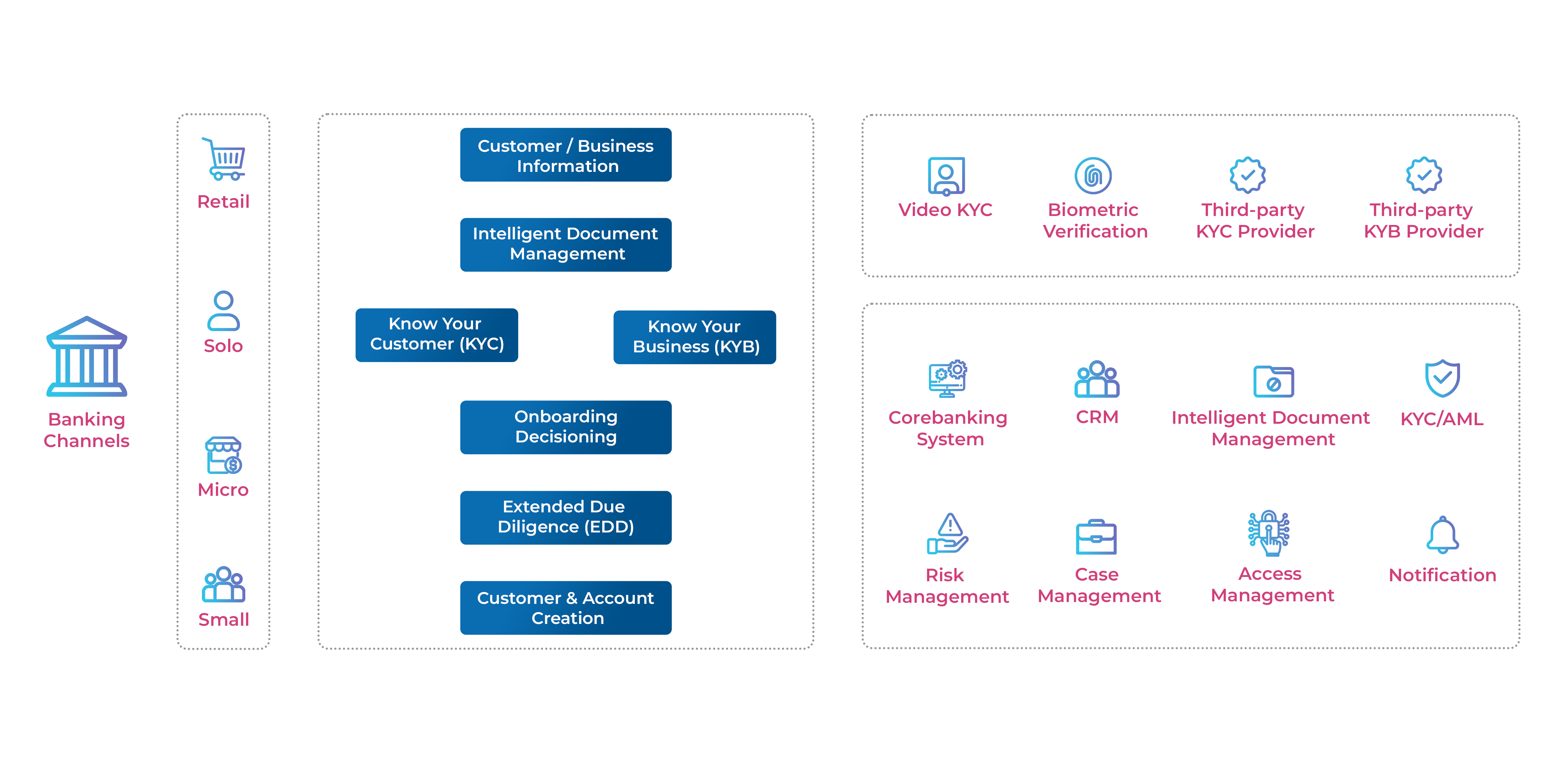

Delving into the “what” at the initial stage throws up exciting possibilities. For corporations working with a range of data across formats (structured, semi-structured, unstructured), it makes sense to implement a data lake. If they are working mainly with table-structured information (such as records in CRM or HR systems), a data warehouse is a more worthwhile investment than a data lake.
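As a rough illustration of this rule of thumb, the sketch below maps the set of formats a company works with to a suggested store. The function name `suggest_store` and the format labels are assumptions made for this example; a real decision would also weigh cost, skills, and existing systems.

```python
from typing import Iterable

# Assumed labels for the three format families mentioned in the text.
KNOWN_FORMATS = {"structured", "semi-structured", "unstructured"}


def suggest_store(formats: Iterable[str]) -> str:
    """Suggest 'data warehouse' for purely table-structured data, else 'data lake'."""
    seen = set(formats)
    if not seen:
        raise ValueError("at least one format is required")
    unknown = seen - KNOWN_FORMATS
    if unknown:
        raise ValueError(f"unknown format labels: {sorted(unknown)}")
    if seen == {"structured"}:
        return "data warehouse"  # e.g. CRM or HR records only
    return "data lake"           # any mix that includes non-tabular data


crm_only = suggest_store(["structured"])
mixed = suggest_store(["structured", "unstructured"])
```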

As mentioned above, a deeper dive into the ‘why’ is a must. Here, the implementation roadmap of the data lake must set out the plan for leveraging the data (process maps for data analysis, organization, and categorization).

Gauging the implementation difficulty for data lakes.

Bringing new sources into a data lake is effort-intensive, and inaccurate planning of continuous data acquisition will lead to serious ETL overhead. Additionally, the data lake processes must be measured for their cost and time trade-offs. If the resource requirements are prohibitive, companies must assess the data warehouse option, something that allows them to store data at minimum cost and then extract and transform it as and when needed.

Incorporating into the company’s culture.

A vital component of data lake implementation is a smooth transition: training employees in advance, reducing workloads in stages, staying open to learning new skills, embracing a flexible mindset, and encouraging inter-departmental cooperation. The nuances are unique, as each company culture responds differently to data lake implementation initiatives.

Along with the 5W and 1H checklist of data lake implementation, leading CDOs, CIOs, and CXOs are also aware of the stages a company goes through while building and integrating a data lake into its tech architecture. Here are the four stages, described broadly.

- Stage 1 – Landing/Drop zone (creating a data lake separate from core IT systems. Stored in raw format, internal data is complemented or enriched by external data sources).

- Stage 2 – Learn Fast (data scientists analyze the data lake to build prototypes for analytics programs).

- Stage 3 – Sharing loads (integrating with internal enterprise data warehouses – EDW. More detailed data sets are pushed into the data lake for assessing storage and cost constraints).

- Stage 4 – Forming a part of the core (the data lake replaces operational data stores, and businesses graduate to data-intensive applications like ML processing. Strong data governance protocols are put in place).
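To make Stage 1 more tangible, here is a minimal sketch of a landing zone on a local file system. It assumes a hypothetical layout of `root/source/YYYY/MM/DD/` and a hypothetical helper named `land_raw`. A cloud deployment would use object storage instead, but the idea is the same: keep the payload untouched and record a little metadata beside it.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def land_raw(root: Path, source: str, filename: str, payload: bytes,
             received_at: datetime) -> Path:
    """Store the payload unchanged under root/source/YYYY/MM/DD/ with a sidecar."""
    target_dir = root / source / received_at.strftime("%Y/%m/%d")
    target_dir.mkdir(parents=True, exist_ok=True)

    target = target_dir / filename
    target.write_bytes(payload)  # raw bytes, no parsing or transformation

    # Sidecar metadata lets later stages find and verify the file.
    sidecar = {
        "source": source,
        "received_at": received_at.isoformat(),
        "size_bytes": len(payload),
        "sha256": hashlib.sha256(payload).hexdigest(),
    }
    sidecar_path = target_dir / (filename + ".meta.json")
    sidecar_path.write_text(json.dumps(sidecar, indent=2), encoding="utf-8")
    return target


# Example: land one IoT reading in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    when = datetime(2024, 3, 5, 12, 0, tzinfo=timezone.utc)
    landed = land_raw(Path(tmp), "iot", "reading.json", b'{"t": 21.5}', when)
    landed_relative = landed.relative_to(tmp).as_posix()
```

Partitioning by source and arrival date is one common convention, not a requirement; the point is that nothing is transformed on the way in, which leaves the Stage 2 prototypes free to interpret the raw data as they need.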

Conclusion

In these times of data deluge, as more companies experiment with data lakes, the questions of how to harvest advantages from information streams and how to manage storage costs are essential. As with any new technology deployment, a total revamp of existing systems, processes, and governance models is necessary. An agile planning approach helps build the needed readiness in business capabilities, security protocols, talent pools, and integration with the enterprise’s existing architecture.