Kishan Sundar is a seasoned transformation leader with over 22 years of experience in technology and business strategy across diverse industries globally. He specializes in leading large-scale transformation programs, excelling in technology strategy, execution, and integration during mergers and acquisitions. Kishan is adept at managing teams, articulating enterprise-level technology strategies, and consistently delivering results. With a proven track record and deep expertise in both technology and business domains, he is a valuable pioneer in transformative leadership.

In an exclusive interview with CIOL, Kishan Sundar spoke about how generative AI is impacting personalized banking experiences and how banks are addressing security and privacy concerns. He also outlines what Indian banks can learn from their global counterparts.

Here is the detailed interaction:

How does generative AI impact personalized banking experiences, and how are banks addressing security and privacy concerns?

Generative AI is transforming the banking sector by improving customer service and streamlining operations. Banks are increasingly adopting Marketing Technology (MarTech) to offer personalized financial advice and activity-based product recommendations. The use of generative AI in these areas is aimed at being proactive, not just reactive, in customer service.

However, these advancements bring new challenges, especially in data privacy. To deliver personalized services, banks often rely on unmasked data, but this approach can compromise customer privacy. On the other hand, masking or obfuscating data undermines the uniqueness needed for tailored services. As a result, banks must strike a balance between personalization and privacy protection.

Another issue is bias and fairness in the training data for AI models. If the training data is too narrow or represents only a fraction of the customer base, it can lead to biased outcomes. To avoid this, banks need to ensure broader and more diverse datasets. Yet, training data is typically more exposed and vulnerable to security breaches than production data, presenting an additional data security risk. Much of the technical and functional work related to generative AI is conducted by third-party partners, which opens new security concerns. This arrangement requires banks to address security gaps and ensure the safety of customer data.

To mitigate these risks, banks must implement strong data protection measures such as encryption and strict access controls to prevent unauthorized access and data breaches. Banks should also maintain transparency in how they use generative AI, providing clear explanations about how customer data is processed, how decisions are made, and how insights are derived.

What changes in banking security result from generative AI, and how are banks adapting?

The primary impact of generative AI on banking security is the heightened risk of data exposure. To effectively utilize marketing technology services, third-party partners require complete access to personal information for accurate customer segmentation and personalized product recommendations. This involves sharing extensive personal data, raising concerns about privacy and security.

One key challenge is maintaining effective communication without compromising customer privacy. This often means sharing detailed personal information, potentially exposing a customer’s entire profile. Despite privacy regulations like CCPA and GDPR, their effectiveness can be limited when not strictly enforced, allowing companies to find ways around these rules for their own gain.

Banks often use production data to create personalized recommendations and minimize bias, which, while more secure than test data, still poses security risks. To address these challenges, banks are adopting differential privacy techniques. By introducing noise into the data or models, banks can protect individual privacy while maintaining the accuracy of AI-driven recommendations.
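To make the idea of "introducing noise" concrete, here is a minimal sketch of one common differential privacy technique, the Laplace mechanism, applied to an average account balance. It is an illustration under stated assumptions, not a description of any bank's system: the function names, the clipping bounds, and the `epsilon` value are all hypothetical, and the number of customers is assumed to be public.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw one sample from a Laplace(0, scale) distribution."""
    # The difference of two i.i.d. exponential variables is Laplace-distributed.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_mean(values, lower, upper, epsilon, rng):
    """Return a noisy mean of `values` using the Laplace mechanism.

    Values are clipped to [lower, upper] so that changing one customer's
    record can move the mean by at most (upper - lower) / n.
    """
    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)
    sensitivity = (upper - lower) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical balances; a smaller epsilon means more noise and more privacy.
balances = [1200.0, 850.0, 40000.0, 300.0, 7600.0]
estimate = private_mean(balances, 0.0, 10000.0, epsilon=1.0, rng=random.Random(42))
```

The trade-off mentioned in the interview shows up in the `epsilon` parameter: lowering it strengthens the privacy guarantee but makes the reported figure less accurate.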

Banks are also increasingly embracing federated learning. This approach trains models across data held at various sources, creating a decentralized system that doesn’t rely on a single dataset from one bank. Because participants share model updates rather than pooled raw records, banks can reduce the risk of reverse engineering and enhance overall security and privacy.

These changes reflect how banks are adapting to the security risks associated with generative AI. Through differential privacy and federated learning, banks aim to protect customer data while still providing personalized services.
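As a rough illustration of federated learning in its commonly described form, the sketch below has each participant train a tiny model on its own data and send back only the resulting weight, which a coordinator then averages. Everything here is an assumption made for the example: the one-parameter model `y = w * x`, the toy datasets, and the function names. Real deployments typically add protections such as secure aggregation that are omitted here.

```python
def local_update(weight, data, lr=0.01, epochs=20):
    """Run a few gradient-descent steps on one bank's private data."""
    for _ in range(epochs):
        # Gradient of the mean squared error for the model y = weight * x.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_round(global_weight, bank_datasets):
    """One round of federated averaging: only weights leave each bank."""
    updates = [(local_update(global_weight, d), len(d)) for d in bank_datasets]
    total = sum(n for _, n in updates)
    # Weight each bank's update by how many records it trained on.
    return sum(w * n for w, n in updates) / total


# Hypothetical (x, y) records held separately by two banks; both follow y = 2x.
banks = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)],
]

w = 0.0
for _ in range(10):
    w = federated_round(w, banks)
```

After a few rounds the shared weight `w` approaches 2, even though neither bank's records were ever sent to the coordinator.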

How are banks evolving with generative AI, and what can Indian banks learn from their global counterparts?

Banks are leveraging AI to enhance customer service and optimize operations, with key applications in credit scoring, risk management, and fraud detection. Yet, in India, cybercrime is particularly problematic due to two major issues. The first is inadequate security measures despite a high volume of digital transactions, indicating a need for robust real-time fraud detection mechanisms. The second issue is that while Indian banks are proficient in real-time transactions, their fraud prevention strategies are often underdeveloped.

To address these issues, Indian banks can learn from global institutions with advanced security protocols. Global banks use sophisticated fraud detection systems that can identify fraudulent activities in real-time, allowing them to flag suspicious transactions and prompt secondary authentication when needed.
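The flag-and-challenge flow described above can be sketched with a very simple statistical check. This is only a stand-in for the far more sophisticated systems the interview refers to: the function name, the z-score threshold of 3, and the sample amounts are hypothetical choices made for the example.

```python
from statistics import mean, stdev


def needs_secondary_auth(history, amount, threshold=3.0):
    """Return True when `amount` is unusually far from the customer's history."""
    if len(history) < 2:
        return True  # too little history to judge, so ask for a second factor
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return amount != mu
    # Flag amounts more than `threshold` standard deviations from the mean.
    return abs(amount - mu) / sigma > threshold


# Hypothetical recent transaction amounts for one customer.
past = [1200.0, 950.0, 1100.0, 1300.0, 1000.0]

routine = needs_secondary_auth(past, 1150.0)    # close to usual spending
unusual = needs_secondary_auth(past, 25000.0)   # far outside usual spending
```

In this sketch a flagged transaction is not blocked outright; the caller would use the result to decide whether to prompt for secondary authentication before approving it.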

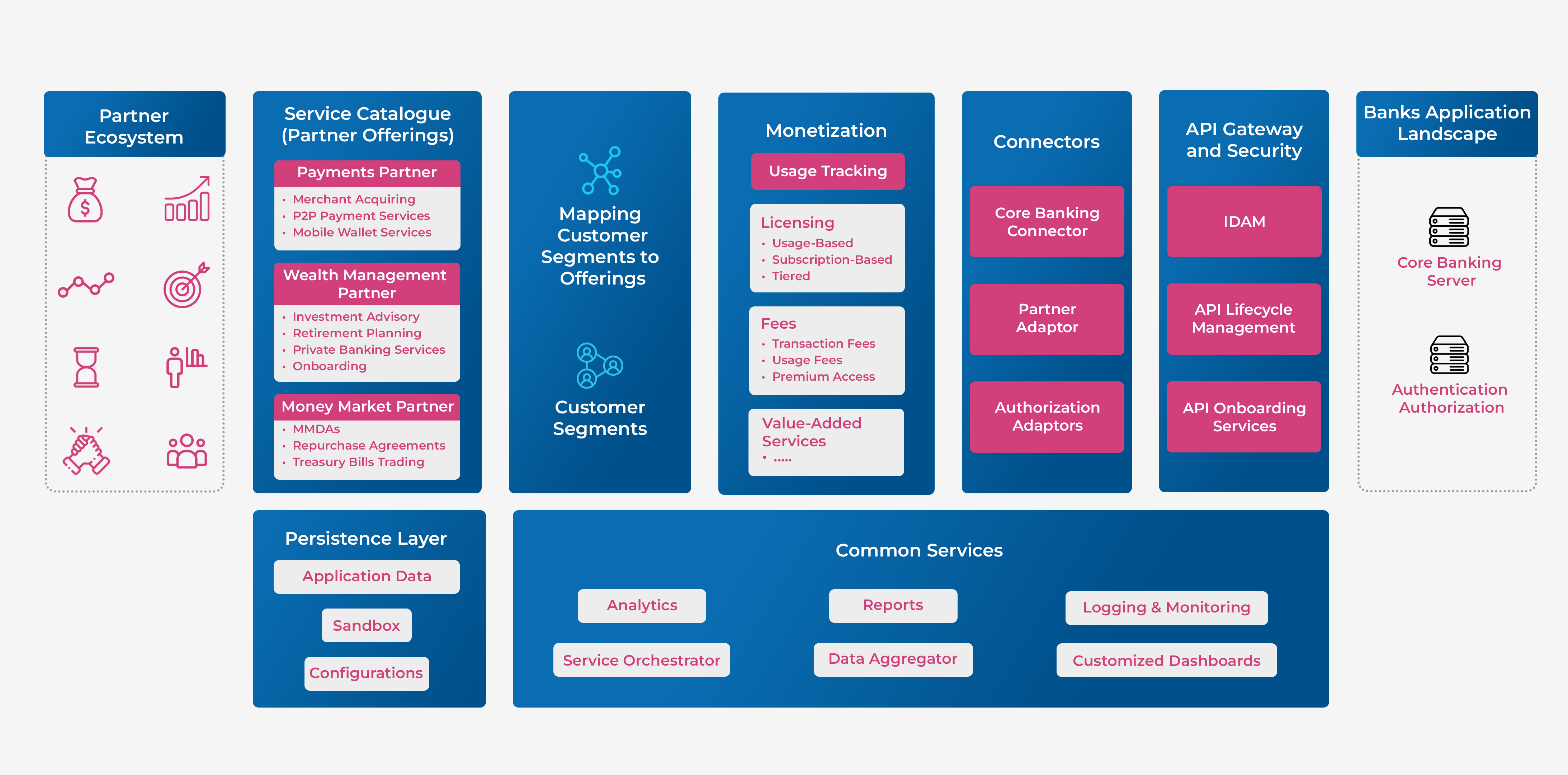

However, Indian banks excel in creating personalized customer experiences compared to their global counterparts. They are highly effective at understanding and catering to the specific needs of their customers. Indian banks employ advanced customer relationship management (CRM) systems, harnessing data analytics and AI to personalize every customer interaction.

This focus on personalization has resulted in tailored product recommendations and communication channels that resonate with customers. Indian banks have embraced digital technologies and omnichannel banking, providing a seamless experience across online, mobile, and offline platforms.

By blending innovative customer-centric strategies with advanced digital solutions, Indian banks have set a high standard for customer service. To continue evolving with generative AI, Indian banks can strengthen their security measures by adopting global best practices while maintaining their unique approach to personalized customer engagement.

How do generative AI, data security, and privacy intersect in banking, and how do banks navigate them during digital transformation?

In the early stages of digital transformation, banks focused on creating a multi-channel or omnichannel system or moving towards branchless banking. Now, as digital transformation evolves, the emphasis is on enhancing the security of these digital channels. The new goal is to shift from a reactive to a predictive approach, focusing on predictive monitoring and anomaly detection to ensure the security and reliability of these evolving digital channels.

During the previous wave of digital transformation, banks pushed for unique digital channels and branchless banking, encouraging customers to use mobile apps or web platforms for their transactions. This rapid rollout, however, led to a mix of legacy and new technologies, with varying technology stacks like 2G, 3G, and 4G, creating security challenges.

The current phase involves fortifying these systems to remove security vulnerabilities and ensure robustness. Banks are focusing on making systems more secure and responsive, addressing potential weaknesses before they can be exploited by cybercriminals. Generative AI plays a crucial role in this effort, helping banks identify areas of vulnerability and potential data leakages. This proactive approach allows banks to reinforce their security infrastructure, reducing risks and protecting digital channels from external threats.

By applying generative AI, banks can anticipate security risks and build stronger defenses, thereby improving data security and privacy. This intersection of generative AI, data security, and privacy is crucial as banks continue their digital transformation journey. By enhancing system resilience and safeguarding customer information, banks aim to maintain trust and confidence in their digital services.

How does generative AI help banks detect fraud and address concerns about bias in customer interactions?

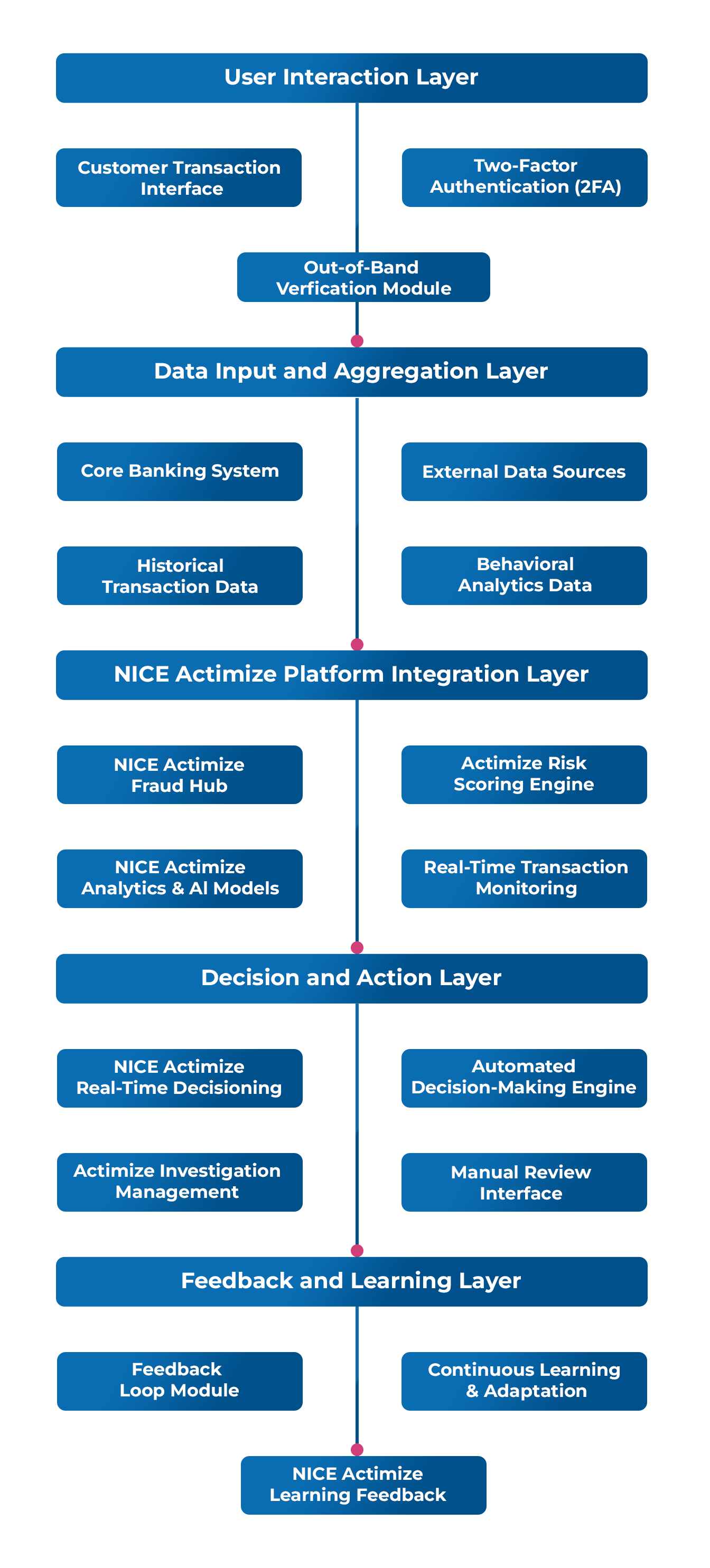

The use of Generative AI can help in building strong defense mechanisms, ensuring that banks are protected against cybersecurity threats. Over the past few years, AI technologies have been integrated into the field of fraud detection, which has brought about significant changes in risk management and fraud detection within financial institutions.

Generative AI plays a crucial role in enabling banks to detect fraud through various techniques, including anomaly detection, behavioural analysis, and risk assessment. Banks are now utilizing behavioural analytics to monitor customer interactions and identify anomalies in real time. By automating the fraud detection process, banks can detect and prevent fraudulent transactions more effectively, ensuring the safety of customer assets and maintaining trust. With the help of AI algorithms, banks can manage risks efficiently and optimize their loan portfolios by accurately assessing creditworthiness and risk profiles.

When discussing bias, it’s crucial to address human bias, particularly that of the programmers who provide the data. This bias can permeate the system at two stages: during data input and when interpreting the output. Generative AI can play a pivotal role in mitigating bias in customer interactions by promoting diversity in training data and leveraging feedback loops. Additionally, there’s a pressing need to democratize data input and make language models open-sourced to ensure inclusivity and equity in serving diverse user populations.

Extracting data involves more than just the input; it also entails how questions are framed, as responses are influenced by this framing. To mitigate bias, it’s essential to engineer prompts so that the questions they pose are bias-neutral. Instead of accepting prompts at face value, there’s often a tendency to exploit loopholes. Therefore, refining prompts to elicit desired responses rather than exploiting the initial prompt is essential. The goal is to create prompts that are challenging to manipulate, thus promoting holistic responses. This could involve filtering prompts to ensure neutrality and making them more holistic and less pointed. There should be more focus on making both incoming prompts and outgoing data more cohesive and neutral, thereby reducing the potential for exploitation or bias in AI systems.

What role do regulators play in overseeing generative AI in banking, and are there guidelines in place?

A recent report by the International Data Corporation (IDC) highlights a significant uptick in the adoption of Generative AI (GenAI) across the Asia/Pacific region, with expenditures projected to soar to $26 billion by 2027. Despite India’s prominent stance in embracing artificial intelligence, the absence of specific regulatory laws poses challenges. While ethical guidelines are in place, their lack of legal enforcement creates ambiguity.

In this evolving landscape, regulatory bodies play a pivotal role in overseeing generative AI in banking. Recognizing the potential risks and complexities, regulators are actively engaging with industry stakeholders, experts, and policymakers to establish clear frameworks and guidelines. This proactive approach aims to ensure the responsible and ethical utilization of generative AI, promoting innovation while safeguarding against potential pitfalls in the banking and finance sector.

The General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) are two significant frameworks that emphasize individual data rights. GDPR, which is implemented in the European Union, requires organizations to obtain explicit consent, provide transparency in data usage, and allow individuals to request the deletion of their data. Similarly, the CCPA in California enables consumers to know about, delete, and control the sale of their personal information. Both regulations emphasize the importance of transparency, user consent, and empowering individuals with control over their data.

When it comes to banks, it’s important to carefully consider how generative AI is used in areas such as fraud detection, customer interactions, and risk management. Protecting consumers is the top priority, and regulatory bodies need to find a balance between promoting innovation, maintaining financial stability, and safeguarding data privacy.

Can you share successful uses of generative AI in banking, and what should banks consider when implementing it?

Since its inception, generative AI has revolutionized the banking landscape, offering transformative solutions in critical areas such as data privacy, fraud detection, and risk management. Its impact has been nothing short of groundbreaking for banks and financial institutions worldwide.

One of the most significant advantages of generative AI lies in its ability to enhance customer experiences. In an industry where customer satisfaction is paramount, generative AI has empowered banks to engage customers positively and effectively. By personalizing interactions and services, banks can create tailored experiences that resonate with customers, fostering loyalty and trust.

Moreover, generative AI has played a pivotal role in automating mundane tasks, freeing up valuable resources, and enabling staff to focus on high-value activities. This automation has not only boosted operational efficiency but has also paved the way for innovation. Banks can now explore new avenues to differentiate themselves in the competitive marketplace, driving revenue growth and reducing operating costs simultaneously.

When implementing generative AI in banking, several considerations are crucial:

1) Regulatory Compliance:

Guidelines governing the use of AI in banking must be followed, particularly in areas such as data protection laws and anti-money laundering regulations. Regulatory requirements must be met throughout the implementation process to ensure compliance and mitigate legal risks.

2) Mitigating Bias:

Measures should be taken to promote fairness and ensure transparency in decision-making processes. By identifying and mitigating biases, banks can uphold ethical standards and prevent discriminatory outcomes in AI-driven operations.

3) Feedback Loops:

Banks should establish feedback loops to continuously monitor and evaluate the performance of generative AI systems. By gathering insights from regulatory audits and customer feedback, banks can refine their AI models to align with regulatory requirements and evolving customer preferences. This iterative approach ensures that generative AI systems remain effective and compliant over time.

What future trends in generative AI will shape banking, and how can banks prepare while maintaining trust and transparency?

In today’s AI-driven landscape, banks must stay updated with the latest developments to leverage the potential of AI for growth and innovation. The McKinsey Global Institute (MGI) estimates that across the global banking sector, generative AI could add between $200 billion and $340 billion in value annually, or 2.8 to 4.7 percent of total industry revenues, largely through increased productivity. AI-powered models are transforming banking, creating new possibilities and challenges.

Generative AI presents numerous opportunities for banks to enhance their operations and customer experiences. For instance, in credit scoring and risk assessment, generative AI can analyze non-traditional data sources, such as social media and online behavior, to assess creditworthiness and risk more accurately. This can expand access to credit for deserving populations, promoting financial inclusion.

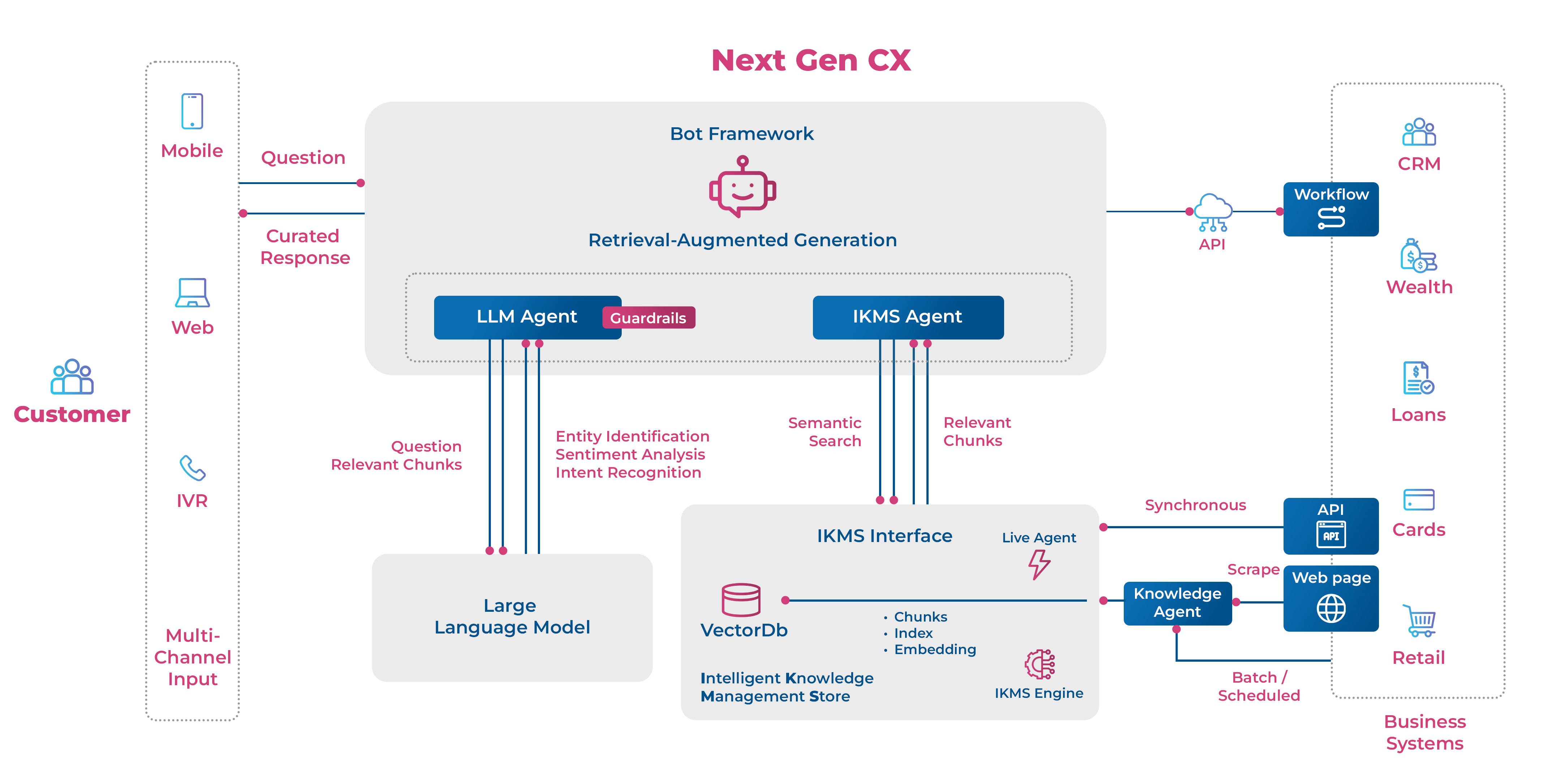

Furthermore, in natural language processing (NLP), banks can utilize virtual assistants and chatbots to automate customer service interactions, resolving issues efficiently and improving the overall customer experience while reducing operational costs. These AI-powered solutions offer 24/7 support and personalized assistance, enhancing customer satisfaction and loyalty.

However, amidst these advancements, banks must prepare for these trends by maintaining trust and transparency. Investing in ethical AI practices is crucial to prevent biases and discriminatory outcomes in AI-powered decision-making processes. Additionally, robust security mechanisms should be implemented to enhance data security and privacy, winning customer trust and ensuring compliance with regulatory requirements.

Moreover, banks should ensure that they can explain AI solutions simply and understandably to customers, promoting transparency and accountability. Offering opt-in features gives customers control over their data and AI-powered services, nurturing trust and confidence in the bank’s practices.

By embracing these principles, banks can navigate the evolving landscape of generative AI while upholding trust and transparency, ultimately driving growth and innovation in the industry.

Follow Kishan Sundar on LinkedIn.

Originally published in CIOL