The Definitive Framework for Banking Technology Leaders

Trusted AI in banking is not a feature, a compliance posture, or a product category. It is an engineering discipline and the competitive divide that will define the next decade of financial services.

The Defining Contradiction in AI-First Banking

Global banks invested more than $85 billion in AI in 2024 – more than any other technology initiative in banking history.

In the same period, 80% of large financial institutions came to use AI in core decision-making functions including credit, fraud, and compliance. Yet model rollbacks, production incidents, regulatory findings, and forced remediation programmes are appearing with increasing frequency across institutions of every tier and every market.

The contradiction is not a paradox. It is the predictable outcome of a decade of investment that prioritised capability over confidence – deployment over validation – and speed over the governance infrastructure that makes speed sustainable.

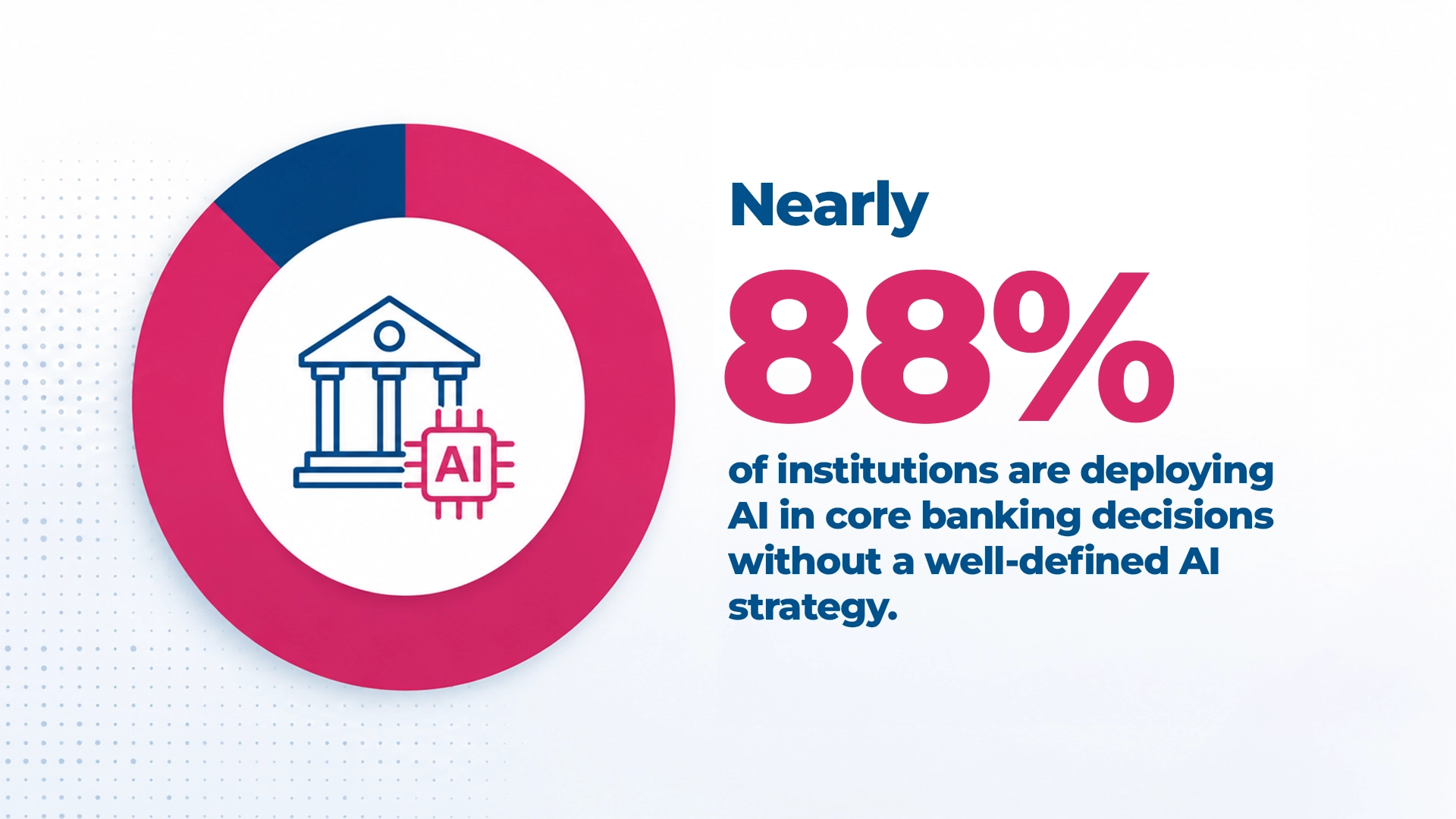

Nearly 88% of institutions are deploying AI in core banking decisions without a well-defined AI strategy or governance framework adequate to the obligations those decisions carry.

That gap between AI capability and execution confidence is the defining market condition of AI-first banking today. And it is widening at the speed of deployment.

What AI-First Banking Actually Means

AI-first banking is not a deployment milestone. It is a structural shift in how banking systems operate at their core, where intelligence is no longer a capability layer added on top of existing processes but the engine that drives them.

In an AI-first bank:

- Credit decisions emerge from inference models that learn continuously from behaviour, macroeconomic signals, and customer history, not from rule engines that fire fixed conditions

- Fraud is intercepted in milliseconds by systems that model behavioural normality and flag statistical deviation in real time

- Compliance is a continuous, automated monitoring layer operating across every transaction and every workflow, not a periodic audit function

- Software itself is increasingly built, tested, and deployed with AI embedded in the delivery pipeline

This creates three forms of complexity that did not exist in the previous architecture: outcome unpredictability (probabilistic inference cannot be traced like a deterministic rule), failure propagation (corrupted inputs cascade silently across all dependent decisions), and governance lag (regulatory frameworks built for deterministic systems are being reinterpreted in real time).

Understanding these three forms of complexity is the starting point for understanding what trusted AI in banking actually requires.

Read the full analysis: Banking Has Entered the AI-First Era – what the shift means architecturally, why the new complexity is different in kind, and what it demands of institutions that are serious about operating at scale

Why Adoption Is Not the Same as Trust

The standard AI adoption metrics – models in production, automation rate, use cases activated – measure deployment activity. They do not measure reliability.

And reliability is precisely where the gap is widening.

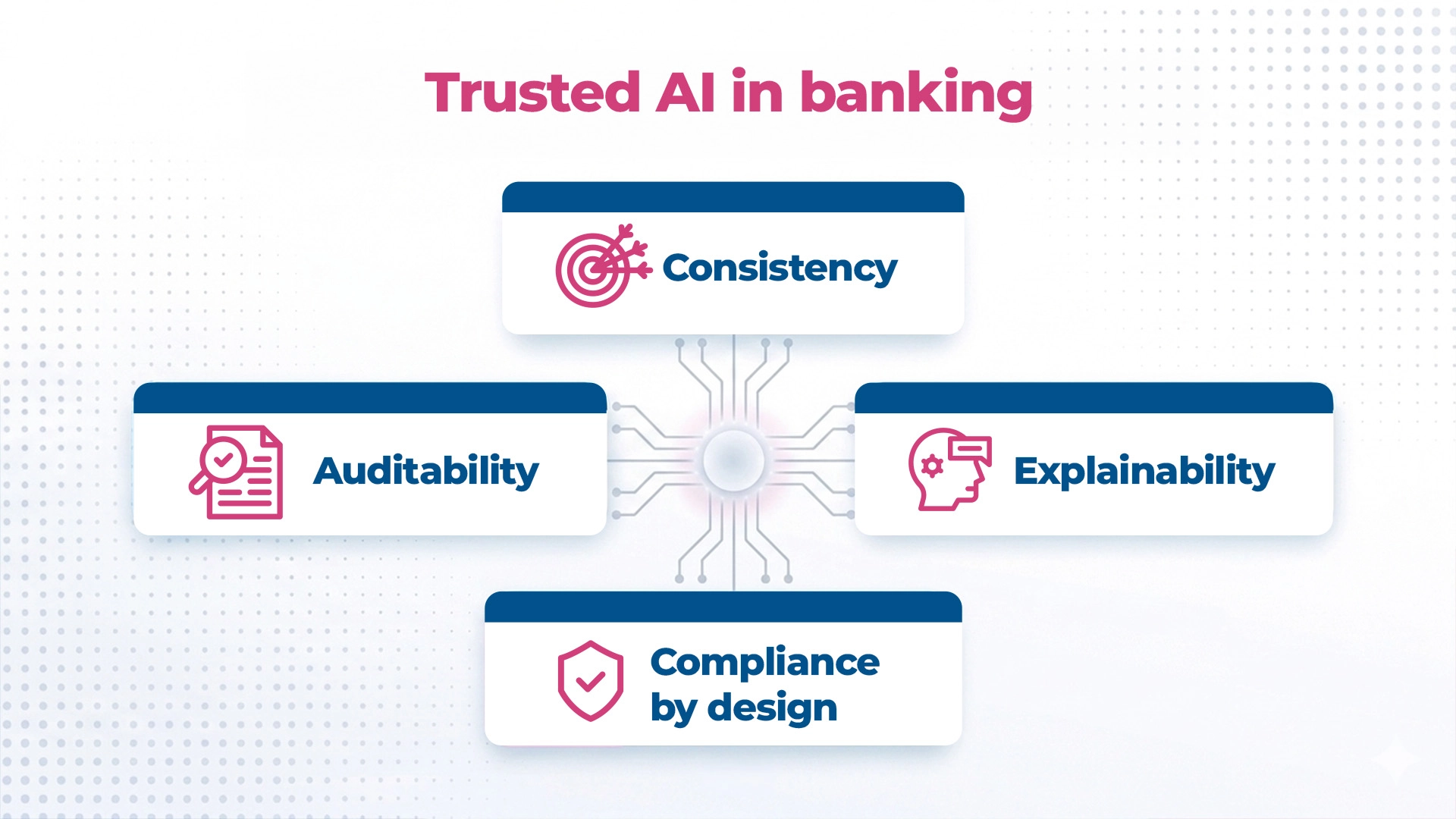

Trusted AI in banking is not a property of a model. It is a property of a system – one that can demonstrate four capabilities simultaneously, under real-world conditions, at production scale:

| Capability | What It Means | Why It Matters |
| --- | --- | --- |
| Consistency | Same outcome reliability across environments, segments, and time | A model valid at deployment is not valid at month 12 without continuous monitoring |
| Explainability | Decision-level account of specific outcomes, not general model description | CFPB: algorithmic complexity does not exempt adverse action explanation obligations |
| Compliance by design | Regulatory obligations embedded in architecture, not retrofitted | SR 11-7, BCBS 239, EU AI Act apply regardless of when governance was built |
| Auditability | Full lineage from data input through model logic to decision output | Reconstruction on demand – not emergency production for a regulatory examination |

Five structural patterns cause the gap between adoption and trust to persist across institutions of every tier:

- Model performance degrades in production – controlled environments do not replicate production variability

- Data remains fragmented across systems – BCBS 239 non-compliance at over 80% of Tier-1 banks more than a decade after the principles were established

- Explainability is absent by design – built for accuracy, not for the accountability obligation

- Governance frameworks evolve more slowly than deployment – SR 11-7 was not written for continuous delivery pipelines

- Success is measured at go-live, not at month 12 – the KPIs that drive delivery do not capture drift, degradation, or accumulated governance debt

Trust is not an outcome of transformation. It is the prerequisite for scaling it.

Read the full analysis: From AI Adoption to Trusted AI – why the five failure patterns repeat across institutions, what the operational definition of trusted AI requires, and what the mandate shift means for banking technology leaders

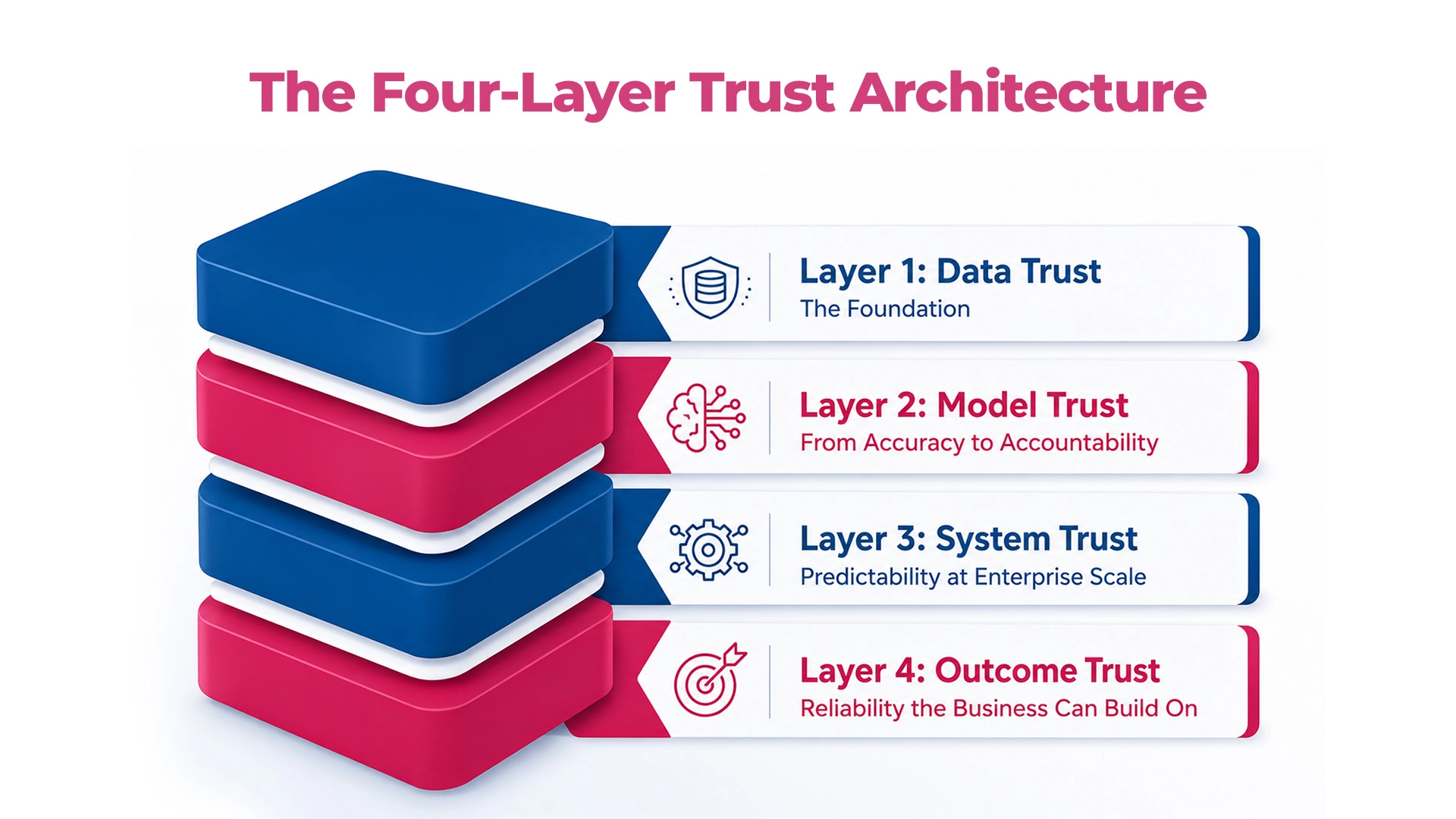

The Four-Layer Trust Architecture

Trust in AI-first banking is an engineering system. It has a specific anatomy – four interconnected layers that must function together, because a failure in any one of them propagates upward through the others.

Layer 1: Data Trust – The Foundation

Properties: Consistency · Lineage · Continuous quality validation · Data contracts

Every AI decision begins with data. A model trained on inconsistently governed data is trained on a version of reality that production will not match. The data contract – a defined, monitored, enforced specification of what every model expects from its inputs – is the mechanism that makes data trust operational rather than aspirational.

Failure mode: Siloed data – model trained on false reality – decisions wrong before inference begins
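To make the data contract idea concrete, the following is a minimal sketch of how a contract might be expressed and enforced at the point where records enter a model. The field names, types, and ranges (`monthly_income`, `credit_utilisation`, and so on) are illustrative assumptions, not a schema taken from any real institution or product.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class FieldRule:
    """One clause of a hypothetical data contract for a model input."""
    name: str
    dtype: type
    required: bool = True
    check: Optional[Callable[[Any], bool]] = None  # optional range/value check


# Illustrative contract for a credit model's inputs (assumed fields and ranges).
CREDIT_INPUT_CONTRACT = [
    FieldRule("customer_id", str),
    FieldRule("monthly_income", float, check=lambda v: v >= 0),
    FieldRule("credit_utilisation", float, check=lambda v: 0.0 <= v <= 1.0),
    FieldRule("employment_status", str, required=False),
]


def validate_record(record: dict, contract: list) -> list:
    """Return a list of contract violations; an empty list means the record conforms."""
    violations = []
    for rule in contract:
        value = record.get(rule.name)
        if value is None:
            if rule.required:
                violations.append(f"{rule.name}: missing required field")
            continue
        if not isinstance(value, rule.dtype):
            violations.append(
                f"{rule.name}: expected {rule.dtype.__name__}, got {type(value).__name__}"
            )
            continue
        if rule.check is not None and not rule.check(value):
            violations.append(f"{rule.name}: value {value!r} outside contracted range")
    return violations
```

In a sketch like this, a non-empty violation list would be the signal to quarantine the record or alert the data owner before inference runs, rather than letting the model score an input it was never trained to expect.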

Layer 2: Model Trust – From Accuracy to Accountability

Properties: Decision-level explainability · Edge case validation · Drift monitoring · Bias detection

Accuracy is necessary. It is not sufficient. A model that performs but cannot explain its decisions is not a trusted model – it is a liability waiting for the right regulatory challenge to surface it.

Failure mode: Opaque models – cannot account for specific decisions – audit failure, adverse action violations, consent orders
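Drift monitoring can take many forms. One widely used option in credit risk is the Population Stability Index (PSI), which compares the distribution of a score or feature at training time with its distribution in production. The sketch below is an assumption about how such a check might look, not a method prescribed by this framework; the bin edges are supplied by the caller, and the 0.1 and 0.25 alert levels are a commonly quoted rule of thumb rather than a regulatory standard.

```python
import math


def population_stability_index(expected, actual, bin_edges, eps=1e-6):
    """PSI between a reference sample and a production sample.

    bin_edges are the interior cut points, so len(bin_edges) + 1 bins are used.
    eps floors empty bins to avoid taking the log of zero.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def proportions(values):
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            # Bin index = number of cut points at or below the value.
            idx = sum(1 for edge in bin_edges if v >= edge)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(psi, warn=0.1, alert=0.25):
    """Map a PSI value to a status using rule-of-thumb thresholds (assumed)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"
```

Run on a schedule against each monitored score and input feature, a check of this kind gives a concrete answer to the question of how an institution knows a production model is drifting.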

Layer 3: System Trust – Predictability at Enterprise Scale

Properties: Behavioural consistency across environments · Integration stability · Always-on validation in CI/CD

Components can each pass their individual validation gates and still combine to produce system-level failures that none of them individually predicted. System trust requires validation at the system level – not just the component level.

Failure mode: Releases without system-level validation – defects propagating across interconnected AI systems – customer-facing failures that are invisible at the component level
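One way to approach always-on validation in CI/CD is a release gate that replays a recorded set of requests through the full candidate stack and compares the resulting decisions with those of the version currently in production. The sketch below assumes both decision lists have already been produced by such a replay; the function names and the 2% tolerance are hypothetical choices for illustration.

```python
def decision_flip_rate(baseline, candidate):
    """Share of replayed cases where the candidate's decision differs from the baseline's."""
    if not baseline or len(baseline) != len(candidate):
        raise ValueError("decision lists must be non-empty and of equal length")
    flips = sum(1 for b, c in zip(baseline, candidate) if b != c)
    return flips / len(baseline)


def release_gate(baseline, candidate, max_flip_rate=0.02):
    """Pass the release only if decision changes stay within the agreed tolerance (assumed 2%)."""
    rate = decision_flip_rate(baseline, candidate)
    return {"flip_rate": rate, "passed": rate <= max_flip_rate}
```

A gate like this does not say which version is right. It makes an unexpected change in system-level behaviour visible before release, so that a human reviews it instead of a customer discovering it.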

Layer 4: Outcome Trust – Reliability the Business Can Build On

Properties: Consistency at scale · Continuous compliance · On-demand auditability · Measurable results

Outcome trust is the proof of concept for the entire architecture. If layers 1–3 function correctly, auditability is a natural output. If any layer has a gap, auditability is the moment that gap becomes visible – to regulators, auditors, and customers simultaneously.

Failure mode: Misalignment across lower layers – inconsistent, unexplainable outcomes – regulatory examination finds the governance the institution believed it had
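On-demand auditability implies that each decision is stored with enough context to reconstruct it later, and that the store itself can be shown to be untampered. A minimal sketch, assuming a simple append-only log in which each record carries the hash of the previous one, might look like this. The record fields and the `"GENESIS"` marker are illustrative assumptions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit record linking inputs, model version, and outcome."""
    decision_id: str
    model_version: str
    inputs: dict
    output: str
    reason_codes: list
    decided_at: str   # ISO 8601 timestamp supplied by the caller
    prev_hash: str    # hash of the preceding record, or "GENESIS" for the first


def record_hash(record):
    """Deterministic SHA-256 over the record's canonical JSON form."""
    payload = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_decision(log, **fields):
    """Append a record chained to the current tail of the log."""
    prev = record_hash(log[-1]) if log else "GENESIS"
    record = DecisionRecord(prev_hash=prev, **fields)
    log.append(record)
    return record


def verify_chain(log):
    """Return True if every record points at the hash of its predecessor.

    Note: detecting tampering with the final record would also require
    storing the latest hash somewhere outside the log.
    """
    prev = "GENESIS"
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record_hash(record)
    return True
```

With records of this shape, explaining a specific decision from a given date becomes a lookup by `decision_id`, and the chain check provides evidence that the answer has not been altered since the decision was made.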

The cascade runs in both directions. When layers fail, failure propagates upward and outward. When layers function correctly, confidence compounds – each validated release builds the institutional evidence base for the next, each enforced data contract reduces downstream risk surface, each explainable decision makes the next regulatory interaction faster and less contested.

“Trust in AI-first banking is the demonstrated, continuous ability of an institution’s AI systems to produce consistent, explainable, compliant, and auditable outcomes – across the full complexity of a live banking enterprise, under regulatory scrutiny, at production scale, and over time.”

The AI Maturity Spectrum – Where Is Your Institution?

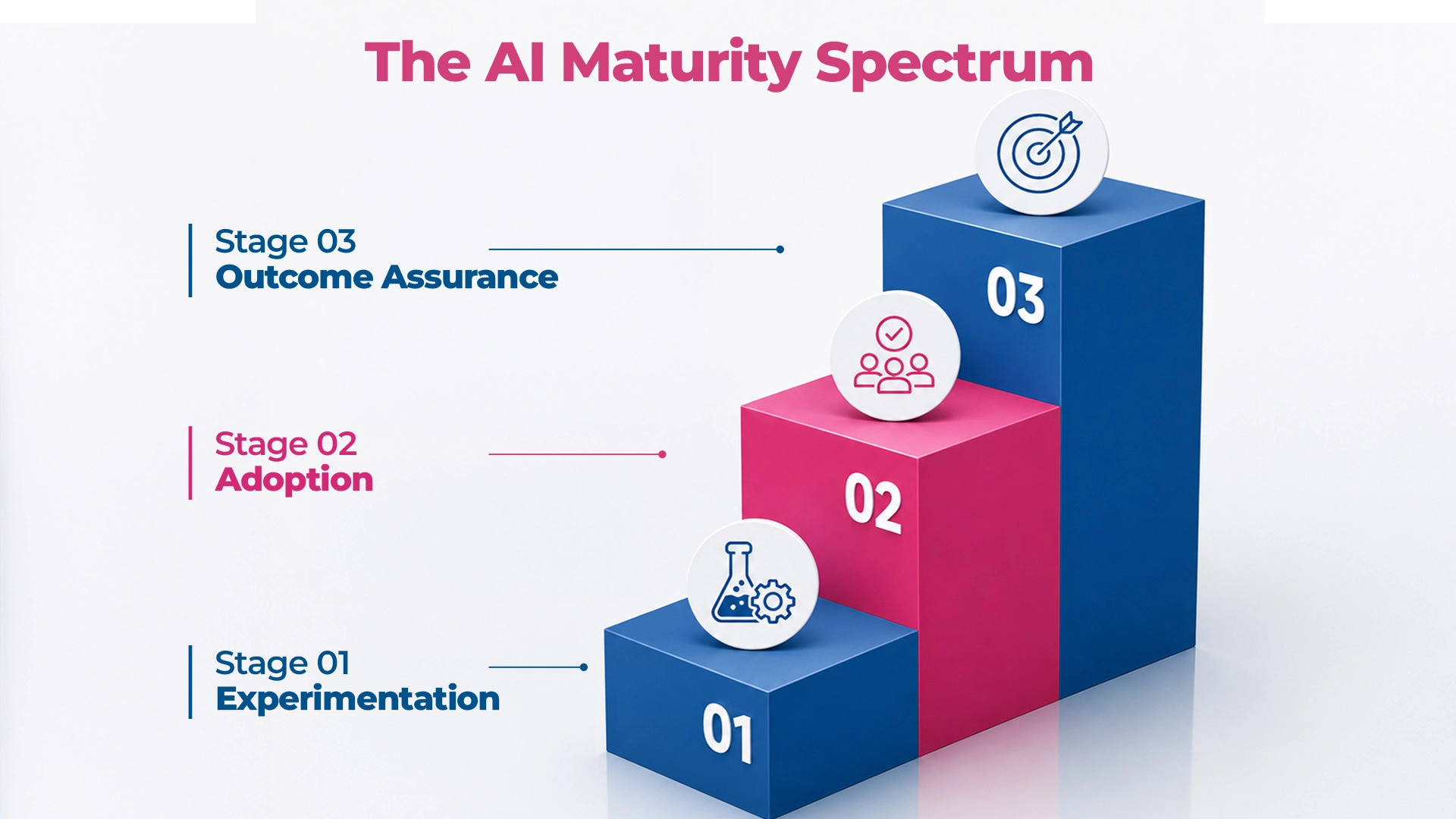

AI maturity in banking is not measured by how much AI an institution has deployed. It is measured by how reliably that AI can be trusted to perform – consistently, under scrutiny, at production scale, over time.

- Stage 1: Experimentation. AI works in controlled environments. Pilots succeed. Assumptions about production performance are being built – and are often wrong. The cost of these assumptions is not paid at this stage. It is deferred and compounds. Signal: AI projects succeed in pilots and fail to industrialise.

- Stage 2: Adoption (most institutions are here). AI is in production. Real decisions. Real customers. Real regulatory obligations. But outcomes are inconsistent, unexplainable, or silently degrading – often in ways that periodic review cycles do not catch until the accumulation is material. Signal: Persistent tail of production incidents. Governance gaps discovered post-launch.

- Stage 3: Outcome Assurance. AI is trusted infrastructure. Drift is detected continuously. Decisions are explainable on demand. Compliance monitoring is embedded in operations. A regulatory examination is an operational review – not a stress event. Signal: Speed and trust compound together with every release cycle.

The Inflection Point

The transition from Stage 2 to Stage 3 is the most consequential and most consistently underestimated challenge in AI-first banking transformation. It is not a technology challenge. It is an engineering and governance discipline challenge – building the Four-Layer Trust Architecture across an institution that is already operating, already under delivery pressure, and already carrying the debt that Stage 2 accumulates.

Five Diagnostic Questions

Before reading further, answer these honestly – based on what you know about your production AI estate right now, not what a board presentation would say:

- How do you know when a production AI model is drifting?

- If a regulator asked you today to explain a specific credit decision from last Tuesday, how long would that take, and what would the answer look like?

- When you release a new model version, what specifically validates it will behave in production the way it behaved in testing?

- What is the governance process for a model that fails a compliance review after it has already been deployed?

- Do your engineering, data, and compliance teams share a single definition of what “production-ready” means for an AI model?

If you cannot answer all five with specificity and confidence, your institution is at Stage 2. The gap between your current state and Stage 3 is the gap this framework is designed to close.

Read the full analysis: From AI Adoption to Outcome Assurance – the three stages examined in depth, what the inflection point actually requires, and how to use the five diagnostic questions to build an honest transition roadmap

The CIO’s Four Imperatives – and the Foundation Beneath All of Them

Every banking CIO navigating the AI-first transition is managing four simultaneous imperatives – each with a genuine business case, each with a specific failure mode when pursued without the right foundation.

- Imperative 1: Customer Experience. AI-augmented onboarding · hyper-personalisation · faster digital product release. The risk: a personalisation engine on inconsistent customer data across channels does not deliver personalised experience. It delivers contradictory experience – and erodes the trust it was supposed to build.

- Imperative 2: Modernised Real-Time Systems. AI-native cloud platforms · API-first architecture · real-time semi-autonomous systems for lending, deposits, and payments. The risk: a core that has been modernised for speed without resolving its data governance deficit is not more reliable. It is faster and more fragile – propagating inconsistencies at the speed of real-time operations.

- Imperative 3: Operational Cost Reduction. AI-led automation across customer service, lending, deposits, and payments · fraud and regulatory penalty management. The risk: automation that processes decisions at scale on unreliable data does not reduce operational cost. It amplifies operational risk at a volume that makes retrospective correction structurally expensive.

- Imperative 4: Regulatory Compliance and Resilience. Predict-prevent-mitigate-recover-restore frameworks · security across all application layers · data governance as the foundation of regulatory defensibility. The risk: compliance AI deployed without governance infrastructure that travels at the speed of the delivery pipeline accumulates the examination findings that the next regulatory cycle will surface.

The pattern across all four: each fails when pursued without continuous validation, governed data, and explainable, auditable decisions. The trust infrastructure is not a fifth imperative. It is the engineering foundation beneath all four – the condition that determines whether any of them delivers what it promises at enterprise scale.

Read the full analysis: The CIO Mandate – From Digital-First to AI-First Banking – each imperative examined in full, with the specific failure mode, the data governance dependency, and the trust infrastructure requirement for each

Generative AI in Banking – A Different Risk Class

Generative AI is not a more capable version of traditional AI. It is a different risk class – and it stress-tests all four layers of the Trust Architecture simultaneously in ways that traditional AI does not.

The core distinction that defines GenAI governance: traditional AI trust is built on validating outputs. GenAI trust requires governing behaviour. You cannot pre-test a system that generates effectively infinite output variations. The trust model must constrain what the system can produce – not sample what it has produced.

Five risk surfaces specific to GenAI in banking:

- Hallucination in customer contexts – fluent, confident, and wrong; FCA Consumer Duty applies regardless of whether the communication was AI-generated

- Explainability deficit – token-by-token probabilistic sampling cannot produce the decision-level explanation that adverse action obligations require

- Data leakage via retrieval layers – RAG architectures surface data by semantic similarity, not by access permission; GDPR Article 22 applies

- Behavioural inconsistency across channels – same model, different system prompts, different versions; systematic fair treatment violations invisible at the individual interaction level

- Shadow AI – compliance teams using consumer LLMs, collections using AI-generated letters; the EU AI Act governs what is in operational use, not what was formally approved
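The retrieval-layer risk above can be illustrated with a short sketch: if a retriever ranks purely by semantic similarity, an entitlement check has to be applied before any retrieved text reaches the model's context. The `Chunk` structure, the role names, and the assumption that each chunk carries its own access list are hypothetical; real retrieval stacks expose this differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage with an access list (hypothetical structure)."""
    doc_id: str
    text: str
    allowed_roles: frozenset
    score: float  # similarity score from an assumed upstream retriever


def authorised_context(candidates, user_roles, top_k=3):
    """Keep only chunks the user is entitled to see, then rank by similarity.

    Filtering happens before ranking and truncation, so a highly similar
    but restricted chunk can never displace a permitted one or leak into
    the prompt.
    """
    roles = frozenset(user_roles)
    permitted = [c for c in candidates if c.allowed_roles & roles]
    permitted.sort(key=lambda c: c.score, reverse=True)
    return permitted[:top_k]
```

The design choice worth noting is the order of operations: permission first, similarity second. Applying the filter after the top results have been selected can return fewer passages than intended and, if it is skipped on any code path, exposes restricted data.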

Five banking decisions GenAI must not make as the final decisioning layer: credit decisions with adverse action obligations · collections communications · AML/SAR escalation decisions · regulatory submissions · regulated financial advice in advice contexts.

Evaluating AI Banking Solutions – The Five Questions That Matter

The AI banking solutions market has a systematic problem: solutions are evaluated on capability – accuracy in curated data, decision throughput, feature specification match. These are the dimensions on which every solution on a serious shortlist performs adequately.

The dimension on which solutions diverge – consistently, consequentially, and invisibly during evaluation – is production reliability: performance under real-world data variability, behaviour when connected systems change, governance under regulatory examination at 12 months.

Four patterns of solution failure in production:

- Built for demonstration, not production – curated data ≠ production variability

- Weak integration with core systems – silent data mismatches propagating through AI decisions

- Drifting into failure – invisible, gradual, accumulating silently until material

- Explainability gaps under examination – global feature importance ≠ decision-level explanation

The evaluation shift: from capability assessment – “what can this solution do?” – to trust qualification – “can this solution be held accountable for what it does, consistently, over time, under regulatory examination?”

Five questions that make this evaluation concrete:

- How does your solution perform when production data differs materially from training data? Name a live deployment where this occurred.

- If a connected core system changes its data schema, how does your solution detect that – and what is its behaviour during the detection gap?

- Walk us through – live – how we produce a decision-level explanation for a specific regulated adverse decision made three months ago

- What specifically does your continuous monitoring cover, at what frequency, who receives it, and what governance is triggered at threshold?

- Which decisions that your solution can make should not, in your assessment, be made by AI without human review in a regulated banking environment – and why?

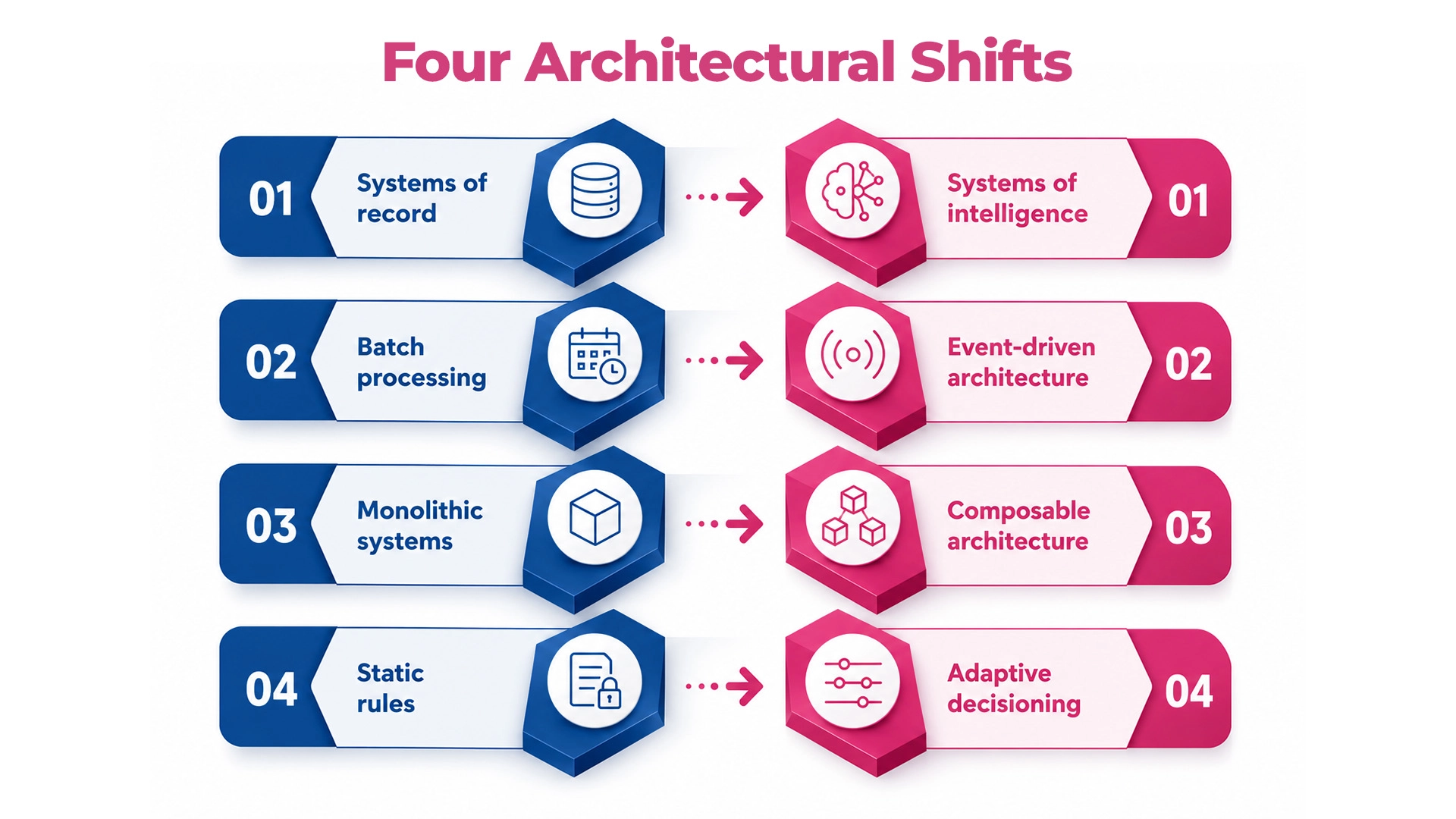

AI-Enabled Core Banking – Re-Architecting for Intelligence

Legacy cores were built for determinism, consistency, and stability. AI-first banking requires something fundamentally different: real-time inference, continuous model integration, event-driven data flows, and probabilistic decisioning – at the speed and scale of live banking operations.

The central insight most modernisation programmes miss: AI does not eliminate the risks that the legacy core was designed to contain. It redistributes and amplifies them – at the speed and scale of real-time operations. The transition period is a period of elevated, not reduced, risk.

Four architectural shifts – and what each demands:

| Shift | From | To | Governance Discipline Required |
| --- | --- | --- | --- |
| 1 | Systems of record | Systems of intelligence | Data consistency enforcement before intelligence integration |
| 2 | Batch processing | Event-driven architecture | Event integrity and ordering guarantees; dual-write reconciliation |
| 3 | Monolithic systems | Composable architecture | API contract governance; service dependency management |
| 4 | Static rules | Adaptive decisioning | Decision-level audit infrastructure built before adaptive decisioning goes live |

Each shift opens a specific migration risk window – a period during which the new architecture is faster but the governance infrastructure for its failure modes is not yet in place. Institutions that close that window before the AI layer consumes the new architecture are the ones that emerge from modernisation more reliable. Those that do not close it emerge faster and more fragile.

“The core is no longer just where transactions are processed; it is where trust is continuously executed. Or where, in its absence, the entire AI-first transformation discovers its ceiling.”

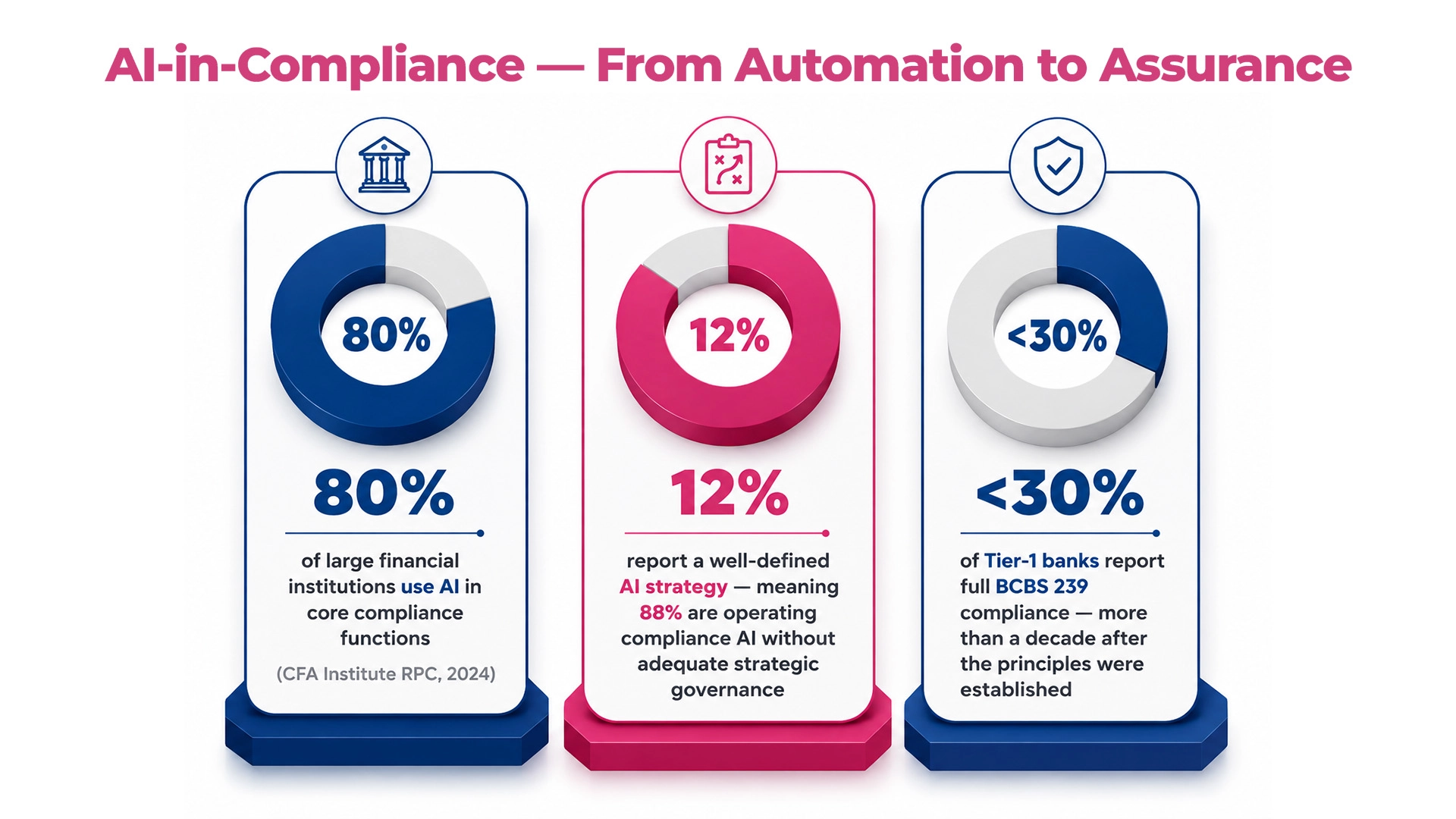

AI-in-Compliance – From Automation to Assurance

Compliance is the proving ground for every trust claim an AI-first bank makes. Every institution that believes it has built trusted AI will eventually be tested here, by regulators, auditors, and the examination standards that are tightening in every major jurisdiction simultaneously.

The scale of the gap:

- 80% of large financial institutions use AI in core compliance functions (CFA Institute RPC, 2024)

- 12% report a well-defined AI strategy – meaning 88% are operating compliance AI without adequate strategic governance

- <30% of Tier-1 banks report full BCBS 239 compliance – more than a decade after the principles were established

The distinction that determines compliance AI outcomes: Automation addresses volume. Assurance addresses accountability. A transaction monitoring system that processes one million transactions per day and cannot explain to a regulator why transaction 847,293 was flagged has solved the volume problem and left the accountability problem entirely unsolved.

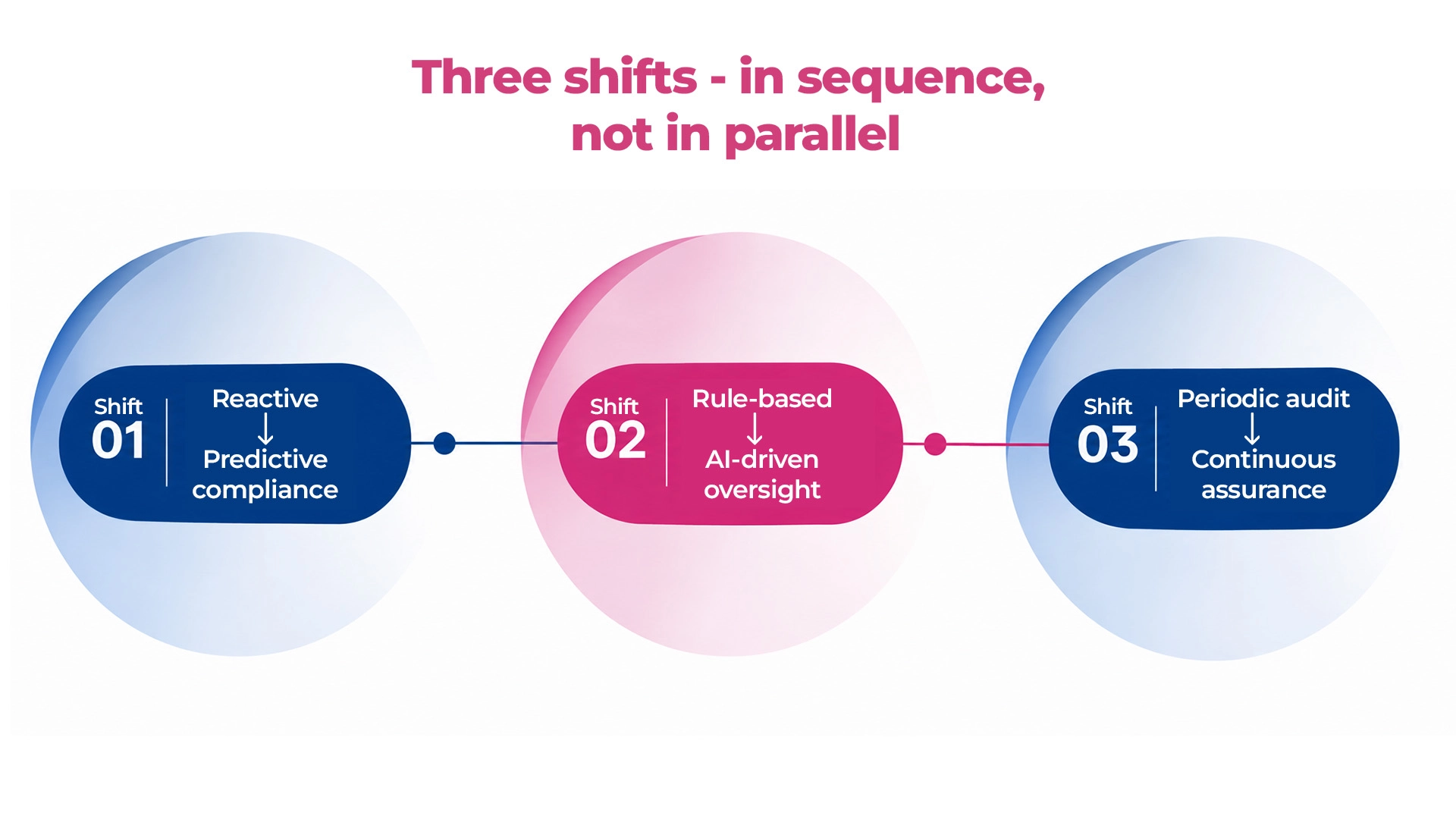

Three shifts – in sequence, not in parallel:

- Shift 1 (first): Reactive to predictive compliance. Monitoring leading indicators before failure occurs. Requires: live compliance performance dashboards tracking model behaviour, data quality, and decision consistency in real time.

- Shift 2 (second): Rule-based to AI-driven oversight. Adaptive intelligence detecting patterns rule-based systems cannot represent. Requires: explainability infrastructure at point of model design – SAR narrative quality, not feature importance scores.

- Shift 3 (third): Periodic audit to continuous assurance. Audit-ready as a permanent operational state. Requires: compliance governance operating at the speed of the AI delivery pipeline – daily, not quarterly.

The sequencing is not optional. Continuous assurance without predictive monitoring has no signal to act on. Each shift is the prerequisite for the next.

The Market Gap – The Verdict

Step back from any single institution’s AI transformation programme and look at the banking industry’s relationship with AI as a whole.

80% of large financial institutions are using AI in core decision-making functions. 12% have the governance infrastructure that doing so responsibly requires.

The arithmetic: an industry where 80% of institutions are using AI in core decisions and 12% have adequate governance is an industry with a trust debt accumulating at the speed of its own deployment. There are four structural reasons this gap exists:

- Investment was allocated to capability, not confidence – the business case metrics do not measure governance quality

- Deployment was measured at go-live, not over time – the KPIs that drive delivery do not capture drift, degradation, or accumulated risk

- The regulatory framework was calibrated for the previous technology era – regulators are now closing that gap faster than most institutions’ governance is improving

- Trust was treated as an outcome rather than a system – placed at the end of the process rather than engineered in at the beginning

The competitive bifurcation is already underway.

Institutions building trust infrastructure now are compounding confidence – each validated release faster and more defensible than the last, each regulatory interaction building the relationship capital that makes future AI approvals easier, speed and trust compounding together.

Institutions deferring trust infrastructure are compounding fragility – each new model adding to the unmonitored risk surface, each deployment cycle adding to the governance debt, the ceiling on their transformation ambition approaching at the speed of their own deployment.

“The next phase of AI-first banking will not be defined by who deployed AI first, whose models are most accurate, or who has the most comprehensive strategy. It will be defined by who built the infrastructure to trust their AI – in production, under examination, at enterprise scale, and over time.”

Read the full analysis: The Market Gap – the four structural causes, the four gap deficits, the cost in remediation, regulatory exposure, and competitive ground, and the verdict on what the next five years will be defined by

The Complete Framework – Section by Section

| Section | Core Question It Answers | Read It |
| --- | --- | --- |
| Banking Has Entered the AI-First Era | What does the structural shift actually mean – and what new complexity does it create? | Blog1 |
| From AI Adoption to Trusted AI | Why do institutions with AI in production still not have AI they can trust? | Blog2 |
| Speed Without Trust Creates Risk | Where and how does AI transformation actually break – with institutional evidence? | Blog3 |
| The CIO Mandate | What does the shift to AI-first demand of the CIO specifically – and what is the load-bearing foundation beneath all four imperatives? | Blog4 |
| What Trust Means in AI-First Banking | What is the precise engineering definition of trust – and what does the Four-Layer Trust Architecture consist of? | Blog5 |
| From Adoption to Outcome Assurance | Where does your institution sit on the AI Maturity Spectrum – and what does crossing the inflection point actually require? | Blog6 |
| Generative AI in Banking | Why is GenAI a different risk class – and what decisions must it never make as the final decisioning layer? | Blog7 |
| AI Banking Solutions | Why does the market systematically produce untrustworthy solutions – and what are the five questions that change the evaluation? | Blog8 |
| AI-Enabled Core Banking | What do the four architectural shifts demand – and what makes the transition period the most dangerous phase of modernisation? | Blog9 |
| AI-in-Compliance | What separates compliance automation from compliance assurance – and what are the three sequenced shifts that close the gap? | Blog10 |
| The Market Gap | What is the market-level verdict on the gap between AI capability and execution confidence – and what does the competitive bifurcation look like? | Blog11 |

Engineering Trust in AI-First Banking: The Definitive Guide

One coherent framework. Full Research Report.

Engineering Trust in AI-First Banking is a comprehensive examination of what trusted AI in banking means architecturally, why most institutions have not yet achieved it, and what it takes to build the infrastructure that makes AI-first transformation reliable at enterprise scale.

It covers the Four-Layer Trust Architecture, the AI Maturity Spectrum, the CIO’s four imperatives, the GenAI governance framework, the AI banking solutions evaluation criteria, core banking modernisation requirements, compliance assurance infrastructure, and a full market-level analysis of the gap between AI capability and execution confidence.

Download the Full Research Report – Engineering Trust in AI-First Banking: The Definitive Guide

FAQ

1. What does “engineering trust” in AI-first banking actually mean?

Engineering trust in AI-first banking means building the infrastructure – validation pipelines, data governance, explainability mechanisms, and integrated oversight – that makes AI systems consistently reliable, auditable, and defensible in production. It is an engineering discipline, not a compliance posture. The distinction matters: trust engineered into the architecture from day one behaves very differently from governance applied after deployment.

2. Why is AI in banking less trusted today despite record investment?

Global banks invested over $85 billion in AI in 2024, yet nearly 88% are deploying AI in core decisions without adequate governance frameworks. The gap exists because investment has been systematically directed at capability and deployment speed, while the validation, data integrity, and explainability infrastructure that makes those capabilities trustworthy at scale has been underfunded. The result is an industry where AI adoption is broad but execution confidence remains low.

3. What is the Four-Layer Trust Architecture and how does it apply to banking?

The Four-Layer Trust Architecture comprises Data Trust, Model Trust, System Trust, and Outcome Trust – four interdependent layers that together ensure AI systems produce consistent, explainable, compliant, and auditable outcomes across a live banking enterprise. Each layer addresses a distinct failure mode: data trust prevents inconsistent inputs, model trust prevents silent degradation, system trust ensures operational reliability, and outcome trust ensures decisions can be explained and defended under regulatory examination.

4. How is AI-first banking different from digital-first banking?

Digital-first banking digitised existing processes – moving channels, transactions, and customer interactions online. AI-first banking is structurally different: intelligence becomes the engine driving core decisions in credit, fraud, compliance, and operations, not a capability layer sitting above existing processes. This changes the accountability standard entirely – from “did the system perform?” to “can the decisions the system made be trusted, explained, and defended?”

5. What are the most common failure modes when banks scale AI without trust infrastructure?

The five most documented failure modes are model drift in production (where accuracy degrades silently over weeks), fragmented data architecture (where inconsistent inputs corrupt AI outputs), explainability absent by design (where decisions cannot be reconstructed for regulatory scrutiny), governance velocity mismatch (where oversight frameworks move too slowly for AI deployment cycles), and deployment KPIs without reliability KPIs (where programmes measure go-lives but not production performance at month 3, 6, or 12).

6. How does Maveric’s approach to engineering trust differ from standard AI governance frameworks?

Standard AI governance frameworks define policies and controls. Maveric’s approach to engineering trust in AI-first banking operationalises those controls as continuous, automated capabilities embedded in the delivery pipeline – continuous quality intelligence through PulseAI, data integrity and compliance readiness through PrismAI. The distinction is the difference between governance as documentation and governance as a live, measurable operational state.