Why This Article Matters

Most conversations about AI in banking start with the wrong question. They ask: ‘Are we using AI?’ The answer is almost universally yes. The question that defines competitive outcomes is different: ‘Can we trust what our AI is doing – consistently, at scale, under regulatory scrutiny?’ This article establishes the structural shift that makes that question the most important one in banking technology leadership today. If you are a CIO, CTO, or senior technology leader navigating AI transformation, this is the frame from which everything else follows.

AI-First Banking Is Not a Deployment Milestone

Banking is not approaching an AI inflection point. It has already passed one.

Across the global financial system, AI has moved well beyond experimentation. It is embedded in credit decisioning, real-time fraud detection, customer onboarding, regulatory compliance, and software delivery pipelines. Global bank investment in AI exceeded $85 billion in 2024. By 2030, that figure is projected to surpass $300 billion.

And yet, in the same period, several of the world’s largest financial institutions disclosed AI-related remediation programmes running into hundreds of millions of dollars. Not because the technology failed in the lab. Because it failed – quietly, consequentially – in production.

That gap between investment and outcome is not a technology problem. It is the defining execution challenge of AI transformation in banking – and it is widening.

What AI-First Actually Means

The term is used loosely. It deserves precision.

AI-first banking does not mean deploying more AI tools or accelerating automation. It describes a structural shift in how banking systems operate at their core – where intelligence is no longer a capability layer added on top of existing processes, but the engine that drives them.

In an AI-first bank:

- Credit decisions are not made by rule engines – they are made by inference models that learn continuously from transaction behaviour, macroeconomic signals, and customer history

- Fraud is not detected after the fact – it is intercepted in milliseconds by systems that model normal behaviour and flag statistical deviation in real time

- Compliance is not a periodic audit function – it is a continuous, automated monitoring layer operating across every transaction and every workflow

- Software itself is increasingly built, tested, and deployed with AI embedded in the delivery pipeline – not just the product
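Production fraud systems use far richer models than this. Still, the idea of modelling normal behaviour and flagging statistical deviation can be sketched as a per-customer z-score check. The function name, the history window, and the three-standard-deviation threshold are illustrative assumptions, not a recommended configuration.

```python
import math
import statistics


def flag_deviation(history, amount, threshold=3.0):
    """Flag a transaction amount that deviates from a customer's recent history.

    history   -- recent transaction amounts for the same customer (hypothetical window)
    amount    -- the new transaction amount being scored
    threshold -- hypothetical cut-off, in standard deviations from the mean
    Returns (is_anomalous, z_score).
    """
    if len(history) < 2:
        # Too little history to model "normal"; leave the decision to other controls.
        return False, 0.0
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Perfectly flat history: any different amount counts as a deviation.
        return (amount != mean), (0.0 if amount == mean else math.inf)
    z = (amount - mean) / stdev
    return abs(z) > threshold, z


history = [42.0, 38.5, 45.0, 40.0, 39.5]
print(flag_deviation(history, 41.0))   # (False, 0.0)
print(flag_deviation(history, 900.0))  # flagged: far outside the customer's normal range
```

A single z-score ignores merchant, device, time-of-day, and velocity signals. The sketch only shows the shape of the idea: define a baseline, measure distance from it, and act on a threshold.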

This is not incremental change. It is a re-architecture of how banking operates – and it introduces a category of complexity that traditional systems were never designed to manage.

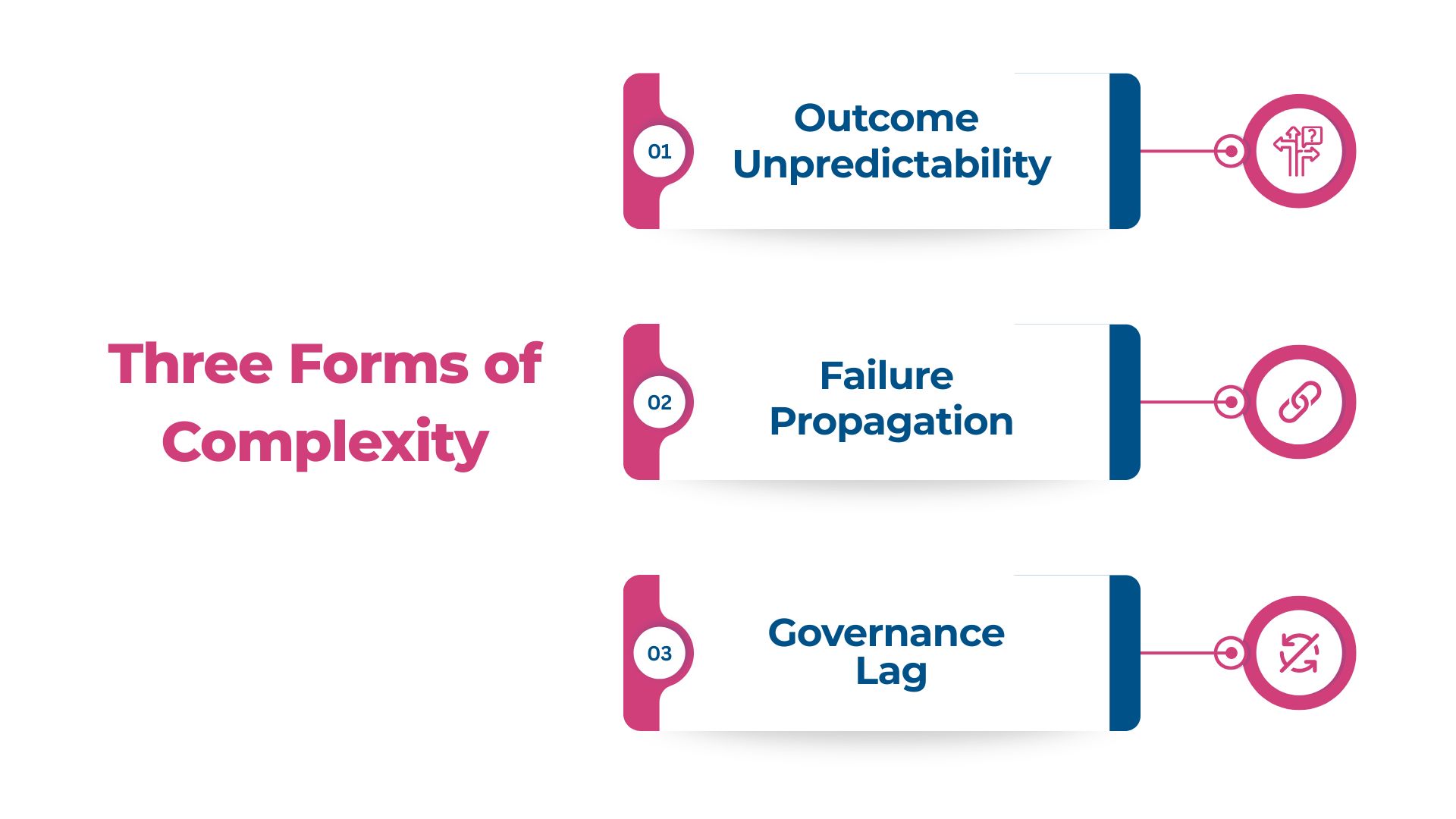

Three Forms of Complexity That Did Not Exist Before

Traditional banking systems were built on deterministic logic: a rule fires, an outcome follows, and the system is auditable because every decision can be traced to a defined condition. AI models operate on probabilistic inference: they produce learned estimates rather than executing explicit conditions, so the same input can produce different outcomes depending on context, model state, training data, and environmental variables.
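To make the contrast concrete, here is a minimal sketch comparing a deterministic rule with a logistic scoring model. The feature names, weights, and cut-offs are invented for illustration. The rule returns the same outcome for the same input every time, while the model's outcome for the same applicant can change once it is retrained and its weights move.

```python
import math


def rule_decision(debt_to_income):
    # Deterministic rule: the outcome traces to one explicit, inspectable condition.
    return "approve" if debt_to_income < 0.40 else "decline"


def model_decision(features, weights, bias, cutoff=0.5):
    # Logistic model: the outcome depends on learned weights, which change on retraining.
    logit = bias + sum(weights[name] * value for name, value in features.items())
    p_default = 1.0 / (1.0 + math.exp(-logit))
    return ("decline" if p_default >= cutoff else "approve"), p_default


applicant = {"debt_to_income": 0.35, "missed_payments": 1}

# Hypothetical weights for two versions of the "same" model, before and after retraining.
weights_v1 = {"debt_to_income": 2.0, "missed_payments": 0.8}
weights_v2 = {"debt_to_income": 3.0, "missed_payments": 1.2}

print(rule_decision(applicant["debt_to_income"]))        # approve, on every run
print(model_decision(applicant, weights_v1, bias=-2.0))  # approve, p_default ~ 0.38
print(model_decision(applicant, weights_v2, bias=-1.5))  # decline, p_default ~ 0.68
```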

This creates three forms of complexity that define the AI-first era:

Outcome Unpredictability

When a credit model makes a decision, the logic is not a rule that can be inspected. It is a pattern embedded across millions of weighted parameters. Explaining that decision – to a customer, a regulator, or an auditor – requires a different kind of infrastructure than traditional systems provide.
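For a linear (logistic) scorecard, an individual decision can be decomposed exactly: each feature's contribution to the logit, relative to a reference applicant, is its weight times its distance from that reference. Models with millions of parameters need approximate attribution methods (SHAP is a common choice) to produce something comparable. The sketch below assumes a linear model with hypothetical features, weights, and baseline, and returns the top adverse factors: the kind of per-decision reasons an adverse action notice has to state.

```python
def reason_codes(features, weights, baseline, top_n=2):
    """Return the features that pushed this applicant's logit most toward decline.

    For a linear logit, weights[name] * (features[name] - baseline[name]) is that
    feature's exact contribution relative to a reference applicant.
    """
    contributions = {
        name: weights[name] * (features[name] - baseline[name]) for name in weights
    }
    # Positive contributions raise predicted default risk, so they are the adverse reasons.
    adverse = [(name, c) for name, c in contributions.items() if c > 0]
    adverse.sort(key=lambda item: item[1], reverse=True)
    return adverse[:top_n]


# Hypothetical model weights and reference ("baseline") applicant.
weights = {"debt_to_income": 3.0, "missed_payments": 1.2, "years_at_address": -0.2}
baseline = {"debt_to_income": 0.25, "missed_payments": 0, "years_at_address": 5}
applicant = {"debt_to_income": 0.35, "missed_payments": 2, "years_at_address": 8}

for name, contribution in reason_codes(applicant, weights, baseline):
    print(f"{name}: +{contribution:.2f} to the logit")
```

Here the applicant's longer time at address works in their favour (a negative contribution), so it is excluded from the adverse reasons even though it moved the score.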

Failure Propagation

In a rule-based system, a defect is usually localised. In an AI-driven system, a corrupted data input or a drifting model does not stay contained. It propagates across every decision that depends on it – often silently, over weeks, before it surfaces as a customer complaint, a regulatory inquiry, or a financial loss.
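One common way to catch drift before it surfaces as a complaint is to compare the distribution of model inputs or scores in production with the distribution the model was validated on. The Population Stability Index (PSI) is a widely used measure for this in credit risk. The sketch below assumes both distributions have already been bucketed into the same bins; the banding thresholds are a conventional rule of thumb, not a regulatory standard.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between a baseline and a production window.

    Both arguments are counts per bin, in the same bin order.
    eps floors empty bins so the logarithm stays defined.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("bin counts must align")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        value += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return value


def stability_band(value):
    # Widely used rule of thumb for PSI, not a supervisory requirement.
    if value < 0.10:
        return "stable"
    if value < 0.25:
        return "moderate shift: investigate"
    return "significant shift: escalate"


baseline_counts = [100, 200, 400, 200, 100]    # score bins at validation time
production_counts = [50, 100, 300, 300, 250]   # same bins, recent production window
drift = psi(baseline_counts, production_counts)
print(round(drift, 3), stability_band(drift))
```

Run on a schedule against every input feature and the output score, a check like this turns silent propagation into a visible alert.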

Governance Lag

Most regulatory frameworks were built for deterministic systems. Supervisory model risk management guidance (such as the US Federal Reserve's SR 11-7) and the CFPB's adverse action requirements are being reinterpreted for a world where models learn, drift, and behave differently across environments, while newer AI-specific regimes such as the EU AI Act add obligations of their own. Most banks' internal governance frameworks are lagging even further behind.

The Tension That Defines This Era

Every CIO and CTO leading an AI-first transformation programme is navigating a version of the same fundamental tension.

On one side: the imperative to move fast. Competitive pressure from digital-native challengers, shareholder expectations around cost reduction, customer demands for real-time experiences, and board-level mandates to deploy AI across the enterprise – all push toward acceleration.

On the other side: the obligation to maintain control. Regulatory scrutiny is intensifying. The consequences of an AI system failure in banking – a biased lending decision, a fraud model generating false positives at scale, a compliance workflow that cannot withstand audit – are not just technical problems. They are revenue events, regulatory events, and brand events simultaneously.

The question that defines AI-first banking leadership is not ‘How fast can we deploy AI?’ It is: ‘How do we move fast enough to compete – while building systems that are reliable, explainable, and defensible under real-world conditions?’

The Shift That Actually Matters

The institutions that have deployed AI successfully at enterprise scale – in production, across millions of decisions, under regulatory scrutiny – have made a specific shift.

They have moved from asking ‘What can our AI do?’ to asking ‘Can our AI be trusted to do it – consistently, repeatedly, and under scrutiny?’

This is the shift from AI adoption to trusted AI in banking. It is not a philosophical distinction. It has a specific engineering meaning:

- Systems must produce consistent outcomes across environments, data conditions, and edge cases – not just in controlled testing

- Decisions must be explainable at the individual decision level – not just ‘we can describe the model,’ but ‘here is exactly why this specific outcome occurred’

- Failures must be containable – with continuous validation and governance embedded in the delivery pipeline, so that a defect is caught before it propagates

- The entire system must be auditable – with lineage, traceability, and accountability at every layer
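Auditability at the individual decision level largely comes down to what gets recorded at the moment of decision. A minimal sketch of such a record follows; the field names, the training-data snapshot identifier, and the helper functions are hypothetical. Hashing a canonical serialisation of the inputs lets an auditor later confirm that the inputs on file are exactly the ones the model scored.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_name: str
    model_version: str
    training_data_snapshot: str  # hypothetical lineage pointer to the training data
    input_hash: str
    outcome: str
    score: float
    reason_codes: tuple
    decided_at: str


def hash_inputs(features):
    # Canonical JSON (sorted keys, fixed separators) so identical inputs hash identically.
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_decision(decision_id, model_name, model_version, snapshot,
                    features, outcome, score, reason_codes):
    # Immutable record capturing what was decided, by which model, on which inputs, and why.
    return DecisionRecord(
        decision_id=decision_id,
        model_name=model_name,
        model_version=model_version,
        training_data_snapshot=snapshot,
        input_hash=hash_inputs(features),
        outcome=outcome,
        score=score,
        reason_codes=tuple(reason_codes),
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json(record):
    # Serialise for append-only storage; tuples become JSON arrays.
    return json.dumps(asdict(record), sort_keys=True)
```

In practice the record would be written to append-only storage alongside the model registry entry it references; that storage layer is outside the scope of this sketch.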

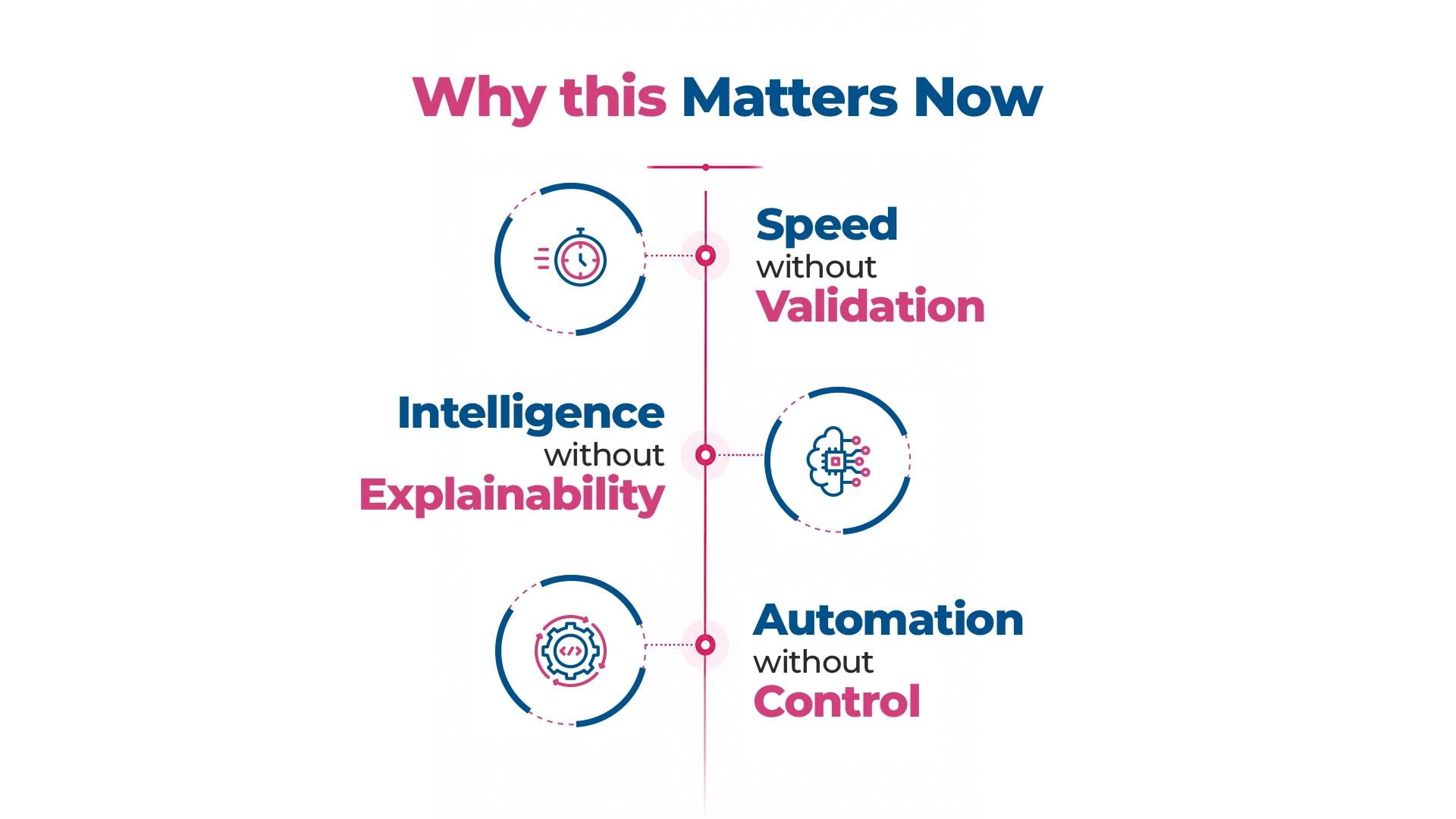

Why This Matters Now

A fraud detection model that drifts is not a technical incident. It is a customer trust incident, a regulatory incident, and a financial incident in the same moment. A credit model that cannot explain its adverse decisions is not just an engineering problem – it is a legal exposure, a fair lending risk, and a board-level governance failure simultaneously.

The institutions that understand this are not slowing down. They are building trust into the system as they accelerate – so that speed and control are not in opposition, but compounding.

In AI-first banking, three realities define the competitive landscape:

- Speed without validation does not create advantage; it creates accumulated risk that compounds with every release cycle

- Intelligence without explainability does not create trust; it creates liability that grows with every model deployed without defensible decision logic

- Automation without control does not create efficiency; it creates fragility that fractures under real-world complexity
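In engineering terms, speed without validation is a release pipeline with no gate between a retrained model and production. A minimal sketch of such a gate follows. The metrics, their names, and every threshold are hypothetical placeholders; a real gate would take its limits from the institution's model risk policy and would typically cover more checks than these four.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationReport:
    auc: float                   # discrimination on a held-out sample
    score_psi: float             # drift of candidate scores against the baseline population
    approval_rate_ratio: float   # lowest group approval rate / highest group approval rate
    reason_code_coverage: float  # share of declines that carry explainable reason codes


# Hypothetical thresholds; real values would come from the bank's model risk policy.
MIN_AUC = 0.70
MAX_PSI = 0.25
MIN_APPROVAL_RATE_RATIO = 0.80
MIN_REASON_CODE_COVERAGE = 1.0


def release_gate(report):
    """Return (promote, failures) for a candidate model; any failure blocks promotion."""
    failures = []
    if report.auc < MIN_AUC:
        failures.append(f"auc {report.auc:.2f} below {MIN_AUC:.2f}")
    if report.score_psi > MAX_PSI:
        failures.append(f"score_psi {report.score_psi:.2f} above {MAX_PSI:.2f}")
    if report.approval_rate_ratio < MIN_APPROVAL_RATE_RATIO:
        failures.append(
            f"approval_rate_ratio {report.approval_rate_ratio:.2f} "
            f"below {MIN_APPROVAL_RATE_RATIO:.2f}"
        )
    if report.reason_code_coverage < MIN_REASON_CODE_COVERAGE:
        failures.append(
            f"reason_code_coverage {report.reason_code_coverage:.0%} "
            f"below {MIN_REASON_CODE_COVERAGE:.0%}"
        )
    return not failures, failures


candidate = ValidationReport(auc=0.74, score_psi=0.31, approval_rate_ratio=0.86,
                             reason_code_coverage=1.0)
promote, failures = release_gate(candidate)
print(promote, failures)  # False ['score_psi 0.31 above 0.25']
```

Because the gate returns the specific failing checks rather than a bare pass/fail, the same output can be attached to the release record as evidence of what was validated.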

The success of AI-first banking transformation will not be measured by how many AI systems are deployed. It will be measured by how many of those systems can be trusted – in production, under pressure, at scale.

This article is part of the Engineering Trust in AI-First Banking series, examining the framework that separates institutions that scale AI from those that stall.



FAQ

1. What does the AI-first era mean specifically for banks and financial institutions?

The AI-first era means AI is no longer a capability layer added to existing banking processes – it is the engine driving core decisions in credit, fraud, compliance, and customer engagement. Banks have crossed the inflection point: the question is no longer whether to adopt AI, but whether the AI already in production can be trusted to perform consistently, explainably, and at scale.

2. Why is banking particularly complex terrain for AI deployment compared to other industries?

Banking combines real-time decisioning requirements, strict regulatory obligations across multiple jurisdictions, legacy technology infrastructure built for deterministic rule-based systems, and high-stakes outcomes – credit decisions, fraud determinations, compliance assessments – where errors carry direct customer and regulatory consequences. These four factors compound in ways that make AI deployment in banking structurally more demanding than in most other sectors.

3. What new risks does AI-first banking introduce that digital-first banking did not?

AI-first banking introduces probabilistic risk – where systems exercise judgment rather than execute rules – and that requires fundamentally different governance. Specific new risk surfaces include model drift (where production accuracy degrades over time), data dependency risk (where inconsistent inputs corrupt outputs at scale), and explainability obligations (where regulators require institutions to reconstruct and justify AI-driven decisions). Rule-based digital systems had data-quality problems too, but none of these risks arose there in the same form: rules do not drift, and their logic can be read directly.

4. How has the CIO’s accountability changed in the transition to AI-first banking?

In a digital-first bank, the CIO’s primary accountability was delivery: build systems that function and maintain operational stability. In an AI-first bank, the CIO is accountable for whether the decisions those systems make are reliable, explainable, and defensible – a governance accountability for machine-made decisions at enterprise scale. That is a fundamentally different standard, and it sits with the CIO regardless of whether the current governance framework is designed to carry it.

5. Is AI-first banking a realistic goal for regional and mid-tier banks, or only for global institutions?

AI-first banking is achievable at any scale, but the path is different. Mid-tier and regional banks typically have less legacy complexity but also less internal AI capability. What makes the transition feasible is a modular approach – building trust infrastructure incrementally around specific high-value use cases rather than attempting enterprise-wide transformation. The institutions that succeed at this level tend to partner with technology firms with deep banking domain expertise rather than attempting to build AI capabilities from scratch.