Why This Article Matters

This is the synthesis article – the one that steps back from individual institutional failure patterns and examines what the banking industry’s relationship with AI looks like as a whole, from the outside. The 80%/12% gap is not just a statistic. It is a market-level indictment that every CIO and board should sit with. This article names the four structural reasons the market produced this outcome, quantifies what the gap costs across remediation, regulatory exposure, and competitive position, and delivers the competitive verdict that will define the next five years of AI-first banking. If you read only one article in this series before deciding whether to download the full research report, make it this one.

The Defining Contradiction

Step back from any single institution’s AI transformation programme and look at the banking industry’s relationship with AI as a whole.

Global banks invested more than $85 billion in AI in 2024 – more than any other technology initiative in banking history. Every major institution has an AI strategy. Every major institution has AI in production.

And yet, by the measures that matter most in a regulated industry – consistency of outcomes, defensibility of decisions, reliability under examination, confidence in scale – AI in banking is, across the industry as a whole, less trusted today than the rule-based systems it is replacing.

This is not a paradox. It is the predictable outcome of a decade of investment decisions that prioritised capability over confidence, deployment over validation, and speed over the governance infrastructure that makes speed sustainable.

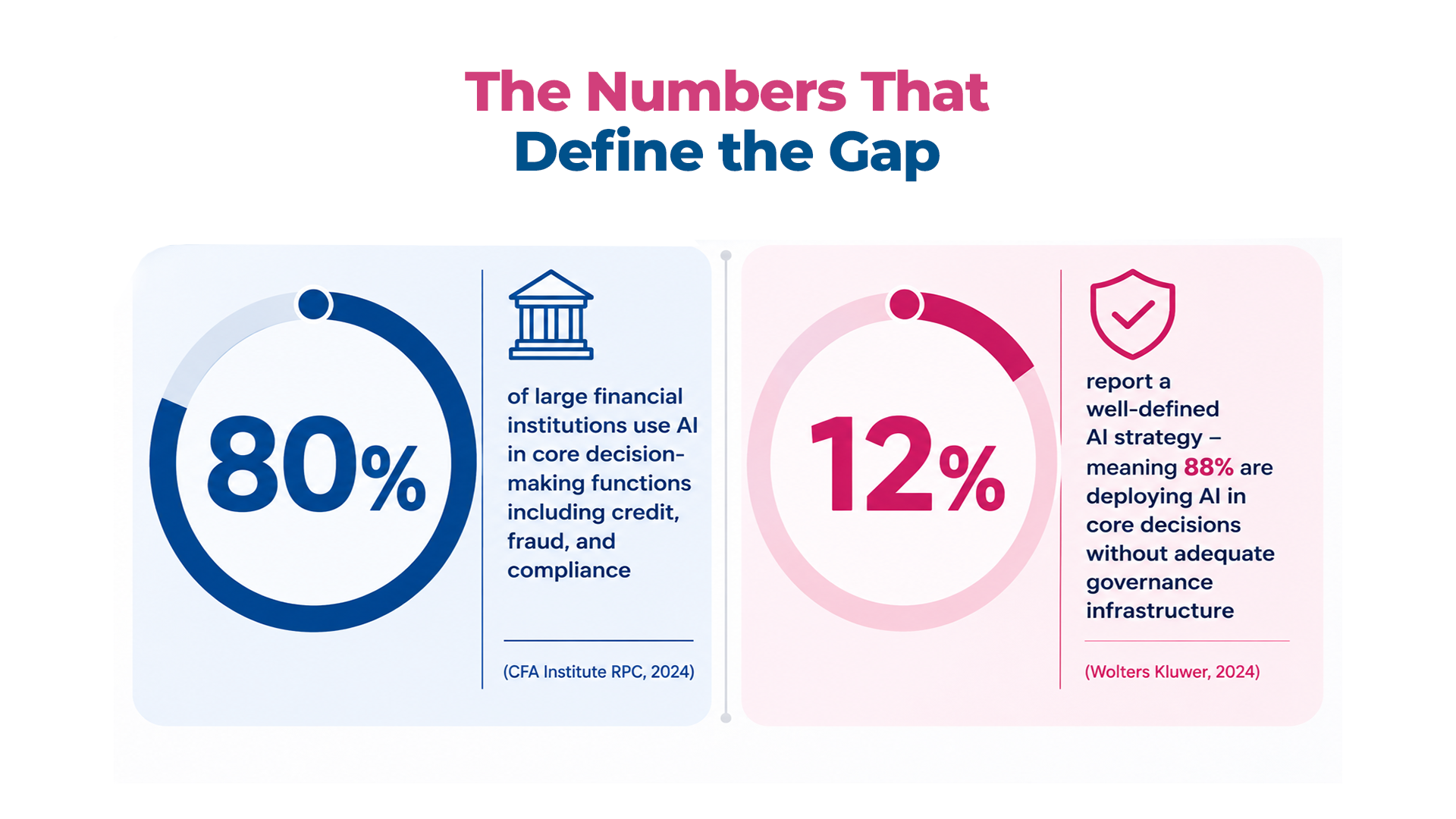

The Numbers That Define the Gap

80% of large financial institutions use AI in core decision-making functions including credit, fraud, and compliance

12% report a well-defined AI strategy – so even if every one of them is among the 80%, at least 68% of large institutions are deploying AI in core decisions without the governance infrastructure such a strategy provides

The arithmetic: an industry where 80% of institutions are using AI in core decisions and 12% have the governance infrastructure that doing so responsibly requires is an industry with a trust debt accumulating at the speed of its own deployment.
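
The two headline figures can be combined into a rough lower bound. The sketch below assumes both percentages describe the same population of large institutions, which the source does not state, and treats "well-defined AI strategy" as a proxy for governance infrastructure, as the article does. The function and variable names are illustrative only.

```python
def min_share_without_strategy(share_deploying: float, share_with_strategy: float) -> float:
    """Lower bound on the share of ALL institutions deploying AI without a strategy.

    The bound is reached when every institution that has a strategy is also
    one of the institutions deploying AI in core decisions.
    """
    return max(0.0, share_deploying - share_with_strategy)


share_deploying = 0.80      # use AI in core decision-making (from the article)
share_with_strategy = 0.12  # report a well-defined AI strategy (from the article)

floor_of_all = min_share_without_strategy(share_deploying, share_with_strategy)
floor_of_deployers = floor_of_all / share_deploying

print(f"At least {floor_of_all:.0%} of all large institutions")
print(f"At least {floor_of_deployers:.0%} of the institutions deploying AI")
```

If the institutions with a strategy are not all among the deployers, the true share is higher than this floor.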

Research published in late 2025 found that most companies deploying AI have experienced risk-related financial losses from compliance failures, bias in automated decisions, and flawed model outputs. In banking, where compliance failures carry direct regulatory penalties, bias in credit decisions triggers enforcement action, and flawed model outputs in fraud systems generate measurable financial loss, these are institutional risk events – not operational costs.

Four Structural Reasons the Market Produced This Outcome

- Investment was allocated to capability, not confidence: the business case for AI is built around capability metrics such as fraud detection accuracy, credit decision throughput, and automation rate. None of these measure governance quality, production reliability, or regulatory defensibility. Investment followed the measurement framework.

- Deployment was measured at go-live, not over time: AI transformation programmes are managed against deployment milestones. What happens to models after they go live – whether they drift, whether governance frameworks travel at delivery speed – is not captured in the KPIs that determine whether a programme is succeeding.

- The regulatory framework was calibrated for the previous technology era: SR 11-7, BCBS 239, and evolving CFPB guidance were written for a world where models were static artefacts deployed infrequently. They were not written for continuous delivery pipelines and systems that adapt to new data continuously. Regulators are now closing that gap faster than most institutions’ governance maturity is improving.

- Trust was consistently treated as an outcome rather than a system: it was built at the end of the process rather than the beginning. This deferred trust infrastructure investment until after deployment, so by the time governance gaps became visible, they were already consequential.

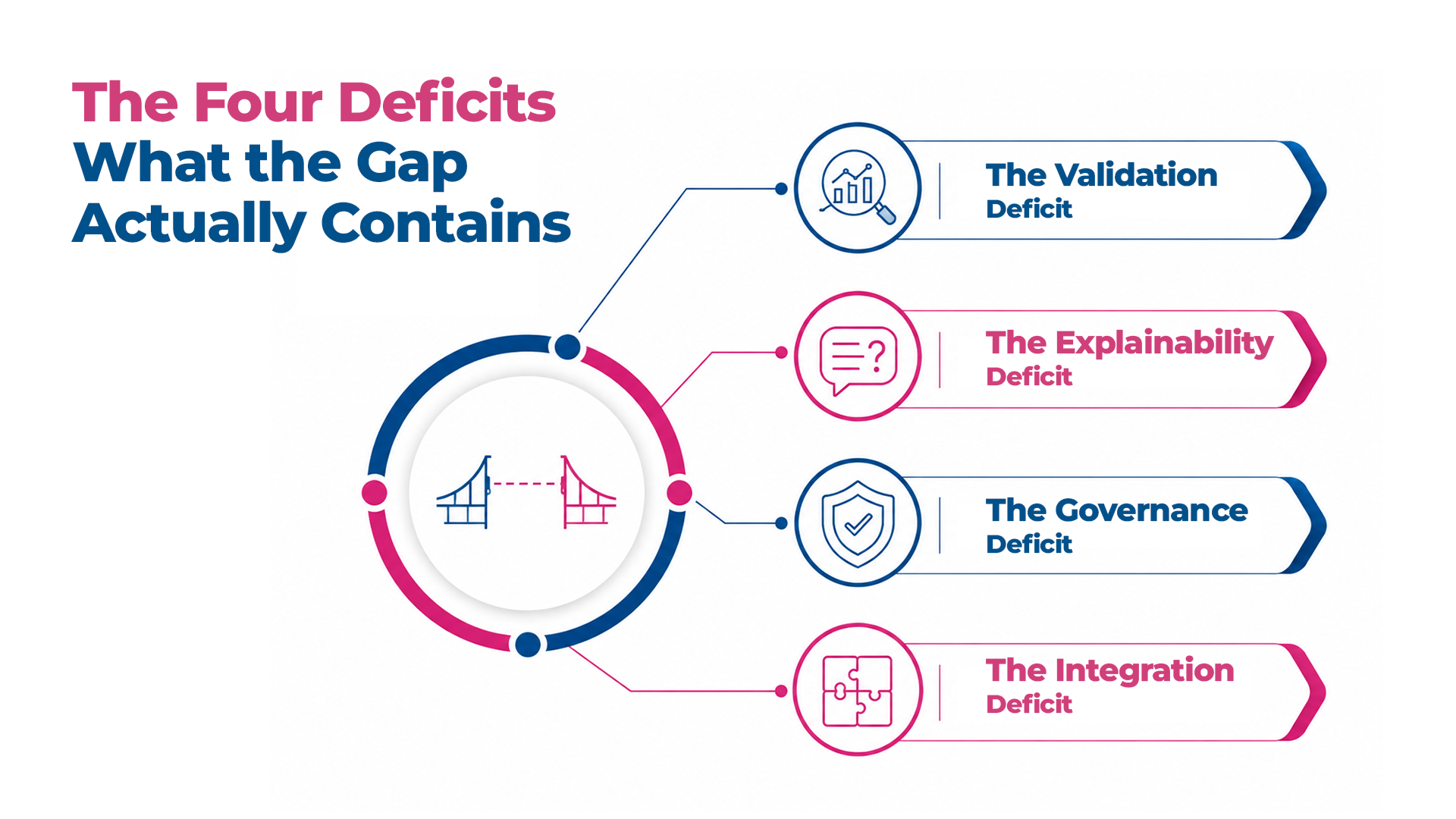

The Four Deficits – What the Gap Actually Contains

The gap between AI capability and execution confidence is not a single failure. It is four specific deficits that reinforce each other and cannot be resolved independently.

- The Validation Deficit: AI that was valid at deployment is not necessarily AI that is valid today. Without continuous monitoring, the gap between those two states is invisible until it surfaces as an incident. By then it has accumulated across thousands of decisions.

- The Explainability Deficit: far fewer AI decisions in regulated contexts can be explained at the individual decision level than the law requires. Explainability was not built because it was not measured.

- The Governance Deficit: SR 11-7-style point-in-time validation cannot govern a continuous delivery pipeline. The governance framework is calibrated for the previous technology era and is accumulating a shadow zone of unreviewed AI operation with every deployment cycle.

- The Integration Deficit: individually capable components combine to produce system-level failures that no single team fully understands, because no team owns the aggregate behaviour of the AI estate as a whole.
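
To make the Validation Deficit concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), comparing model scores captured at deployment with scores seen in production. This is an illustration, not the series' own method: the function names, the equal-width binning, and the 0.10 / 0.25 thresholds (a widely used rule of thumb) are all assumptions.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between deployment-time scores and current production scores."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; width 1.0 if the baseline is constant.
    width = (hi - lo) / n_bins or 1.0

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range fall into the first or last bin.
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # Floor each share at eps so an empty bin does not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    b, c = bin_shares(baseline), bin_shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_status(psi: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value to a status using rule-of-thumb thresholds (assumed)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"


# Hypothetical data: scores at validation time vs. scores after a population shift.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [min(0.99, s + 0.30) for s in baseline_scores]

print(drift_status(population_stability_index(baseline_scores, baseline_scores)))
print(drift_status(population_stability_index(baseline_scores, shifted_scores)))
```

Run on a schedule against each production model, a check of this kind turns "valid at deployment" into a claim that is re-tested continuously rather than assumed.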

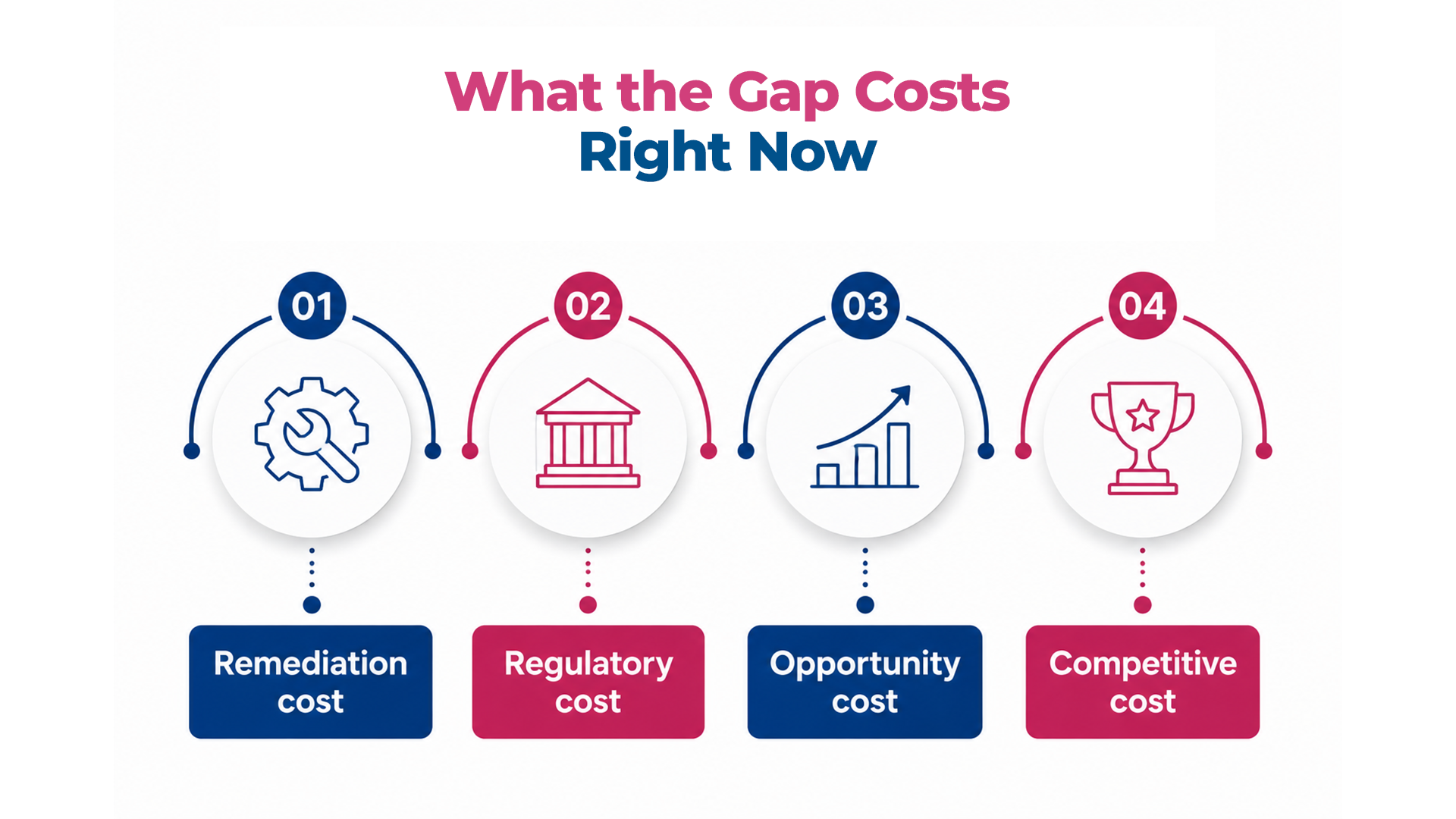

What the Gap Costs – Right Now

The cost is not a future exposure. It is a current liability accumulating across four measurable dimensions.

- Remediation cost: the cost of discovering and correcting AI failures after production propagation is consistently higher than the cost of the validation and governance infrastructure that would have prevented them. The £12 million remediation for a six-week loan pricing defect is one data point in a pattern that repeats at varying scales across the industry.

- Regulatory cost: the financial cost of a single significant regulatory finding – in direct penalties, remediation programme cost, management time, and operational disruption – typically exceeds years of investment in the governance infrastructure that would have prevented it.

- Opportunity cost: institutions without confidence infrastructure are not accumulating the compounding trust advantage that institutions building it are. Each clean regulatory interaction, each validated release, each enforced data contract builds institutional capability that becomes a competitive advantage.

- Competitive cost: the gap is bifurcating the competitive landscape in ways that are not yet fully visible but that the next three to five years will make unmistakable.

The Competitive Bifurcation

The institutions building trust infrastructure are compounding confidence. Each validated release is faster and more defensible than the last. Each regulatory interaction builds relationship capital. Speed and trust compound together – the delivery pipeline accelerates because the confidence infrastructure beneath it makes acceleration safe.

The institutions deferring trust infrastructure are compounding fragility. Each new model adds to the unmonitored risk surface. Each deployment cycle adds to governance debt. The ceiling on transformation ambition is approaching at the speed of their own deployment.

The next phase of AI-first banking will not be defined by who deployed AI first, whose models are most accurate, or who has the most comprehensive strategy. It will be defined by who built the infrastructure to trust their AI – in production, under examination, at enterprise scale, and over time. The institutions that make that decision now are not just managing risk. They are building the only foundation on which AI-first banking at genuine enterprise scale is possible. The institutions that defer it are not just accepting risk. They are ceding ground to those that did not defer.

This is the final article in the Engineering Trust in AI-First Banking series. The complete framework – all eleven sections, 36,000 words, the Four-Layer Trust Architecture applied across every dimension of AI-first banking – is available as a downloadable research report.

Download the Full Research Report: Engineering Trust in AI-First Banking: The Definitive Guide

Complete the Series

START FROM THE BEGINNING: Banking Has Entered the AI-First Era – Article 1 of 11

FAQ

1. What does the 80%/12% gap in AI banking deployment reveal about the state of the industry?

The gap, where 80% of large financial institutions use AI in core decisions but only 12% report a well-defined AI strategy, is not a paradox. It reveals that the banking industry has systematically invested in AI capability while underfunding the governance infrastructure that makes capability trustworthy at scale. The result is an industry with broad AI adoption and narrow execution confidence – and trust debt accumulating at the speed of deployment.

2. Why is the gap between AI capability and execution confidence described as a competitive bifurcation?

Because institutions on opposite sides of the gap are building structurally different competitive positions. Institutions with strong trust infrastructure can scale AI confidently, deploy faster with genuine assurance, and demonstrate governance maturity to regulators. Institutions without it face a ceiling: their AI programmes plateau at the point where trusted scale would be required, and remediation costs grow as governance debt compounds. The gap is not static: it is widening at the speed of deployment, and the institutions on the wrong side of it are losing ground on a compounding basis.

3. What are the four structural market forces that produced the AI capability vs. execution confidence gap?

The four forces are: investment allocated to capability and deployment speed, not validation and governance infrastructure; success measured at go-live, not production reliability over time; regulatory frameworks calibrated for the previous technology era, leaving governance requirements ambiguous until enforcement actions clarified them; and trust treated as an outcome of transformation rather than a system that must be engineered and maintained. Each force individually is addressable; together they produced the structural market condition that defines AI-first banking today.

4. How significant is the financial cost of the trust debt that AI programmes are accumulating?

Research published in late 2025 found that most companies deploying AI had experienced risk-related financial losses from compliance failures, bias in automated decisions, and flawed model outputs. In banking specifically, these are not operational costs: they are regulatory penalties, customer remediation events, and forced model rollbacks that carry direct financial consequences. The cumulative cost of unaddressed trust debt compounds over time: institutions that defer the investment in governance infrastructure consistently report that the eventual remediation cost exceeds the original governance investment by a significant multiple.

5. What does winning the next decade of AI-first banking actually require?

It requires building the trust infrastructure that makes AI reliable in production, under examination, at enterprise scale, and over time, not just capable in demonstration. The institutions that will define the next decade are not those that deployed AI earliest; they are those that built the data governance, continuous validation, explainability, and integrated oversight architecture that allows AI to perform at the standard banking requires. The competitive divide is between institutions that treat trust as a system and those that treat it as an outcome, and that divide is now measurable in regulatory findings, production incident rates, and the widening gap between AI adoption and AI confidence.

6. Is there a point at which the trust debt is too large to close without a full programme reset?

Yes. Institutions that have deployed multiple AI models without monitoring infrastructure, across data environments without governance coverage, and in compliance functions without explainability architecture face a remediation challenge that is significantly more complex than building correctly from the start. The practical threshold is approximately three to four years of compound deployment without governance investment. At that point, the surface area of unmonitored risk, the interdependencies between models, and the data lineage gaps typically require a structured remediation programme rather than incremental fixes. This is why the decision to build trust infrastructure early is not optional: it is the difference between a manageable investment and an existential remediation.