An AI implementation framework for banking CIOs is a structured approach for integrating artificial intelligence into core operations to manage risk, ensure scalability, and establish enterprise-wide capabilities. A successful framework is built on five essential pillars that provide a repeatable model for deploying AI across the organization.

1) Strategic Alignment:

Ensuring all AI initiatives directly support measurable business objectives.

2) Data Governance:

Establishing the infrastructure and policies for high-quality, secure, and compliant data.

3) Technology Stack:

Implementing a scalable MLOps platform to manage the end-to-end model lifecycle.

4) Talent and Organization:

Cultivating the necessary skills and structuring teams, often through a Center of Excellence (CoE).

5) Risk and Compliance:

Creating a comprehensive model for governance, ethical oversight, and regulatory adherence.

What is a Strategic AI Framework in Banking?

A strategic AI framework is a comprehensive blueprint that aligns technology initiatives with core business objectives to drive measurable transformation. It functions as a holistic organizational strategy, not merely a technology roadmap, by ensuring that every AI investment is purposeful and delivers value.

A strategic AI framework transforms artificial intelligence from a series of isolated projects into a core business capability that drives competitive advantage.

The framework’s primary goal is to provide a structured, repeatable process for deploying AI solutions. This is achieved by:

a) Defining Business Alignment:

Each AI initiative must be tied to a specific key performance indicator (KPI), such as revenue growth, operational efficiency, or risk reduction.

b) Establishing Data Readiness:

It confirms that foundational data infrastructure, governance policies, and quality standards are in place before projects begin.

c) Standardizing Technology:

It guides the selection of scalable tools and platforms that support the entire machine learning lifecycle, from development to deployment and monitoring.

d) Structuring the Organization:

It defines roles, responsibilities, and team structures, like an AI Center of Excellence, to build and manage AI capabilities effectively.
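The four checks above can be sketched as a simple intake gate that an initiative must clear before work begins. This is a minimal illustration, not a prescribed process: the `AIInitiative` fields, the `intake_gaps` function, and the KPI category names are hypothetical and are only modeled on the examples given in the text.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical KPI categories, taken from the examples in the text.
ALLOWED_KPI_CATEGORIES = {"revenue_growth", "operational_efficiency", "risk_reduction"}


@dataclass
class AIInitiative:
    name: str
    kpi_category: Optional[str] = None  # business objective the initiative supports
    kpi_target: Optional[str] = None    # measurable target, free text in this sketch
    data_ready: bool = False            # data governance and quality checks confirmed
    owner: Optional[str] = None         # accountable team or role


def intake_gaps(initiative: AIInitiative) -> list[str]:
    """Return the reasons an initiative is not ready to start (empty if ready)."""
    gaps = []
    if initiative.kpi_category not in ALLOWED_KPI_CATEGORIES:
        gaps.append("no recognized KPI category")
    if not initiative.kpi_target:
        gaps.append("no measurable KPI target")
    if not initiative.data_ready:
        gaps.append("data readiness not confirmed")
    if not initiative.owner:
        gaps.append("no accountable owner")
    return gaps
```

An initiative with an empty gap list can proceed. Any other result tells the sponsoring team which part of the framework still needs attention.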

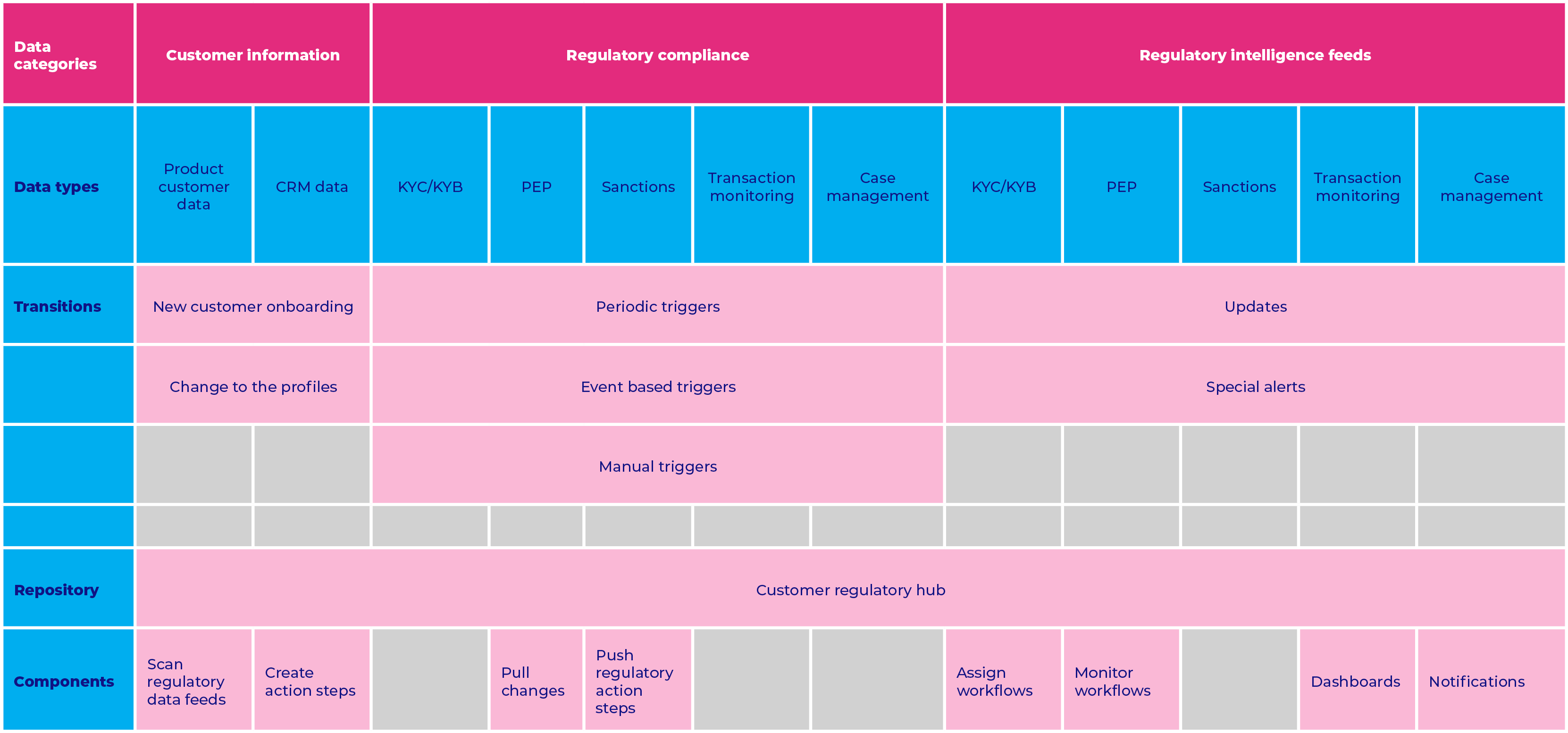

Architecting Data Governance for AI Success

CIOs architect data governance for AI success by establishing a centralized framework that treats data as a strategic, governable enterprise asset. Effective data governance is critical for regulatory compliance, model accuracy, and building trustworthy AI systems.

Implementation requires several key components:

i) Governance Council:

A central body responsible for setting data quality standards, access policies, and usage protocols across the institution.

ii) Data Lineage and Traceability:

The ability to track data from its source to its use in models, which is essential for audits and model explainability.

iii) Unified Data Platform:

A data fabric or similar architecture that gives analytics and machine learning teams secure, self-service access to clean, reliable data.

iv) Compliance and Privacy:

Clear processes for managing data privacy, user consent, and adherence to regulations like GDPR and CCPA.
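To make data lineage concrete, the sketch below records which datasets each derived dataset was built from, then walks that graph back to the original sources. It is an illustrative data structure only: `LineageRecord`, `trace_to_sources`, and every dataset name in the example catalog are hypothetical, and a real bank would typically rely on a dedicated catalog or lineage tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageRecord:
    dataset: str          # name of the derived dataset or feature table
    transformation: str   # short description of how it was produced
    parents: tuple = ()   # names of the datasets it was derived from


def trace_to_sources(dataset: str, catalog: dict) -> set:
    """Return the root datasets (those with no recorded parents) feeding `dataset`."""
    roots, stack, seen = set(), [dataset], set()
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # guard against cycles and repeated parents
        seen.add(current)
        record = catalog.get(current)
        if record is None or not record.parents:
            roots.add(current)
        else:
            stack.extend(record.parents)
    return roots


# Hypothetical catalog: a model's training table and the tables behind it.
catalog = {
    "credit_training_set": LineageRecord(
        "credit_training_set", "join features to labels",
        ("credit_features", "loan_outcomes")),
    "credit_features": LineageRecord(
        "credit_features", "aggregate per customer",
        ("core_banking_accounts", "bureau_scores")),
}
```

During an audit, calling `trace_to_sources("credit_training_set", catalog)` answers the question of which source systems ultimately fed a given model.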

Practical Considerations

1) Risk of Poor Data:

Without robust governance, poor data quality is likely to undermine AI initiatives by introducing model bias and producing unreliable outcomes.

2) Investment:

Establishing strong data governance is a significant undertaking that requires sustained investment in technology, processes, and personnel.

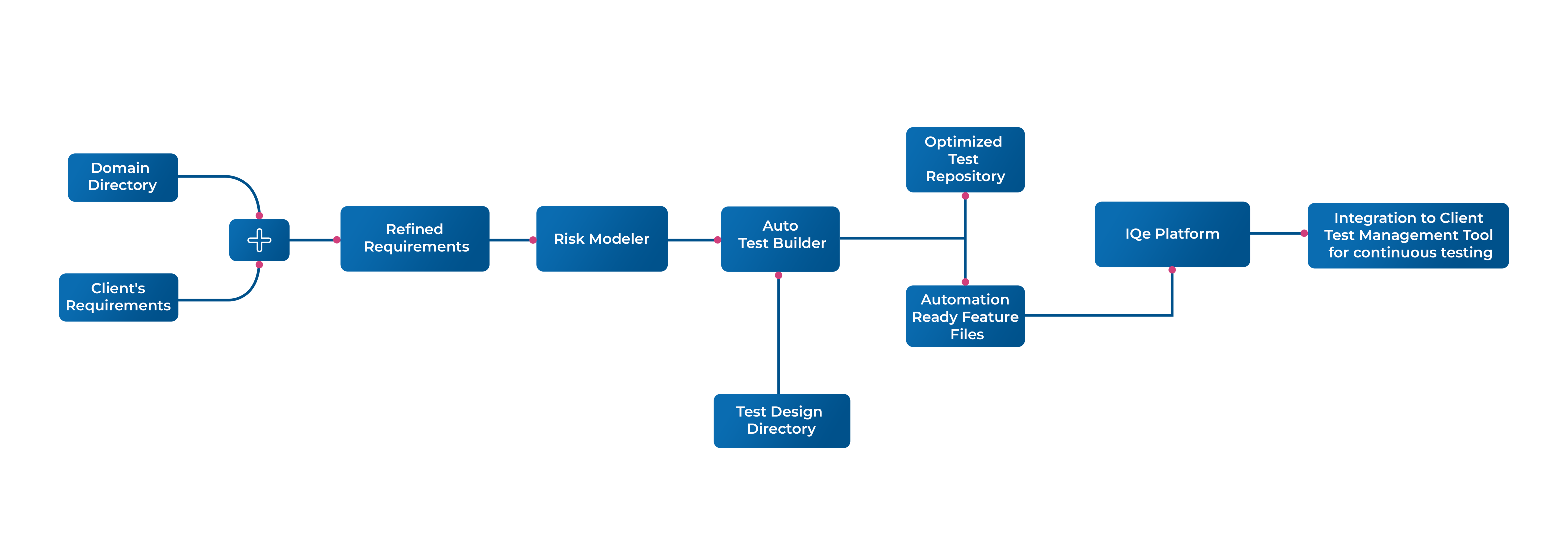

Core Components of a Scalable AI Technology Stack

A scalable AI technology stack consists of integrated components that automate and manage the end-to-end machine learning lifecycle. This stack enables banks to develop, deploy, and maintain AI models efficiently, reliably, and at scale.

The MLOps platform is the core of a modern AI stack, providing the automation and orchestration necessary to move models from experiment to production reliably.

The stack is typically composed of four distinct layers:

a) Infrastructure Layer:

A hybrid cloud infrastructure provides flexible and scalable computing power and storage for training and hosting models.

b) Data Layer:

Features data lakes, warehouses, and real-time streaming platforms (e.g., Kafka) to ingest and process large volumes of structured and unstructured data.

c) MLOps Platform:

Automates and standardizes model development, training, deployment, and monitoring using tools for version control (Git), containerization (Docker), and container orchestration (Kubernetes).

d) Application Layer:

Includes the final AI models and APIs that integrate with business systems, such as fraud detection engines, credit scoring models, or customer service bots.
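One job of the MLOps layer is to control how a model moves from experiment to production. The sketch below shows that idea as a promotion gate: a model version, pinned to a Git commit and a container image, advances one stage only when every check passes. The stage names, the `ModelVersion` fields, and the `promote` function are assumptions made for illustration and do not describe any particular MLOps product.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Stage(Enum):
    # Hypothetical stage names; the text does not prescribe specific stages.
    DEVELOPMENT = 1
    STAGING = 2
    PRODUCTION = 3


@dataclass(frozen=True)
class ModelVersion:
    name: str
    git_commit: str   # commit of the training code (version control)
    image_tag: str    # container image used to serve the model
    stage: Stage = Stage.DEVELOPMENT


def promote(version: ModelVersion, checks: dict) -> ModelVersion:
    """Return a copy of `version` one stage further along, if all checks passed."""
    failed = [name for name, passed in checks.items() if not passed]
    if failed:
        raise ValueError(f"promotion blocked by failed checks: {failed}")
    if version.stage is Stage.PRODUCTION:
        raise ValueError("model is already in production")
    return replace(version, stage=Stage(version.stage.value + 1))
```

Keeping `ModelVersion` immutable means each promotion produces a new record, which leaves a trail of what was deployed and when it changed stage.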

How to Cultivate AI Talent and Organizational Skills

Banks cultivate the necessary AI talent through a dual strategy of internal development and external acquisition. This approach builds a sustainable talent pipeline and embeds AI expertise across the organization.

i) Upskilling and Reskilling:

Invest in training programs for existing technology and business teams in data science, machine learning engineering, and data analytics.

ii) AI Center of Excellence (CoE):

Establish a dedicated CoE to centralize expertise, define best practices, and mentor talent across business units.

iii) External Recruitment:

Attract top-tier external talent by offering technology-focused career paths and fostering a culture of innovation that rivals that of technology companies.

iv) Strategic Partnerships:

Collaborate with universities and specialized training firms to create a pipeline of new talent with relevant, up-to-date skills.

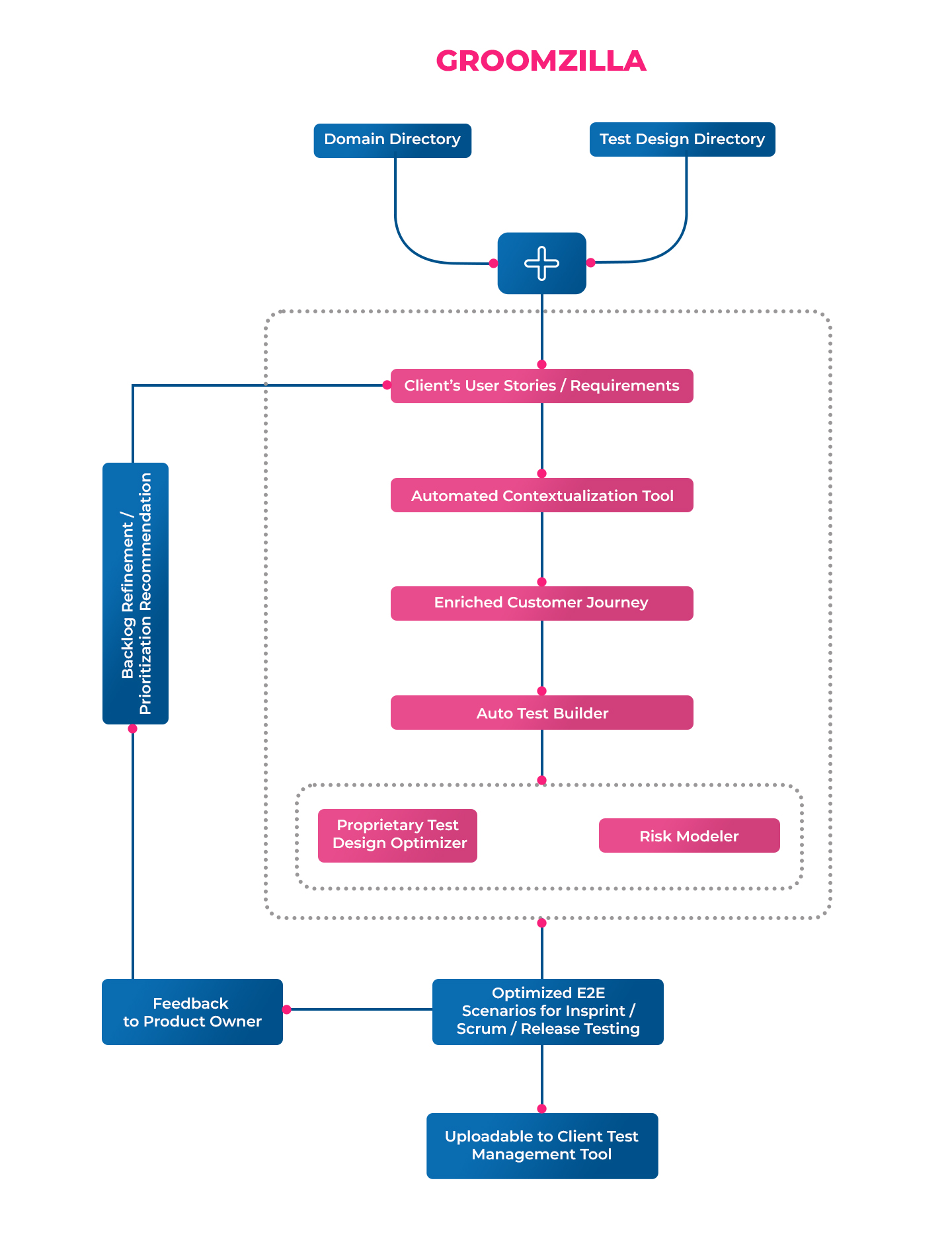

The Function of a Center of Excellence in Scaling AI

An AI Center of Excellence (CoE) functions as the central governing and enabling body for scaling AI initiatives across a financial institution. Its primary role is to transition the organization from siloed, ad-hoc AI projects to a unified, strategic program that maximizes ROI.

The CoE has three main responsibilities:

1) Standardization and Governance:

It establishes and enforces standards for tools, methodologies, and governance frameworks to ensure consistency, quality, and compliance.

2) Enablement and Support:

It acts as an internal consultancy, providing deep expertise and technical support to business units as they develop and deploy their own AI solutions.

3) Innovation and Strategy:

It researches emerging AI technologies, identifies high-value use cases, and manages the enterprise AI project portfolio to ensure alignment with strategic goals.

Ensuring AI Model Compliance and Governance

Financial institutions ensure AI models are compliant and governed by implementing a rigorous AI risk management framework that operates throughout the model’s entire lifecycle. This extends traditional model risk management (MRM) to address the unique challenges of machine learning, such as bias and explainability.

Key elements of this framework include:

a) Formal Validation Process:

A mandatory review process that assesses model fairness, bias, explainability, and robustness before any model is deployed.

b) Human-in-the-Loop (HITL) Systems:

For critical decisions, especially those with significant customer or financial impact, a human must provide final oversight and approval.

c) Continuous Monitoring:

Automated tools that continuously track model performance, data drift, and algorithmic bias once a model is in production.

d) Comprehensive Documentation:

Meticulous records of a model’s development, testing, validation, and operational performance to satisfy regulatory requirements.
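As an example of what continuous monitoring can compute, the sketch below measures data drift with the Population Stability Index (PSI), which compares the distribution of a feature in production against its distribution at training time. The text does not name a specific drift metric, so the choice of PSI is an assumption, as are the function name, the default of 10 equal-width bins, and the small `eps` used to avoid dividing by zero.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i).

    `expected` is the baseline sample (e.g. training data) and `actual` is the
    recent production sample. Bin edges are derived from the baseline only.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clip values outside the baseline range
            counts[idx] += 1
        # Floor each proportion at eps so empty bins do not break the logarithm.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the two samples fall into the bins in identical proportions, and larger values indicate a bigger shift. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but the alert threshold is a judgment call for the bank's own validation team.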

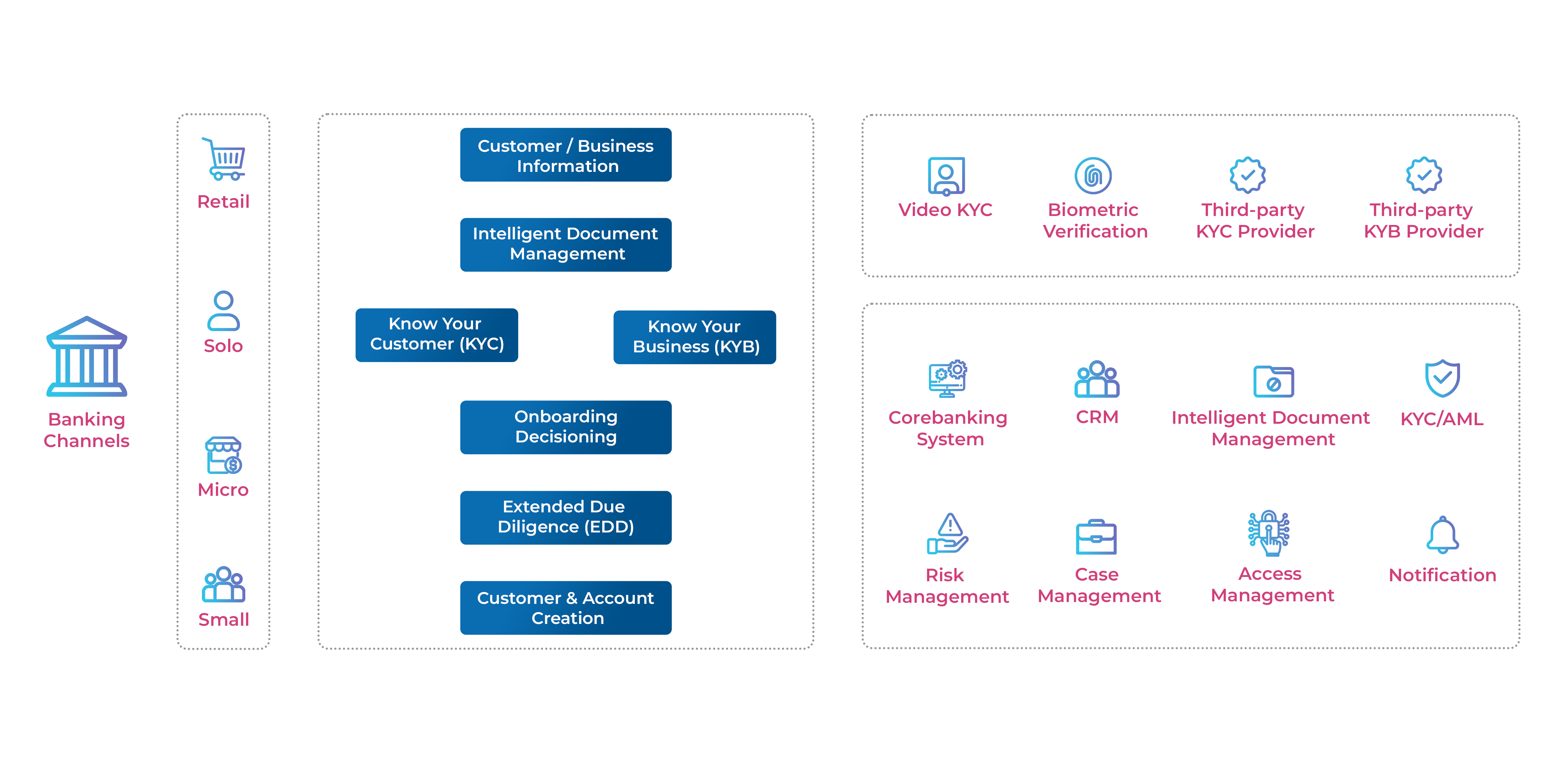

Key Use Cases for AI in Banking Operations

The key use cases for AI in financial analysis and banking operations create measurable value by improving efficiency, reducing risk, and enhancing customer experience.

i) Operational Automation:

AI streamlines back-office processes such as Know Your Customer (KYC) checks, document processing, and compliance reporting, which reduces costs and minimizes human error.

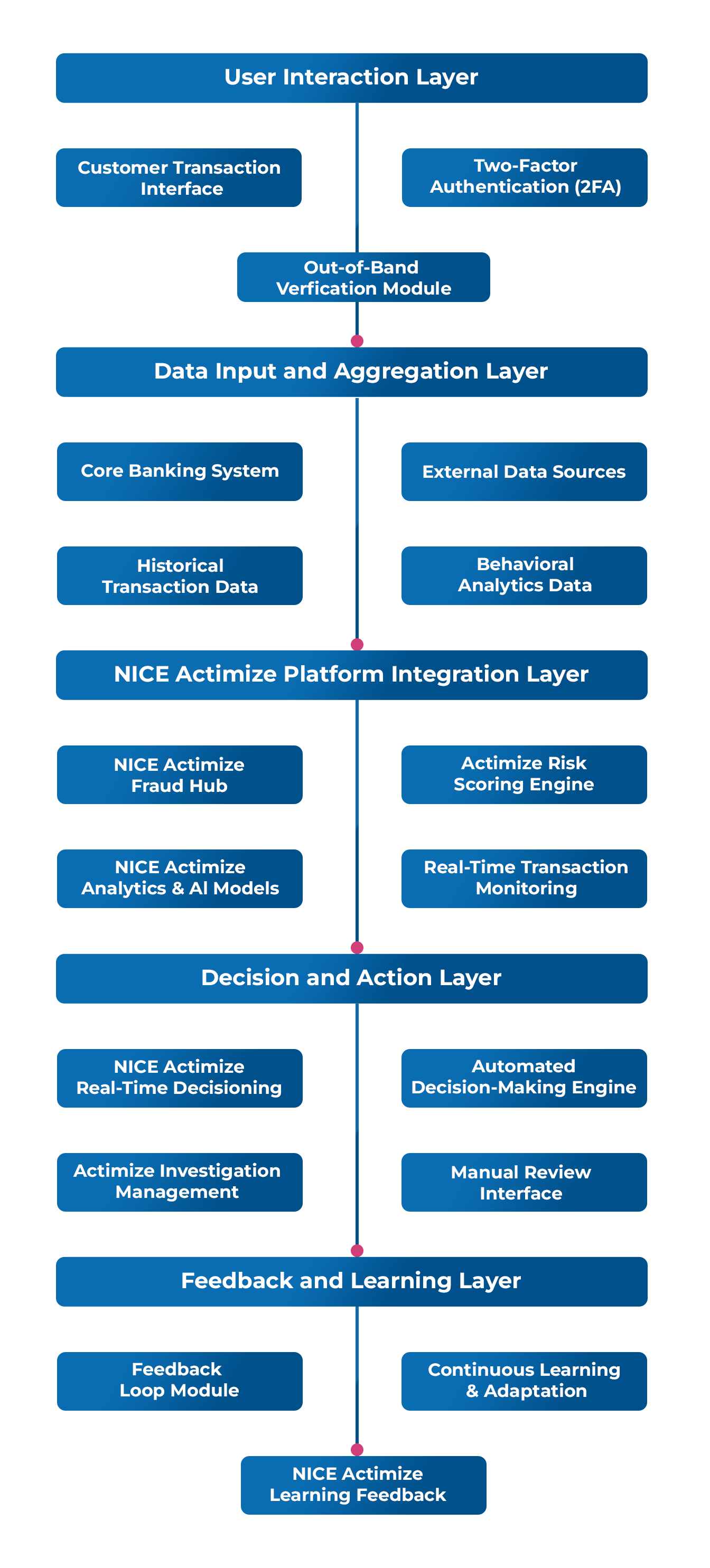

ii) Fraud Detection:

Machine learning algorithms analyze transaction patterns in real time to identify and block fraudulent activity with greater speed and precision than rule-based systems.

iii) Credit Scoring and Risk Assessment:

AI models analyze vast datasets to produce more accurate credit scores and risk assessments, leading to better lending decisions.

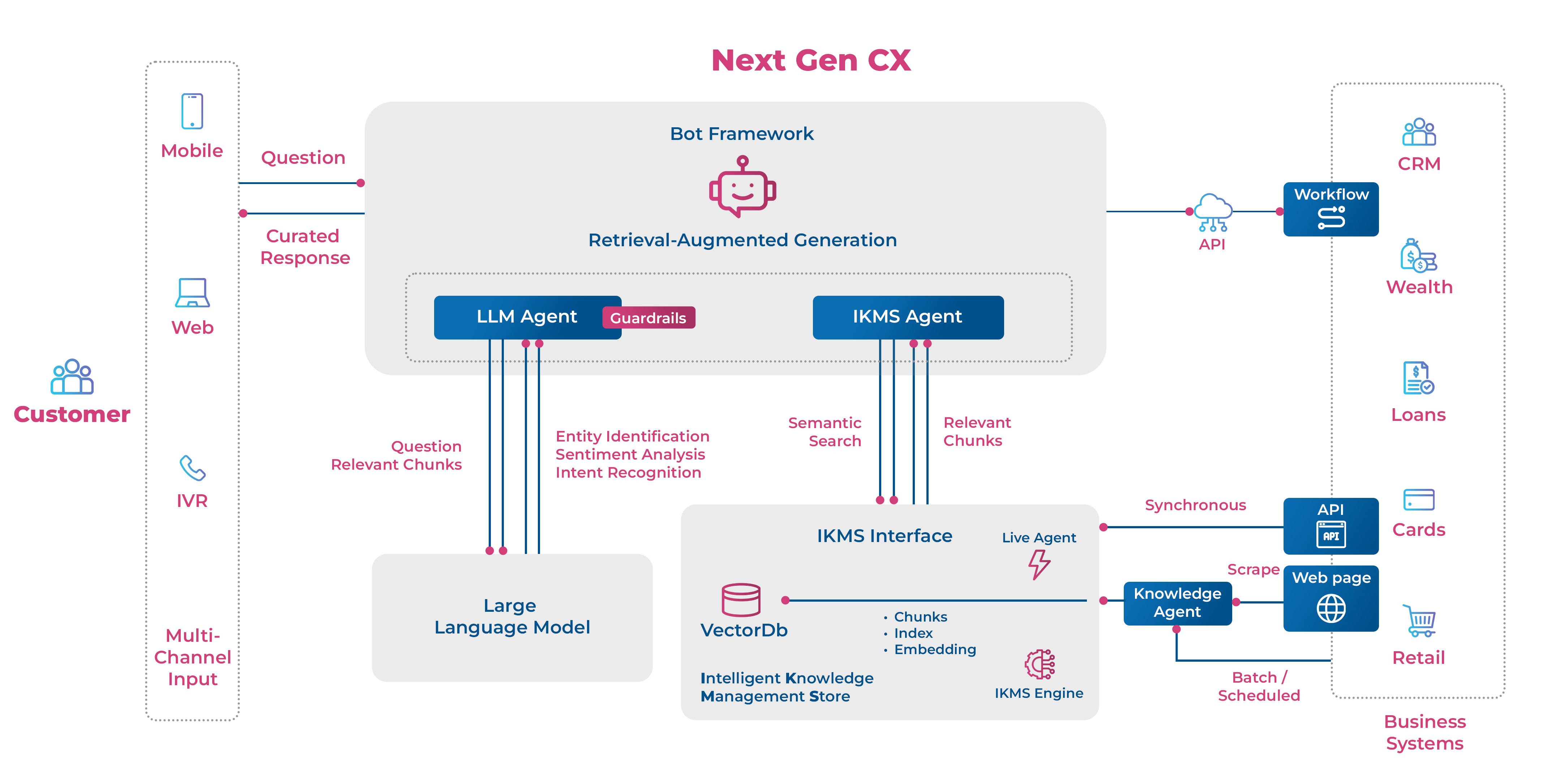

iv) Customer Service:

AI-driven chatbots and virtual assistants provide 24/7 support for common inquiries, freeing human agents to handle more complex issues.

v) Algorithmic Trading:

In investment banking, AI is used to identify market patterns and optimize trading strategies and portfolio management.
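To illustrate how pattern-based fraud detection differs from a fixed rule, the sketch below scores a transaction by how far its amount deviates from that customer's own history, using the median and the median absolute deviation (MAD). This is a deliberately simplified statistical stand-in: production fraud systems typically use trained models over many features. The function name and the review threshold are hypothetical.

```python
import statistics

REVIEW_THRESHOLD = 3.5  # hypothetical cut-off; tune against labeled fraud data


def amount_anomaly_score(history, amount):
    """Robust z-score of `amount` relative to a customer's past amounts."""
    median = statistics.median(history)
    mad = statistics.median([abs(x - median) for x in history])
    if mad == 0:
        # All past amounts are (nearly) identical: any deviation is unusual.
        return 0.0 if amount == median else float("inf")
    # 1.4826 scales the MAD to match the standard deviation for normal data.
    return abs(amount - median) / (1.4826 * mad)


def needs_review(history, amount):
    """Flag the transaction when its score exceeds the review threshold."""
    return amount_anomaly_score(history, amount) > REVIEW_THRESHOLD
```

A static rule such as "flag anything over a fixed amount" treats every customer the same. Because this score is relative to each customer's history, the same amount can be routine for one account and anomalous for another.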

Frequently Asked Questions (FAQ)

What is the typical timeframe for implementing an enterprise AI framework?

The typical timeframe for implementing a foundational enterprise AI framework is 12 to 24 months. This period covers establishing data governance, deploying the core technology stack, and forming an initial Center of Excellence.

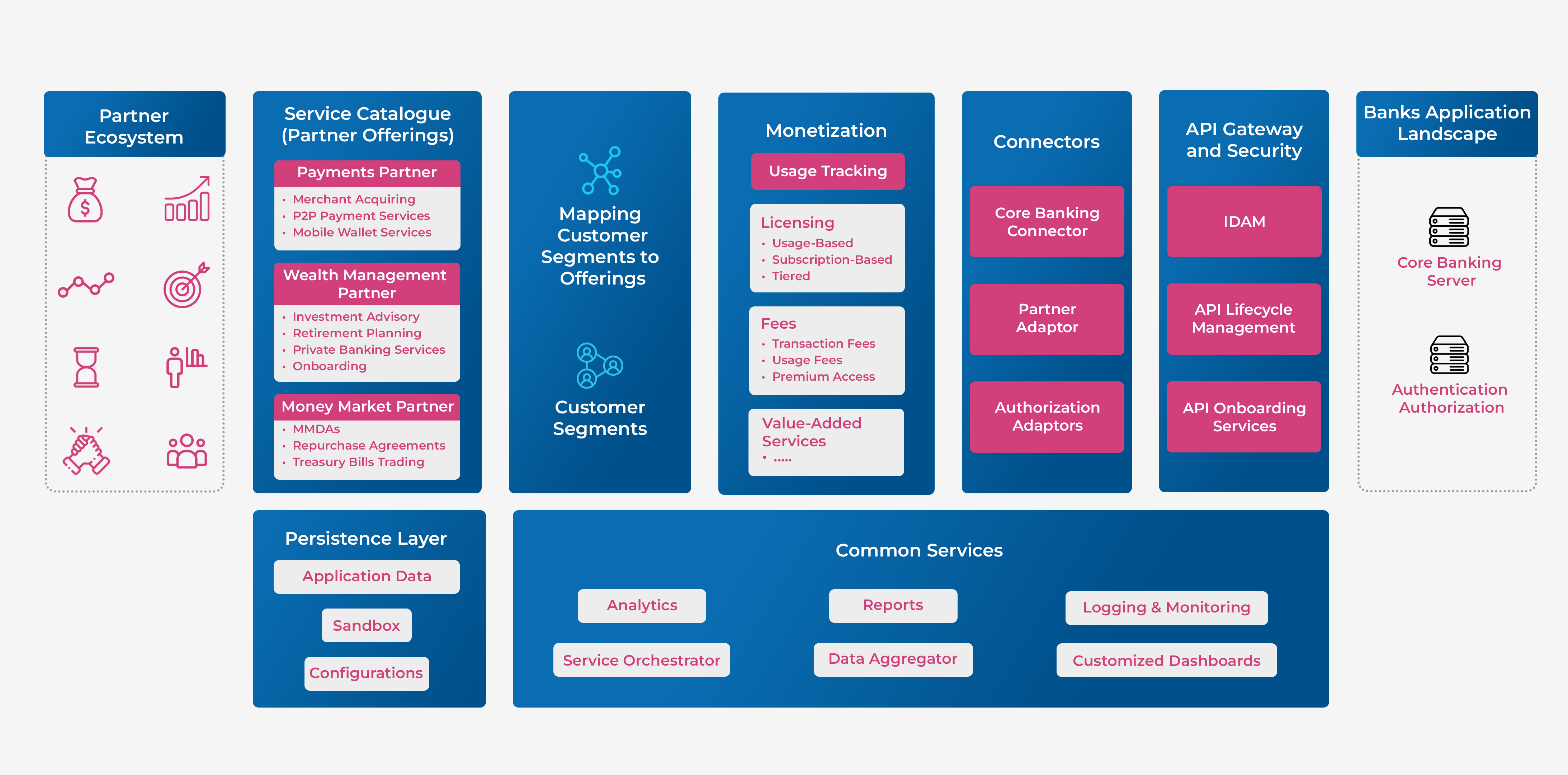

Can smaller banks adopt the same AI framework as large institutions?

Yes, smaller banks can adopt the framework's principles, but the implementation must be scaled to their resources. They may rely more on third-party cloud platforms and managed services instead of building large in-house teams and infrastructure.

How does an AI framework address data privacy and security?

A robust AI framework integrates data privacy and security by design. It accomplishes this by enforcing strict data access controls, using data anonymization and encryption, and mandating regular audits to ensure compliance with regulations like GDPR.
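One practical building block is replacing direct identifiers with keyed hashes before data reaches analytics teams. The sketch below uses HMAC-SHA256 from the Python standard library. The function name and the example key are hypothetical, and the key would need to live in a managed secret store. Note that this is pseudonymization rather than full anonymization: under GDPR, pseudonymized data is still personal data, because anyone holding the key can re-link it.

```python
import hashlib
import hmac


def pseudonymize(customer_id: str, secret_key: bytes) -> str:
    """Return a stable, keyed token that stands in for a customer identifier."""
    digest = hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical key for illustration only; never hard-code real keys.
EXAMPLE_KEY = b"replace-with-a-managed-secret"
token = pseudonymize("CUST-000123", EXAMPLE_KEY)
```

Because the same input and key always yield the same token, records can still be joined across datasets without exposing the underlying identifier.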

What is the difference between AI governance and general IT governance?

AI governance expands on IT governance to manage risks unique to machine learning, such as algorithmic bias, model explainability, and data drift. Its focus is on the lifecycle and ethical implications of automated decision-making systems.
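As one example of a risk that AI governance has to measure, the sketch below computes a simple algorithmic bias indicator: the gap in approval rates between customer groups, often called the demographic parity difference. The choice of metric, the function names, and the group labels are assumptions for illustration. Real fairness reviews usually combine several metrics with legal and domain judgment.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())
```

A gap of zero means every group is approved at the same rate. What counts as an acceptable gap is a policy decision, not something the code can settle.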

Should a bank build its own AI models or use third-party solutions?

The decision depends on the use case and internal capabilities. Banks should build custom models for proprietary functions that create a competitive advantage (e.g., unique risk models), while using proven third-party solutions for common tasks (e.g., document analysis).