Banking is entering a new phase of AI adoption. What began as generative assistants supporting knowledge work is now evolving toward supervised autonomy: systems that can plan steps, use tools, and execute defined workflows. This shift from advice to action fundamentally changes the operating model. At the same time, expectations around resilience, third-party dependency, transparency, recordkeeping, and human oversight are tightening. As AI starts planning and acting, the question is no longer whether banks can deploy it, but whether they can govern and control it at scale.

1. Autonomous AI agent orchestration vs traditional automation: what’s fundamentally different, and why does it matter now?

Traditional automation (BPM/RPA) relies on predefined, deterministic workflows. Processes are designed in advance, executed step by step, and handle exceptions through fixed rules. RPA bots mimic human actions across systems to perform repetitive, rules-based tasks. This works well for stable, predictable processes, but it is tightly constrained by what has been scripted and by the reliability of user interfaces and upstream systems. When conditions change or new scenarios appear, automation typically breaks or requires redesign.

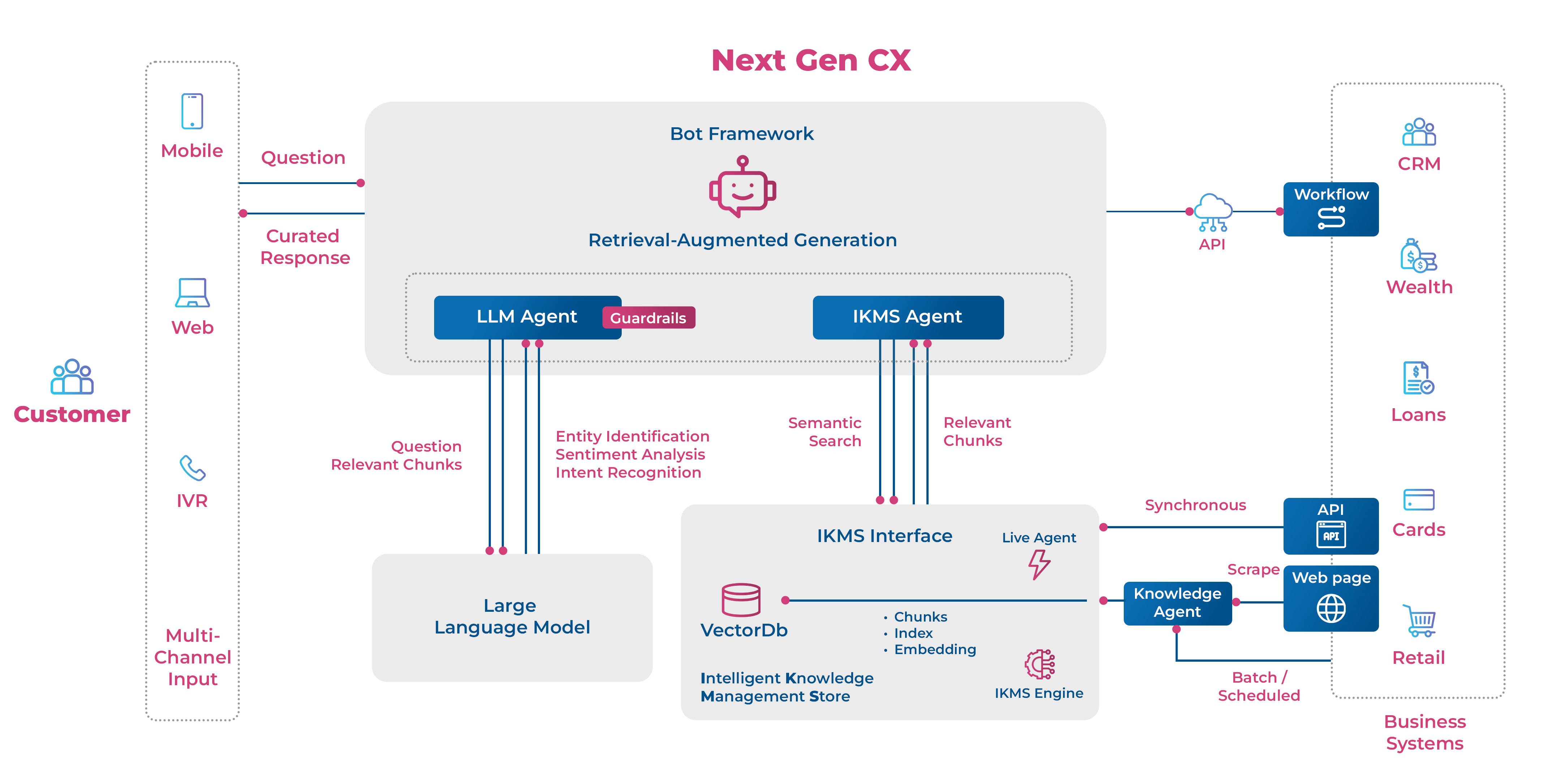

Autonomous agent orchestration works differently. It is goal-driven rather than step-driven. Instead of following a fixed path, AI agents can plan, choose actions, use tools, and adjust their approach as they learn more about the situation. Modern LLM-based agents can coordinate multiple specialized agents and adapt dynamically when the environment changes. Techniques such as “reason-and-act” allow the system to interleave thinking with actions like querying data or calling tools, improving resilience and completion in complex, real-world workflows where the path cannot be fully defined upfront.
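The interleaving of thinking and acting described above can be sketched in a few lines. This is a minimal illustration, not a production agent: `scripted_policy` is a hypothetical stand-in for an LLM call, and `lookup_balance` is a stub tool invented for the example, with made-up account data.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    thought: str    # the agent's reasoning for this step
    action: str     # name of the tool to call, or "finish"
    argument: str   # tool input, or the final answer when action == "finish"


def lookup_balance(account_id: str) -> str:
    # Hypothetical tool: a stub standing in for a core-banking query.
    balances = {"ACC-1": "1200.00", "ACC-2": "35.50"}
    return balances.get(account_id, "unknown account")


TOOLS: dict[str, Callable[[str], str]] = {"lookup_balance": lookup_balance}


def scripted_policy(goal: str, observations: list[str]) -> Step:
    # Assumption: in a real system an LLM would produce this step.
    # Here the decision is hard-coded so the loop is runnable.
    if not observations:
        return Step("I need the balance first.", "lookup_balance", goal)
    return Step("I have what I need.", "finish", f"Balance is {observations[-1]}")


def run_agent(goal: str, policy, max_steps: int = 5):
    observations: list[str] = []
    trace: list[Step] = []
    for _ in range(max_steps):          # a step budget bounds the loop
        step = policy(goal, observations)
        trace.append(step)              # keep every thought and action for review
        if step.action == "finish":
            return step.argument, trace
        tool = TOOLS.get(step.action)
        if tool is None:
            observations.append(f"error: unknown tool {step.action}")
            continue
        observations.append(tool(step.argument))
    return "stopped: step budget exhausted", trace


answer, trace = run_agent("ACC-1", scripted_policy)
```

Unlike a scripted RPA flow, the path here is not fixed in advance: each step is chosen from what has been observed so far, and the recorded trace is what later makes the behavior reviewable.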

The distinction matters now in banking because autonomy is colliding with two fast-moving realities:

First, supervisory and policy frameworks are hardening around high-risk uses such as credit decisioning, requiring disciplined data quality, logging/traceability, documentation, robustness/cybersecurity, and human oversight.

Second, financial authorities are increasingly explicit that AI can introduce or amplify vulnerabilities (e.g., third-party concentration, cyber risk, governance gaps). They have stressed both the rapid acceleration of adoption and the need to close information gaps and strengthen governance and monitoring, conditions that become even more critical when AI can take actions, not just generate text.

2. Why does end-to-end autonomous execution rarely scale in banking? What structural barriers are preventing autonomous AI from scaling in BFS, and what must fundamentally change?

Banks have moved beyond pilots for GenAI-style copilots, but end-to-end autonomous execution is rare because banking is a high-control, high-audit domain where “doing” is harder than “suggesting.” Supervisors themselves highlight that monitoring AI use is still early and complicated by information gaps, while vulnerabilities span governance, data quality, model risk, cyber risk, and third-party concentration. The barriers are structural, not just technical, and they tend to cluster into five interlocking constraints:

- Governance was built for models, not for autonomous actors: Most enterprise governance frameworks assume a static model that can be developed, approved, validated, and monitored in isolation. Agentic AI challenges this assumption. Its behavior emerges from prompts, policies, tool access, memory, and multi-step planning, often distributed across multiple agents and evolving over time. This makes traditional concepts like validation, effective challenge, and evidence harder to apply. To govern agentic systems, organizations must shift focus from certifying individual models to controlling dynamic systems: defining clear ownership, enforcing action boundaries, logging decisions, and ensuring every action remains explainable, auditable, and accountable.

- Data fragmentation and weak process observability. Operational resilience frameworks increasingly require mapping of critical operations and dependencies; if you cannot reliably map and observe the “as-is” chain of people, processes and systems, you cannot safely let an agent traverse it.

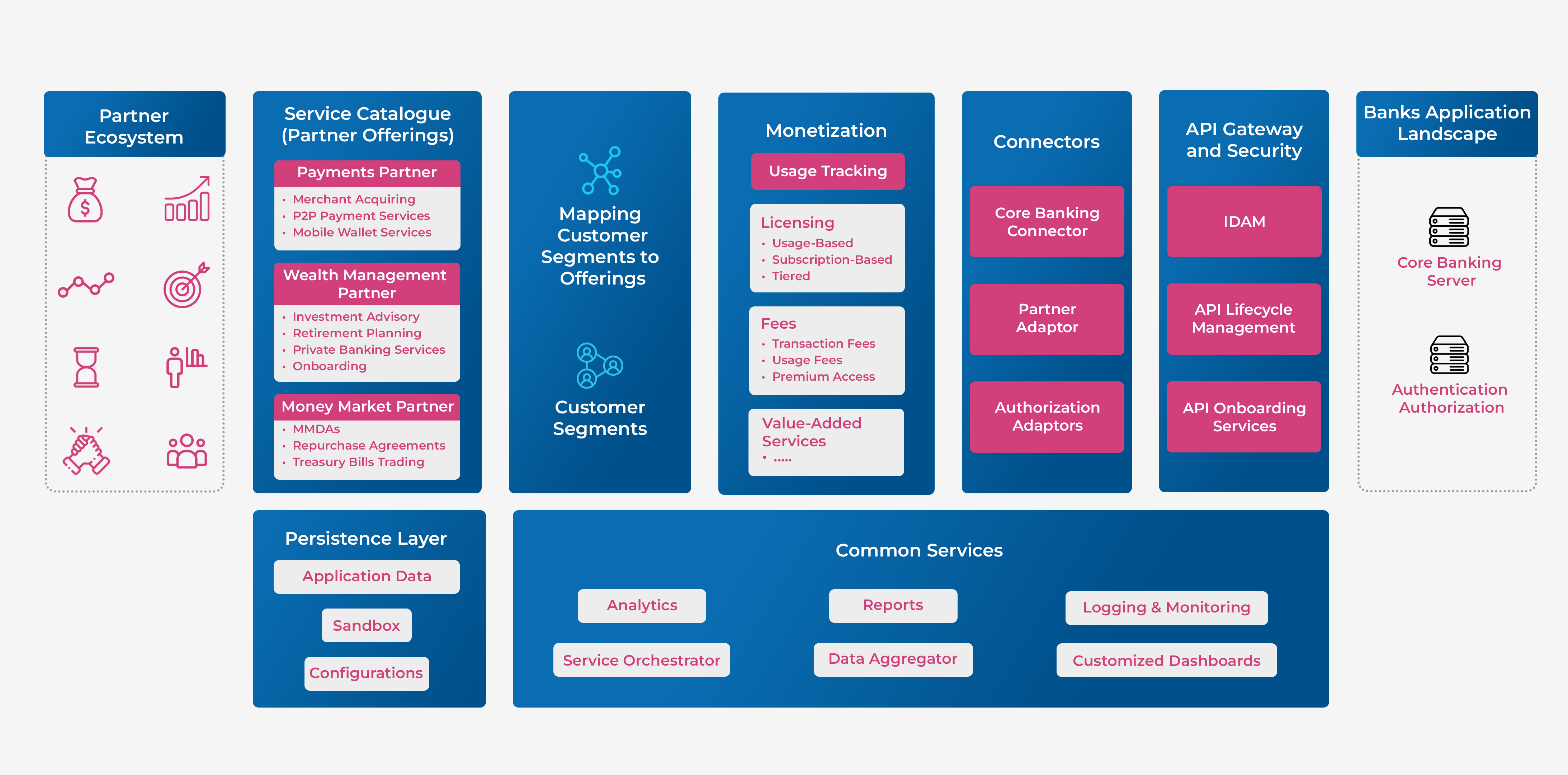

- Third-party dependency and concentration constraints. End-to-end AI workflows depend on a growing ecosystem of cloud platforms, model providers, external data sources, and AI tools. This creates third-party dependency and concentration risk, especially when critical processes rely on a small set of providers. Banks must retain clear ownership, ensure strong documentation and oversight, and actively manage concentration risk so autonomy does not weaken operational resilience or control.

- Control and evidence define the limits of autonomy: Autonomous execution only works if every action can be explained, evidenced, and audited. Organizations must be able to show what the system did, why it acted, what data it used, and under which approvals. As AI takes on execution roles, autonomy becomes less about model capability and more about control design, recordkeeping, and traceability, turning governance and data architecture into the true enablers of safe, scalable autonomy.

- Operational resilience and safe failure by design: Disruptions are no longer treated as exceptions but as expected events. Autonomous agents may introduce new failure modes (misused tools, cascading actions, or misinterpreted policies) that can amplify impact if unmanaged. As AI executes workflows, resilience must be engineered into the system itself, with defined tolerances, controlled fallbacks, and tested recovery paths. Safe autonomy depends on designing agents to fail predictably, contain damage, and recover within acceptable limits.
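One common way to engineer "fail predictably, contain damage" is a circuit breaker around agent actions. The sketch below is illustrative only: the class name, the failure threshold, and the fallback of routing work to a human queue are assumptions made for the example, not a prescribed design.

```python
class CircuitBreaker:
    """Stops calling a failing action once a defined tolerance is exceeded."""

    def __init__(self, max_failures: int):
        self.max_failures = max_failures  # the defined tolerance
        self.failures = 0
        self.open = False                 # open = agent action is blocked

    def call(self, action, fallback):
        if self.open:
            # Containment: do not retry the risky action, use the fallback.
            return fallback()
        try:
            result = action()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.open = True
            return fallback()
        self.failures = 0                 # a success resets the counter
        return result

    def reset(self):
        # Recovery path: in practice this would follow a human review.
        self.failures = 0
        self.open = False


def failing_action():
    raise RuntimeError("downstream system unavailable")


def human_queue():
    # Hypothetical controlled fallback: hand the case to an operator.
    return "escalated to human queue"


breaker = CircuitBreaker(max_failures=2)
first = breaker.call(failing_action, human_queue)
second = breaker.call(failing_action, human_queue)
# The breaker is now open, so even a healthy action is not attempted.
blocked = breaker.call(lambda: "ok", human_queue)
```

The design choice is that the agent never decides on its own to resume: once the tolerance is breached, every call takes the fallback until `reset()` is invoked.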

Unlocking scale requires not simply “more pilots,” but an agent operating model that makes execution governable. Banks need an “autonomy control plane” that enforces least-privilege tool access, segregation of duties, and audit-grade traceability; aligns validation to behavior (end-to-end outcomes, drift monitoring, failure thresholds) rather than just static model metrics; and integrates third-party resilience and exit planning as first-class design constraints, consistent with model risk, outsourcing, and operational resilience frameworks.
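To make the control-plane idea concrete, here is a minimal sketch of two of its checks: least-privilege tool access and segregation of duties, with every decision recorded. All names (`AgentIdentity`, `ControlPlane`, the `approve_` prefix convention, the tool names) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class AgentIdentity:
    name: str
    allowed_tools: frozenset  # explicit allow-list: anything else is denied


@dataclass
class ControlPlane:
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, tool: str,
                  initiated_by: Optional[str] = None) -> bool:
        if tool not in agent.allowed_tools:
            # Least privilege: the tool was never granted to this agent.
            allowed, reason = False, "tool not granted to this agent"
        elif tool.startswith("approve_") and initiated_by == agent.name:
            # Segregation of duties: the initiator may not approve its own action.
            # Assumption: approval tools are identified by an "approve_" prefix.
            allowed, reason = False, "initiator cannot approve own action"
        else:
            allowed, reason = True, "within granted permissions"
        # Every decision, allowed or denied, leaves a record.
        self.audit_log.append({
            "agent": agent.name,
            "tool": tool,
            "initiated_by": initiated_by,
            "allowed": allowed,
            "reason": reason,
        })
        return allowed


maker = AgentIdentity("maker", frozenset({"draft_payment"}))
checker = AgentIdentity("checker", frozenset({"approve_payment"}))

plane = ControlPlane()
plane.authorize(maker, "draft_payment")                            # allowed
plane.authorize(maker, "approve_payment", initiated_by="maker")    # denied
plane.authorize(checker, "approve_payment", initiated_by="maker")  # allowed
```

The point of the sketch is that authorization sits outside the agent: the agent can plan whatever it likes, but the control plane decides which actions actually run.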

3. Is legacy architecture really the biggest barrier?

From today’s AI adoption lens, legacy systems are rarely the primary constraint. Most banks have already modernized enough to expose APIs, integrate data layers, and deploy AI pilots. The real bottleneck is not infrastructure—it’s operational confidence. Agentic AI shifts from insight to action.

The challenge is trusting AI to execute across domains where data ownership, risk controls, and process accountability are fragmented. Even modern architectures struggle if workflows lack clear decision rights, clean data lineage, and real-time observability.

Legacy becomes a blocker only when it prevents safe execution—no rollback mechanisms, weak access controls, or poor event traceability. But in most cases, the bigger hurdle is aligning technology, risk, and operating models to support controlled autonomy.

4. When AI begins executing workflows end-to-end, how should accountability and control be redefined?

When AI executes workflows end-to-end, accountability shifts from prediction oversight to action accountability. Control must move beyond model validation to governing decisions, actions, and their real-world impact. In practical terms, the definition of “control” expands into three enforceable layers.

a) Engineered Human Oversight:

Human supervision must be built into the system: real-time monitoring, clear escalation triggers, defined override rights, and the ability to immediately pause or stop autonomous actions.

b) End-to-End Auditability:

Every action must be traceable: what was done, why, using which data, under what authority. Logs, decision trails, and approval checkpoints must be tamper-evident and reviewable.

c) Full-Chain Validation:

Governance must validate not just the model, but also the orchestration logic, tool permissions, escalation rules, and live outcome behavior, to ensure autonomy operates within defined risk tolerances.
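As one illustration of what "tamper-evident" can mean for the audit layer, the sketch below chains each log entry to the previous one with a SHA-256 hash, so altering any earlier record breaks verification. The record fields and function names are assumptions for the example. A real deployment would also need to anchor the latest hash somewhere the agent cannot write to, which is outside this sketch.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    })


def verify_chain(log: list) -> bool:
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list = []
# Each record captures what was done, why, with which data, under what authority.
append_entry(log, {"action": "fetch_documents", "why": "KYC refresh",
                   "data": "customer file", "authority": "policy rule"})
append_entry(log, {"action": "populate_form", "why": "KYC refresh",
                   "data": "fetched documents", "authority": "analyst approval"})
intact = verify_chain(log)

# Editing an earlier record after the fact is detected on verification.
log[0]["record"]["authority"] = "none"
tampered_detected = not verify_chain(log)
```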

In practice, banks are moving toward tiered autonomy:

- Tier 1 (Assist): draft, summarize, recommend (human executes)

- Tier 2 (Guarded act): agent executes low-risk steps (e.g., fetch docs, populate forms) with strict logging

- Tier 3 (Controlled autonomy): end-to-end execution with approvals at defined gates (e.g., credit decision exceptions, KYC remediation)

- Tier 4 (High autonomy): rare in BFS; requires very strong assurance, continuous monitoring, and containment design
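The tiers above can be read as a routing policy for each workflow step. The sketch below is one possible encoding, not a standard: the function name, the outcome labels, and the two boolean inputs (`low_risk`, `at_approval_gate`) are assumptions made to keep the example small.

```python
from enum import IntEnum


class Tier(IntEnum):
    ASSIST = 1
    GUARDED_ACT = 2
    CONTROLLED_AUTONOMY = 3
    HIGH_AUTONOMY = 4


def route_step(tier: Tier, low_risk: bool, at_approval_gate: bool) -> str:
    """Decide who performs a workflow step under a given autonomy tier."""
    if tier == Tier.ASSIST:
        # The agent only drafts or recommends; a human executes.
        return "human_executes"
    if tier == Tier.GUARDED_ACT:
        # The agent may act only on low-risk steps, with strict logging.
        return "agent_executes_logged" if low_risk else "human_executes"
    if tier == Tier.CONTROLLED_AUTONOMY:
        # End-to-end execution, but defined gates require human approval.
        return "await_human_approval" if at_approval_gate else "agent_executes_logged"
    # Tier 4: execution with continuous monitoring (monitoring itself not shown).
    return "agent_executes_monitored"


# Example: a credit decision exception under Tier 3 stops at the gate.
decision = route_step(Tier.CONTROLLED_AUTONOMY, low_risk=False, at_approval_gate=True)
```

Encoding the tier as data rather than as scattered conditionals means a workflow can be moved up or down a tier by changing one setting, which is easier to evidence to risk and audit functions.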

5. Where does Agentic AI create the most tangible economic impact in banking – cost, risk, or revenue?

In today’s banking landscape, the most tangible and measurable impact of Agentic AI is in cost and productivity. Internal workflows such as operations, servicing, onboarding, and investigations are easier to control and scale. Here, AI delivers operating leverage by reducing manual effort, shortening cycle times, and improving throughput. This is where banks are already seeing defensible ROI.

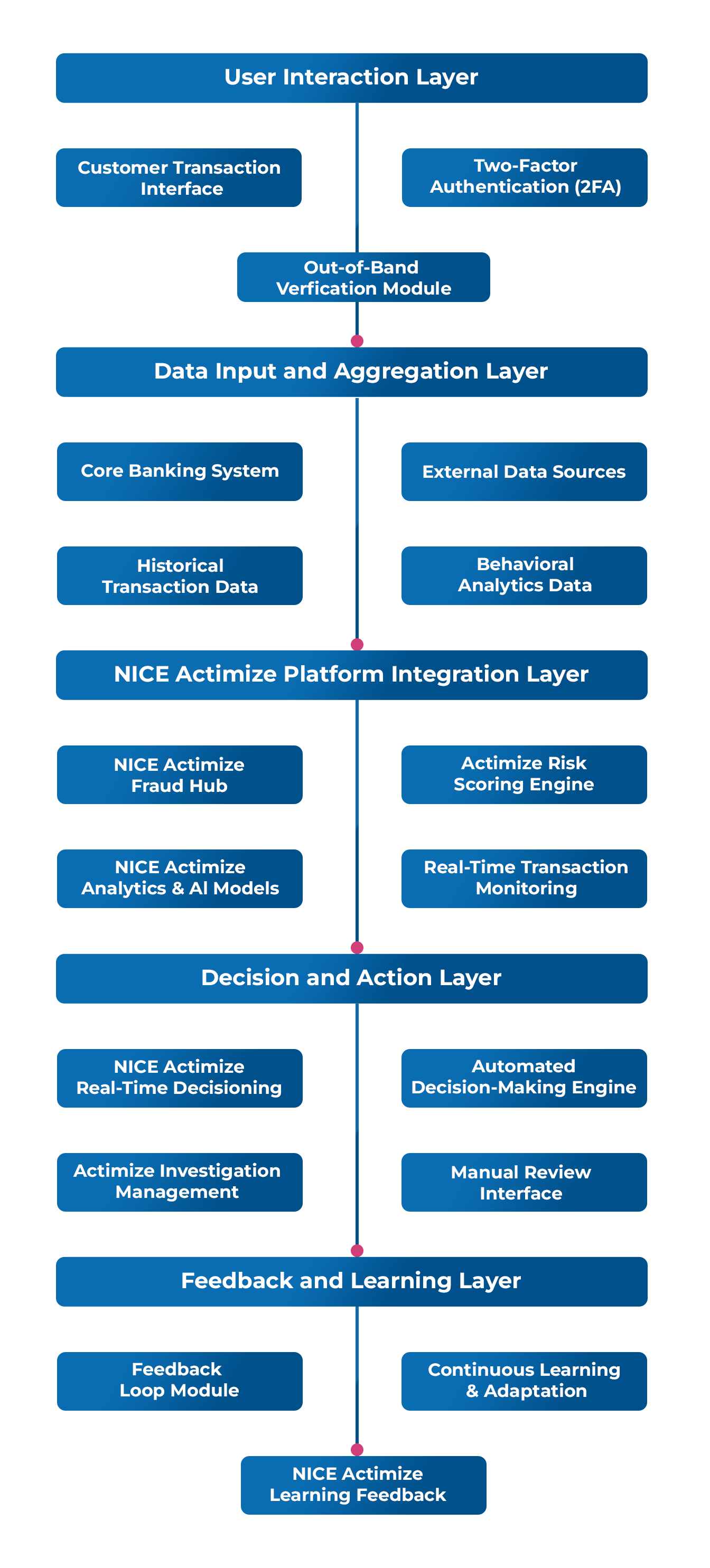

Risk impact is the next major value driver. In domains like AML and fraud, AI can materially reduce false positives, accelerate case resolution, and strengthen detection quality. The economic value appears as lower investigation costs, faster containment, and improved compliance posture.

Revenue growth is significant but slower to unlock. Client-facing autonomy requires stronger controls, regulatory confidence, and proven fairness at scale. When achieved, it can enhance personalization, speed product decisions, and improve cross-sell effectiveness—but only after trust and governance maturity are established.

In short, the trajectory for Agentic AI in banking is being set by a convergence: rapid capability expansion in multi-step AI systems, rising supervisory focus on governance and resilience, and growing policy infrastructure for high-risk AI. The winners are likely to be banks that treat Agentic AI orchestration as a regulated operating capability designed for traceability, validation, resilience, and safe shutdown, and not as “better automation”.

About The Author

Rajesh is the Senior Vice President and head of the Practice Group at Maveric. He promotes best practices and methodologies in project delivery through playbook adoption and building contextual solutions, driving talent acquisition and creating leadership bandwidth. Rajesh is also responsible for skill development in Delivery teams. With over two decades of experience in the IT industry, he works with various CXOs, the Program Office and our customer bank’s business teams to understand their challenges in ongoing delivery operations to develop solutions and accelerators. Rajesh’s leadership skills have played a vital role in identifying and developing the top talent to accelerate Maveric’s vision of becoming one of the top three bank tech specialists.
