Let me tell you about a conversation I had last month with a Chief Risk Officer at a mid-sized bank.

His team had just deployed an AI-powered loan decisioning system. Six months of development, millions in investment, cutting-edge machine learning models. It worked brilliantly in testing. Customer applications that used to take 3-4 days were approved in minutes. The executive team was thrilled.

Then, three weeks after launch, a journalist asked a question: “Can you explain why my loan was denied when someone with similar credentials was approved?”

The Chief Risk Officer couldn’t answer. The data science team couldn’t answer. The AI model, trained on years of historical data, had made a decision, but no one could explain why.

The system was pulled offline within 48 hours. The reputational damage? Still being calculated.

This wasn’t just a technology failure. It was a governance failure.

And if you’re reading this, you probably want to avoid finding yourself in the same situation.

The $200 Billion Question Nobody’s Asking

The headlines are intoxicating. According to McKinsey, generative AI could add between $200 billion and $340 billion annually to bank earnings. Every conference keynote promises AI-powered transformation. Your competitors are rushing to deploy chatbots, fraud detection systems, and robo-advisors.

But here’s what those predictions don’t tell you: only 11% of financial services executives have fully implemented responsible AI capabilities.

While 92% of companies are increasing AI investments, nine out of ten are doing it without proper governance frameworks for data quality, model testing, and third-party risk management.

That’s not bold innovation. That’s building skyscrapers without foundations.

Why “Move Fast and Break Things” Doesn’t Work in Banking

The urgency is real. When your competitors are deploying AI chatbots and you’re still waiting for the legal team to finish reviewing the governance framework, it feels like you’re bringing a knife to a gunfight.

But here’s what Silicon Valley’s favorite motto ignores: in banking, when things break, ordinary people get hit the hardest.

Consider three very expensive scenarios:

Scenario 1: The Bias Time Bomb

Your AI hiring tool, trained on 10 years of historical data, systematically rejects qualified candidates from certain neighborhoods. You discover this during a regulatory audit. The fine? $50 million. The class-action lawsuit? Another $200 million. The talent you lost? Incalculable.

Scenario 2: The Compliance Catastrophe

Your fraud detection AI flags legitimate transactions from certain demographic groups at 3x the rate of others. You’ve accidentally built a digital redlining system. The Office of the Comptroller of the Currency (OCC) is not pleased. Your CEO is testifying before Congress.

Scenario 3: The Data Breach Nightmare

Your third-party AI vendor, which you trusted to handle customer data because “everyone uses them,” suffers a breach. Millions of records compromised. The average cost of a data breach in 2024? $4.88 million across industries, and higher still in financial services. Your brand’s trustworthiness? Gone.

The Myth That’s Costing You Money

The biggest misconception about responsible AI frameworks: they slow you down.

This is expensive thinking.

Take DBS Bank, Singapore’s largest lender. The bank started its AI journey in 2014 with governance built in from day one. Its framework? Four principles they call PURE:

- Purposeful: Every AI use case has a clear business objective

- Unsurprising: Customers should never be caught off guard by how their data or AI is used

- Respectful: Customer privacy and data ethics are non-negotiable

- Explainable: Every decision can be traced and justified

The result? DBS now runs hundreds of AI models in production, from customer service to fraud detection. They’re moving ahead because of governance.

The DBS Approach: Humans in the Loop

DBS’ philosophy is simple: AI as a co-pilot, not autopilot.

Their Implementation:

- Senior-level AI committee reviews every use case for legal and ethical compliance

- Cross-functional squads of AI professionals embedded across business units

- Organization-wide AI literacy training because responsible AI isn’t just the data team’s job

- Real-time monitoring for every AI decision in production

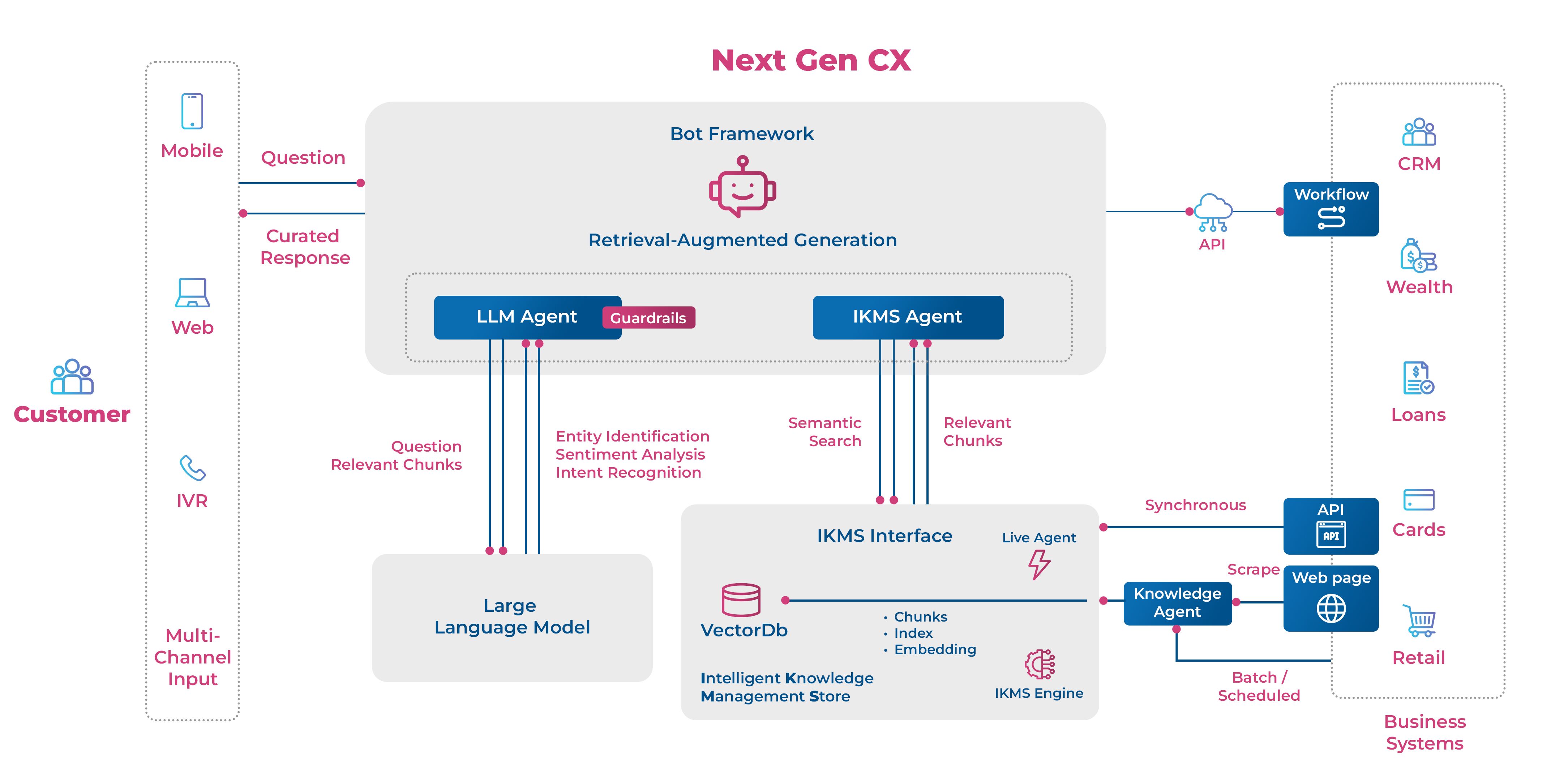

The Result: DBS has deployed generative AI tools like their CSO Assistant, which helps customer service officers by transcribing conversations in real-time, retrieving information instantly, and suggesting responses, all while maintaining human oversight and decision-making authority.

The Real Cost of Getting This Wrong

a) Regulatory Reality:

The regulatory landscape is changing fast. Across 75 countries, legislative mentions of AI rose by more than a fifth in a single year, a ninefold increase since 2016. The EU AI Act is already in force. The U.S. OCC has updated its guidance on model risk management specifically for AI systems. India’s RBI has released its Framework for Responsible AI in Financial Services.

b) Reputation Risk:

Customers expect banks to protect not just their money, but their data and privacy. One AI mishap, and that trust evaporates. In an era where customers can switch banks with a few taps on their phone, trust is your only moat.

c) Investment Risk:

Organizations that neglect proper governance frameworks often find their AI investments underperforming significantly. In the worst cases, these investments deliver virtually no business value whatsoever.

You’re not saving money by skipping governance. You’re deferring and multiplying your costs.

The Regulatory Frameworks You Need to Know

United States:

- SR 11-7 Guidance: Model Risk Management framework extended to AI/ML systems

- OCC Updates (2024): Emphasis on human accountability and transparent model documentation

- Focus: Explainability, validation, and independent oversight

European Union:

- EU AI Act (2024): World’s first comprehensive AI regulation

- EBA Guidelines: Machine learning in risk-based models

- Focus: High-risk AI categorization, transparency, human oversight

United Kingdom:

- FCA-PRA AI Principles (2023-2024): Governance standards for financial services

- Focus: Explainable outcomes, traceable data lineage, bias mitigation

India:

- RBI Framework for Responsible AI (2024): Mandatory explainability and auditability

- Gopalakrishnan Committee Recommendations: Transparency and consent-driven operations

- Focus: Consumer protection and systemic trust

Singapore:

- MAS AI Guidelines (2024): Risk management and governance frameworks

- Focus: Data governance and ethical AI deployment

The Frameworks That Deliver Results

The EY Responsible AI Framework: Three Domains, Nine Attributes

EY has developed a comprehensive framework that builds control across:

Three Governance Domains:

- Purposeful Design: Embedding ethics and safety from conception

- Agile Governance: Flexible frameworks that evolve with technology

- Vigilant Supervision: Continuous monitoring and accountability

Nine Trust Attributes:

- Accountability

- Reliability

- Fairness

- Data Protection

- Security

- Transparency

- Compliance

- Sustainability

- Explainability

Financial institutions using this framework have successfully deployed AI at scale, keeping both regulatory compliance and customer trust intact.

How to Implement Without Grinding to a Halt

“Okay, this sounds great, but we have 17 AI projects in various stages of development. How do we implement governance without shutting everything down?”

Here’s the pragmatic approach:

Phase 1: Risk Assessment (Weeks 1-2)

Not all AI is created equal. A chatbot that answers FAQs doesn’t carry the same risk as a model making a $100 million lending decision.

Create a Risk Matrix:

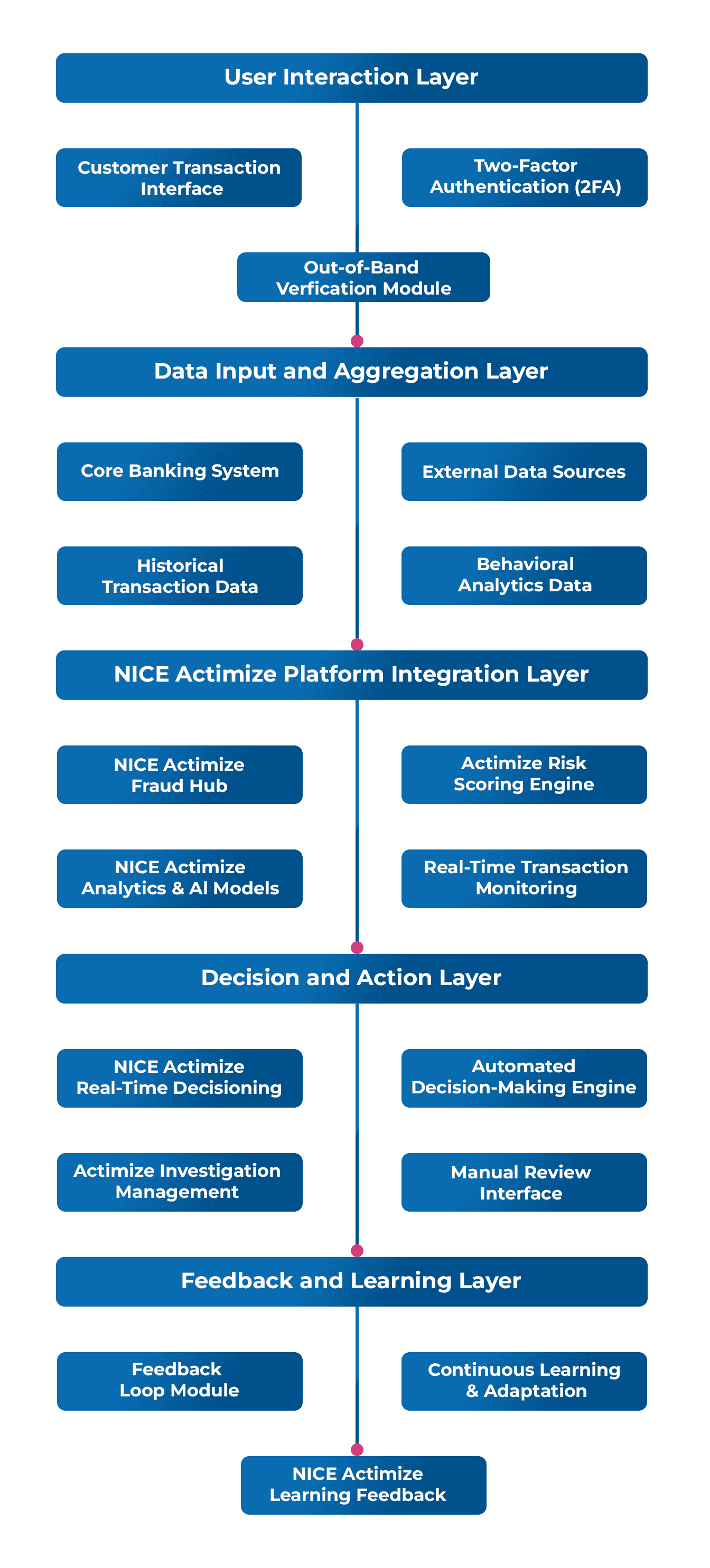

- High Risk: Credit decisioning, fraud detection, employee hiring, customer pricing

- Medium Risk: Customer service chatbots, document processing, predictive analytics

- Low Risk: Internal productivity tools, data summarization, research assistants

Prioritize governance for high-risk systems first.
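If a spreadsheet feels too loose, the risk matrix can live in a few lines of code. The sketch below is only an illustration: the tier mapping copies the examples above, while the function names and the choice to treat unknown use cases as high risk until someone reviews them are assumptions, not an established standard.

```python
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Illustrative mapping based on the tiers listed above; extend it for your own portfolio.
USE_CASE_TIERS = {
    "credit decisioning": RiskTier.HIGH,
    "fraud detection": RiskTier.HIGH,
    "employee hiring": RiskTier.HIGH,
    "customer pricing": RiskTier.HIGH,
    "customer service chatbot": RiskTier.MEDIUM,
    "document processing": RiskTier.MEDIUM,
    "predictive analytics": RiskTier.MEDIUM,
    "internal productivity tool": RiskTier.LOW,
    "data summarization": RiskTier.LOW,
    "research assistant": RiskTier.LOW,
}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a known use case.

    Assumption: anything not yet in the mapping is treated as HIGH until reviewed.
    """
    return USE_CASE_TIERS.get(use_case.strip().lower(), RiskTier.HIGH)


def governance_queue(projects: list) -> list:
    """Order projects so that high-risk systems are reviewed first."""
    order = {RiskTier.HIGH: 0, RiskTier.MEDIUM: 1, RiskTier.LOW: 2}
    return sorted(projects, key=lambda p: order[classify(p)])


queue = governance_queue(["data summarization", "document processing", "credit decisioning"])
```

Defaulting to high risk is deliberately conservative: a new project has to earn its way into a lower tier rather than slip through unclassified.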

Phase 2: Quick Wins (Weeks 3-4)

Implement the basics that deliver immediate value:

Explainability Dashboard: For every AI decision, capture: What data was used? What factors mattered most? What alternative outcomes were considered? Can a human review and override?
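Before there is a dashboard, there has to be a record. Here is a minimal sketch of what one captured decision might look like, with one field per question above. The class name, field names, and sample values are all hypothetical; your own schema will depend on your models and your regulators.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    decision_id: str
    outcome: str
    inputs_used: dict              # What data was used?
    top_factors: list              # What factors mattered most? (name, contribution) pairs
    alternatives_considered: list  # What alternative outcomes were considered?
    human_can_override: bool       # Can a human review and override?
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record so it can be stored in an audit log."""
        return json.dumps(asdict(self), sort_keys=True)


# Made-up example values, for illustration only.
record = DecisionRecord(
    decision_id="L-001",
    outcome="denied",
    inputs_used={"income": 52000, "debt_to_income": 0.47},
    top_factors=[("debt_to_income", -0.42), ("credit_history_months", -0.18)],
    alternatives_considered=["approved", "refer_to_underwriter"],
    human_can_override=True,
)
audit_line = record.to_json()
```

If every production decision leaves a line like this behind, the journalist’s question from the opening story has somewhere to start.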

Bias Testing Protocol: Run your models through these tests: Demographic parity, Equal opportunity, Predictive parity. If you’re seeing significant differences across protected groups, you have a problem.
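To make the three tests concrete, here is a small sketch in plain Python. It assumes binary labels where 1 is the favorable outcome, and it uses the common textbook readings of the tests: demographic parity compares selection rates, equal opportunity compares true positive rates, and predictive parity compares precision. The function names and the toy data are made up for illustration.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and precision."""
    counts = defaultdict(lambda: {"n": 0, "pred_pos": 0, "actual_pos": 0, "true_pos": 0})
    for actual, predicted, group in zip(y_true, y_pred, groups):
        c = counts[group]
        c["n"] += 1
        c["pred_pos"] += predicted
        c["actual_pos"] += actual
        c["true_pos"] += actual * predicted

    def ratio(numerator, denominator):
        # A group with an empty denominator yields NaN rather than a misleading zero.
        return numerator / denominator if denominator else float("nan")

    return {
        group: {
            "selection_rate": ratio(c["pred_pos"], c["n"]),               # demographic parity
            "true_positive_rate": ratio(c["true_pos"], c["actual_pos"]),  # equal opportunity
            "precision": ratio(c["true_pos"], c["pred_pos"]),             # predictive parity
        }
        for group, c in counts.items()
    }


def max_gap(rates, metric):
    """Largest difference in a metric between any two groups."""
    values = [r[metric] for r in rates.values()]
    return max(values) - min(values)


# Toy data: 1 = approved (prediction) or repaid (actual outcome).
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = group_rates(y_true, y_pred, groups)
gaps = {m: max_gap(rates, m) for m in ("selection_rate", "true_positive_rate", "precision")}
```

In this toy data both groups are approved at the same rate, so demographic parity looks fine, yet the true positive rate and precision gaps are large. That is why running a single test is not enough.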

Human Oversight Mechanism: Every high-stakes AI decision needs a human checkpoint. Not to rubber-stamp, but to genuinely review and override when necessary.
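One way to make the checkpoint hard to skip is to put it in the release path itself. The sketch below assumes a hypothetical `Decision` record and a confidence threshold of 0.90; both are placeholders to be replaced with values from your own validation work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    decision_id: str
    risk_tier: str       # "high", "medium", or "low"
    model_outcome: str   # e.g. "approve" or "deny"
    confidence: float    # the model's own score in [0, 1]


# Hypothetical threshold; set it from validation data, not intuition.
CONFIDENCE_FLOOR = 0.90


def needs_human_review(decision: Decision) -> bool:
    """High-risk decisions always get a reviewer; others only when the model is unsure."""
    return decision.risk_tier == "high" or decision.confidence < CONFIDENCE_FLOOR


def finalize(decision: Decision, reviewer_outcome: Optional[str] = None) -> str:
    """Return the outcome to release, refusing to release unreviewed high-stakes decisions."""
    if needs_human_review(decision):
        if reviewer_outcome is None:
            raise ValueError(f"{decision.decision_id} requires human review before release")
        # The reviewer's call wins, even when it overrides the model.
        return reviewer_outcome
    return decision.model_outcome
```

Because `finalize` raises instead of silently falling back to the model, a missing review shows up as an error rather than as a rubber stamp.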

Phase 3: Parallel Roadmaps (Ongoing)

Don’t choose between innovation and governance. Do both simultaneously with parallel roadmaps.

Innovation Track: Your data scientists continue building, experimenting, and pushing boundaries.

Governance Track: Your compliance and risk teams develop frameworks, testing protocols, and monitoring systems.

Integration Points: Regular synchronization meetings where innovation meets governance before anything hits production.

Phase 4: Continuous Monitoring

AI models don’t stay static. Data drifts. Populations change. What worked six months ago might be dangerously biased today.

Implement Continuous Monitoring:

- Model Performance Tracking: Are accuracy rates declining?

- Bias Monitoring: Are outcomes becoming skewed over time?

- Data Quality Checks: Is your training data still representative?

- Regulatory Compliance Scans: Are you still meeting evolving requirements?
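For the data question in particular, one widely used drift check is the Population Stability Index (PSI), which compares how a feature or score was distributed at training time with how it looks today. The sketch below is a bare-bones version with hand-picked bin edges and made-up samples; the 0.25 alert level mentioned in the comments is a common rule of thumb, not a regulatory threshold.

```python
import math
from bisect import bisect_right


def population_stability_index(expected, actual, bin_edges):
    """PSI between a baseline sample and a recent sample over fixed bins.

    Values outside the edge range are counted in the first or last bin.
    """
    n_bins = len(bin_edges) - 1

    def proportions(values):
        if not values:
            raise ValueError("cannot compute PSI on an empty sample")
        counts = [0] * n_bins
        for v in values:
            index = min(max(bisect_right(bin_edges, v) - 1, 0), n_bins - 1)
            counts[index] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]   # scores at training time
recent_scores = [0.7, 0.8, 0.8, 0.9, 0.9, 0.9, 1.0, 1.0]     # scores seen this month

psi = population_stability_index(baseline_scores, recent_scores, EDGES)
# Rule of thumb (an assumption, tune for your models): PSI above 0.25 deserves investigation.
drift_alert = psi > 0.25
```

Run on a schedule, a check like this turns “is our data still representative?” from a quarterly debate into an alert.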

The Real-World ROI of Responsible AI

DBS Bank:

- Hundreds of AI models in production

- Improved customer satisfaction

- Enhanced operational efficiency

- Zero major AI-related compliance incidents

- Competitive advantage: Customers trust them with AI

Organizations with Compliance-by-Design:

- ~35% reduction in audit time: Because everything is documented and explainable

- ~80% reduction in false positives: Well-governed models perform better

- Lower regulatory penalty risk: Compliance is built in, not bolted on

- 25-40% reduction in operational costs: AI actually delivers on its promises

The Pattern: Institutions that build explainability, fairness, and accountability from the outset don’t just avoid disasters—they move faster, deploy more confidently, and realize better returns on their AI investments.

Five Things You Can Do Tomorrow

Here’s your action plan for Monday morning:

1. Form an AI Governance Committee (2 hours)

- Chief Risk Officer

- Chief Data Officer

- Head of Compliance

- Chief Technology Officer

- Head of Legal

- A business unit leader (they need skin in the game)

First meeting agenda: Inventory of all AI projects and quick risk classification.

2. Implement the “Explain This” Test (30 minutes)

For every AI system currently in production or development, ask: “If a regulator, journalist, or customer asked us to explain this decision, could we?”

If the answer is no, that’s now your highest priority project.

3. Create an AI Risk Register (1 hour)

A simple spreadsheet tracking:

- AI system name

- Business purpose

- Risk classification (high/medium/low)

- Data sources

- Approval status

- Last governance review date

- Known issues

You can’t manage what you don’t measure.
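If you would rather start from a file than a blank spreadsheet, the register can be sketched with Python’s standard `csv` module. The column names mirror the list above; the sample rows, the helper names, and the 90-day review window are invented for illustration.

```python
import csv
import io
from datetime import date

REGISTER_FIELDS = [
    "system_name", "business_purpose", "risk_classification",
    "data_sources", "approval_status", "last_review_date", "known_issues",
]


def write_register(rows, handle):
    """Write register rows as CSV, rejecting unknown risk classifications."""
    writer = csv.DictWriter(handle, fieldnames=REGISTER_FIELDS)
    writer.writeheader()
    for row in rows:
        if row["risk_classification"] not in {"high", "medium", "low"}:
            raise ValueError(f"unknown risk classification for {row['system_name']}")
        writer.writerow(row)


def overdue_reviews(rows, today, max_age_days=90):
    """Names of systems whose last governance review is older than max_age_days."""
    return [
        row["system_name"]
        for row in rows
        if (today - date.fromisoformat(row["last_review_date"])).days > max_age_days
    ]


# Made-up entries, for illustration only.
rows = [
    {"system_name": "loan-decisioning", "business_purpose": "Retail credit approval",
     "risk_classification": "high", "data_sources": "core banking; credit bureau",
     "approval_status": "approved", "last_review_date": "2025-01-15",
     "known_issues": "explainability gap"},
    {"system_name": "faq-chatbot", "business_purpose": "Answer customer FAQs",
     "risk_classification": "medium", "data_sources": "public help articles",
     "approval_status": "approved", "last_review_date": "2025-05-20",
     "known_issues": ""},
]

buffer = io.StringIO()
write_register(rows, buffer)
overdue = overdue_reviews(rows, today=date(2025, 6, 1))
```

The overdue check is the part a spreadsheet tends to miss: a register only helps if something nags you when a review date goes stale.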

4. Establish Minimum Governance Standards (2 hours)

Before any AI system goes to production, it must have:

- Documented business purpose and success metrics

- Bias testing results

- Explainability mechanism

- Human oversight protocol

- Monitoring plan

- Rollback procedure

Make this non-negotiable.
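“Non-negotiable” is easier to enforce when the checklist is a gate rather than a memo. As a rough sketch, assuming each release arrives as a dictionary of links or documents (the key names and example paths here are hypothetical):

```python
REQUIRED_ARTIFACTS = (
    "business_purpose_and_metrics",
    "bias_testing_results",
    "explainability_mechanism",
    "human_oversight_protocol",
    "monitoring_plan",
    "rollback_procedure",
)


def missing_artifacts(submission: dict) -> list:
    """List required items that are absent or empty in a release submission."""
    return [name for name in REQUIRED_ARTIFACTS if not submission.get(name)]


def approve_for_production(submission: dict) -> bool:
    """A release passes only when every required artifact is present."""
    return not missing_artifacts(submission)


# Example submission with two gaps: no monitoring plan and an empty rollback entry.
submission = {
    "business_purpose_and_metrics": "docs/purpose.md",
    "bias_testing_results": "reports/bias-2025-05.pdf",
    "explainability_mechanism": "docs/explainability.md",
    "human_oversight_protocol": "docs/oversight.md",
    "rollback_procedure": "",
}
gaps = missing_artifacts(submission)
```

Wired into a deployment pipeline, a check like this means a model without a rollback procedure simply does not ship.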

5. Start Training Your People (Ongoing)

Responsible AI isn’t just the compliance team’s job. It’s everyone’s job. From executives to data scientists to customer service reps, everyone needs basic AI literacy.

If you’ve read this far, you’re already ahead of the 89% of financial services executives who haven’t fully implemented responsible AI capabilities.

Responsible AI can be your competitive moat.